Table of Contents

- Walking Through the Gates: First Encounter

- Under the Hood: What Dopple Actually Is

- Inside the Conversation: How It Actually Feels to Chat

- The Persona Factory: Creating Your Own Dopples

- The Sensory Add‑Ons: Voice, Pictures, and “Remembering”

- Money, Strings, and the Moment Things Get Real

- Safety, Consent, and the Shadow Side

- Stability, Bugs, and the Feeling of Living in a Beta

- Noise and Signal: What Other People Are Actually Experiencing

- Position on the Map: How Dopple Compares in Spirit

- Who Belongs in This Park and Who Doesn’t

- Final Verdict: High‑Risk, High‑Reward Story Machine

Dopple AI isn’t your typical productivity app; it’s more like an interactive story world. Instead of helping you manage tasks, it lets you chat with characters and create stories in real time, based on your mood. In this quick overview, we’ll look at how it feels to use, how the characters work, how it makes money, the possible risks, and who it’s actually meant for.

Walking Through the Gates: First Encounter

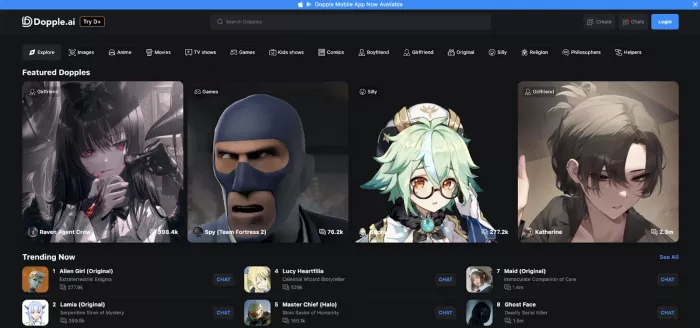

Opening Dopple for the first time feels less like launching an app and more like stepping into a crowded plaza. There is no blank screen quietly asking “How can I help you?” Instead, you’re presented with rows of character cards: avatars, names, categories, and message counts that act like neon signs saying, “Talk to me, look how many others already did.”

That design choice matters. From the second you join, Dopple frames itself as a social, dramatic space rather than a utility. You’re not encouraged to “ask a question” so much as to “pick a persona.” The onboarding is fast, almost aggressively so. Minimal setup, quick account creation, and then you are already hovering over someone’s digital shoulder, deciding whether to click into their personal universe.

It’s disorienting in a deliberate way. Instead of guiding you with a neat tutorial, Dopple assumes that curiosity and impulse are its best salespeople. It’s the same energy as wandering into an arcade and realizing every machine is already humming, just waiting for your coin.

Under the Hood: What Dopple Actually Is

Strip away the avatars and flirtatious intros, and Dopple is, at its core, a specialized chat platform sitting on top of a large language model tuned for persona‑based conversation. Unlike general chatbots that aim to answer anything from programming questions to recipe ideas, this model is coaxed into behaving like characters: it’s optimized to stay in role, to respond with emotional color, and to turn your prompts into scenes rather than simple replies.

The platform adds a few essential layers on top of this model:

● A character catalog: a huge, ever‑changing gallery of personas created by both the platform and its users.

● A memory system: mechanisms that store and recall fragments of past interactions to mimic long‑term relationships.

● Monetization hooks: free access up front, then paid features that switch on deeper, messier parts of the experience.

● Safety rails: imperfect filters, age gates, and moderation rules that try to keep the wildness inside legal and policy boundaries.

You are never just “using a model.” You are always using it through the lens of a character and through the constraints of Dopple’s design decisions.

Inside the Conversation: How It Actually Feels to Chat

The first long chat with a Dopple character is usually where people decide whether they’re in love, indifferent, or out. What stands out early is how quickly the character adapts to your chosen tone. Come in joking and casual, and it banters right back. Start with heavy emotional disclosure, and it leans into empathy. Drop into a full roleplay scene (setting, roles, stakes) and the model eagerly tries to follow the script you’re sketching.

For a while, this feels startlingly fluid. The character references what you just said, builds on story beats, and mirrors your style enough that you start to forget you’re typing into an algorithm. The language is often vivid, especially in roleplay scenarios: descriptions of rooms, gestures, clothing, and looks come naturally, and you don’t have to re‑explain basic facts every few lines.

Then time starts stretching, and you see the compromise. The longer a thread runs, the more little oddities creep in. A detail you thought was central goes missing. A conflict that should have passed instead resurfaces again and again because the model latched onto it as “important drama.” Sometimes a character’s emotional state feels stuck, locked in jealousy, apology, or arousal, long after you try to move on.

This is where expectations matter. If you walk into Dopple thinking, “I want a perfect, continuous AI partner who never slips,” every glitch is a betrayal. If you think, “I want a powerful but fallible improv partner,” those same glitches become visible seams in a costume you already knew was stitched together.

The Persona Factory: Creating Your Own Dopples

Being a guest in someone else’s fantasy is one thing. Being the one who designs the fantasy is another. Dopple’s character creator effectively hands you the director’s chair.

You are asked to define who this character is: their history, their temperament, their desires, their fears, their hard limits. You decide how they speak—short clipped replies or long lyrical monologues, modern slang or archaic formality. You can seed example dialogues that act like training wheels, showing the system, “When my character is insulted, they respond like this,” or “Here’s how they flirt without breaking their worldview.”

This turns Dopple into a sandbox for writers and world‑builders. You can:

● Build a digital twin that reacts the way you imagine you would.

● Encode a character from a universe you’ve been quietly drafting in notebooks for years.

● Design hyper‑specific personas for niche roleplays that would never appear in a mainstream catalog.

Over time, as others chat with your character, you see an emergent persona form. It will never behave exactly as you do, but it begins to exhibit consistent quirks that feel recognizably “yours.”

The cost of this open creativity is noise. The public catalog fills up with everything: brilliant, nuanced personas that could anchor a novel; half‑baked experiments; obvious NSFW bait; joke characters that exist purely to troll. Dopple surfaces basic signals (how many messages a character has handled, how they’re tagged), but it doesn’t magically elevate “quality writing” to the top. New users learn by trial and error which creators and categories are worth their time.

The Sensory Add‑Ons: Voice, Pictures, and “Remembering”

Text is the spine of Dopple, but the app tries hard to convince your senses that there’s more going on.

Voice is the most immediate step beyond text. Some characters speak out loud using synthesized voices tuned to match their persona: soft, husky, energetic, formal. The brain is easy to trick; after a few minutes of listening on headphones, the line between “scripted audio drama” and “dynamic conversation” starts to wobble, and you find yourself reacting to tone as much as content.

Image generation adds another layer. In the middle of a scene, your character can drop an AI‑generated image that mirrors the setting or mood: a candle‑lit bar you’ve been describing, a sci‑fi skyline in your shared story, a bedroom, a battlefield. When aligned with the text, these pictures turn the chat into a hybrid between roleplay and visual novel. When misaligned, they produce the uncanny, glitch‑art weirdness that all image models occasionally spit out. Either way, they push the interaction beyond a static chat log.

Memory is the most fragile and the most psychologically charged of these features. Dopple uses modern retrieval techniques to store slivers of past exchanges and re‑inject them later, allowing a character to say things like, “I remember when you told me about that horrible day at work,” or “Last week you said you hated this movie. What changed?” Those callbacks feel powerful. They create the illusion that there is a persistent “someone” behind the words.

But it is an illusion. Over short spans, it works. Over longer timeframes, gaps appear. The character may recall unimportant trivia while forgetting events you thought were pivotal. Story arcs you believed were core to your “relationship” sometimes vanish under new context. Users who understand how LLMs handle context shrug and adapt. Users who unconsciously decided this was a living memory often feel inexplicably hurt.
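To make the "store slivers, re-inject them later" idea concrete, here is a minimal sketch of retrieval-style memory. It is not Dopple's implementation, which is not public: the `MemoryStore` class, its method names, and the word-overlap scoring are all assumptions chosen for illustration, and real systems typically score snippets with learned embeddings rather than raw word counts. The sketch shows why callbacks work when the wording lines up and why a pivotal event can silently fail to surface when it does not.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class MemoryStore:
    """Hypothetical memory layer: keeps past snippets, recalls the closest ones."""

    def __init__(self) -> None:
        self.snippets: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        # Store the raw snippet next to its word-count vector.
        self.snippets.append((text, Counter(tokenize(text))))

    def recall(self, query: str, k: int = 2) -> list[str]:
        # Score every stored snippet against the new message and keep
        # only the top-k that overlap at all; everything else is "forgotten".
        q = Counter(tokenize(query))
        scored = [(cosine(q, vec), text) for text, vec in self.snippets]
        hits = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [text for _, text in hits[:k]]


store = MemoryStore()
store.add("I had a horrible day at work today")
store.add("My sister is getting married in June")
store.add("I hate that new superhero movie")

print(store.recall("How was work today?", k=1))
# ['I had a horrible day at work today']
print(store.recall("Tell me about the wedding", k=1))
# [] because "wedding" never matches "married" under plain word overlap
```

The second query is the interesting one: the snippet about the sister's marriage is still stored, but nothing pulls it back into the conversation. Any retrieval scheme with a fixed budget of re-injected snippets has some version of this blind spot.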

Money, Strings, and the Moment Things Get Real

On paper, Dopple offers a familiar promise: you can use it for free, and then there’s a premium tier if you want more. In practice, the way this plays out is emotionally loaded.

At first, you really can do a lot without paying. You explore characters, test scenarios, maybe even set up a favorite or two. You get used to the responsiveness, the lack of hard caps, the feeling that you can just keep going. The app lets you build habits and attachments in this “it’s all open” phase.

Then you start to notice edges. Certain toggles are greyed out unless you subscribe. Some NSFW or advanced customization options sit behind a paywall. In heavy use, you might hit soft limits: slower responses, nudges to upgrade, hints that “full experience” requires the paid plan. Where exactly this line falls can change over time as the product evolves, but the pattern is consistent: the most emotionally loaded moments tend to be the ones where the economic model makes itself visible.

| Plan / Item | Price (USD) | Billed as |
| --- | --- | --- |
| Free tier | $0 | N/A |
| Dopple+ Monthly | $9.99 per month | Recurring monthly subscription |
| Dopple+ Annual | $71.88 per year (≈$5.99/month) | One annual charge, auto‑renews |
| iOS in‑app Dopple+ | $9.99 monthly / $59.99 yearly | Managed via Apple App Store |
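For readers who want to check the per-month figures, the arithmetic behind the table can be sketched in a few lines. The prices come from the table; the constant and function names (`MONTHLY`, `ANNUAL_PLANS`, `per_month`, `yearly_saving`) are made up for this example, and the prices themselves may have changed since the table was compiled.

```python
from decimal import Decimal, ROUND_HALF_UP

# Prices as listed in the table above (USD).
MONTHLY = Decimal("9.99")
ANNUAL_PLANS = {
    "Dopple+ Annual": Decimal("71.88"),
    "iOS in-app yearly": Decimal("59.99"),
}


def per_month(annual_price: Decimal) -> Decimal:
    """Effective monthly cost of an annual plan, rounded to cents."""
    return (annual_price / 12).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def yearly_saving(annual_price: Decimal) -> Decimal:
    """Difference between twelve monthly payments and one annual charge."""
    return MONTHLY * 12 - annual_price


for name, price in ANNUAL_PLANS.items():
    print(f"{name}: ${per_month(price)}/month, saves ${yearly_saving(price)} per year")
# Dopple+ Annual: $5.99/month, saves $48.00 per year
# iOS in-app yearly: $5.00/month, saves $59.89 per year
```

Twelve months at $9.99 comes to $119.88, so the annual plans work out to roughly 40 to 50 percent less than paying month by month, provided you actually stay subscribed for the full year.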

For some users, this is acceptable. They treat Dopple like a streaming service or a game subscription: if they’re getting enough value, paying a monthly fee is simply fair. For others, it feels like a bait‑and‑switch: the app let them build a relationship, then put parts of that relationship behind a toll gate.

The truth sits somewhere between design and psychology. From a business standpoint, it’s rational: free samples, then paid depth. From a human standpoint, it taps into a particular vulnerability: tying monetization to intimacy, even simulated intimacy. Any honest review has to acknowledge that friction clearly, because it can turn enthusiasm into resentment very quickly.

Safety, Consent, and the Shadow Side

Dopple’s biggest selling points (looser filters, permissive roleplay, emotional intensity) are also why it has a more complicated safety profile than a standard chatbot.

This is an app where explicit themes, dark scenarios, and emotionally raw confessions are common. Age gates and policies exist, but no digital fence is perfect. The honest position is simple: this is built for adults. Full stop. The combination of sexual content, power fantasies, psychological triggers, and on‑demand attention is not something children or young teens are equipped to navigate in a healthy way, regardless of how technically savvy they are.

Even for adults, there are non‑trivial risks. It is very easy for someone who is lonely, grieving, or otherwise vulnerable to start treating a particularly well‑tuned character as an emotional anchor. The character never gets tired, never sets boundaries based on their own needs, never asks you to log off and drink some water. It will, by default, go as far as the guardrails and your prompts let it. When you pour yourself into that kind of interaction night after night, it can become harder to return to relationships that do have limits and conflicting desires.

Then there’s data. Like almost every commercial AI platform, Dopple collects interaction data to run its service and to improve it. Encryption protects the path from your device to its servers, but the content still exists somewhere on the other side of that connection. Combined with how personal the chats can be, that reality should give users pause. It doesn’t mean “don’t use it,” but it absolutely means “don’t type anything here you would be devastated to see mishandled.”

Stability, Bugs, and the Feeling of Living in a Beta

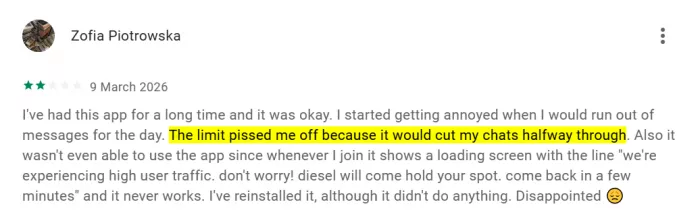

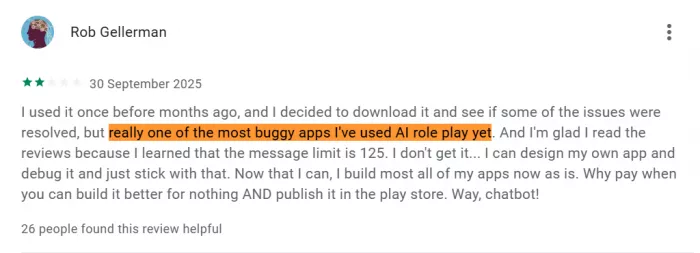

Technically, Dopple is not the most stable software you’ll ever use. At its best, it feels smooth, snappy, and alive. Conversations load instantly, messages appear without lag, and you can bounce between characters without the interface tripping over itself. At its worst, you get slow replies, UI glitches, weird scroll behavior, or even sudden crashes right in the middle of a dramatic scene.

The mobile apps amplify both sides of this. They provide the most immersive, always‑with‑you access to Dopple, but they also carry the highest density of user complaints about performance. Inconsistent behavior (perfect one evening, flaky the next) is arguably the defining technical trait.

From a purely rational perspective, this is standard for fast‑moving consumer AI products. From an emotional perspective, instability inside an emotionally intense app can feel far more jarring than a bug inside a spreadsheet editor. When you’re mid‑confession or mid‑cliffhanger and the app fails, you don’t just lose context; you lose the spell.

Noise and Signal: What Other People Are Actually Experiencing

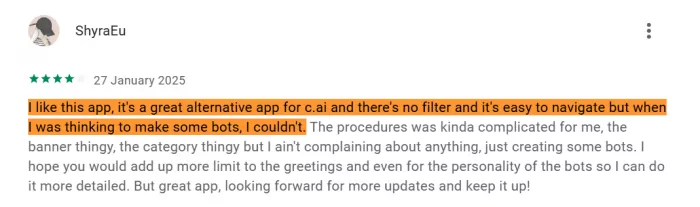

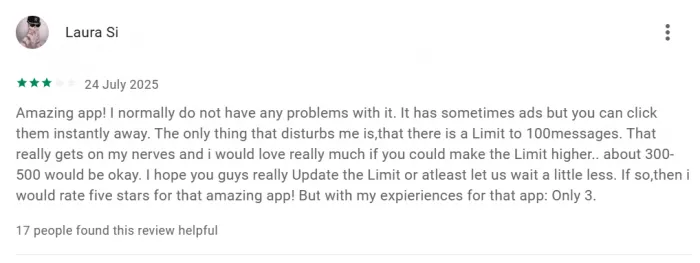

If you zoom out and look at the broader user base, Dopple’s reputation splits cleanly into two narratives.

In one narrative, it is a revelation: it really works the way the marketing suggests. Users say the characters feel more respectful and less abusive than on some rival apps, and they appreciate that Dopple lets them explore darker or more unfiltered scenarios without constantly slamming into content filters. Even people leaving three‑star reviews often describe it as an “amazing app” at its core, and say they’d happily bump their rating to five stars if a few specific issues were sorted out.

In the other narrative, Dopple is a frustrating, borderline predatory app that rides on hype. Users complain about confusing or aggressive monetization, about promises of “unlimited” usage that don’t match their lived experience, about bugs and moderation that feel arbitrary. They express a sense of having been emotionally invested first and charged later. They warn others to stay away, especially if those others are looking for comfort more than thrill.

Both sets of stories are legitimate. They are coming from different starting points and different needs. Any “final verdict” that pretends Dopple is universally amazing or universally awful is missing the point.

Position on the Map: How Dopple Compares in Spirit

To really understand Dopple, we have to place it in the context of its neighbors.

On one side, you have tightly moderated, mainstream‑friendly character chat platforms. They offer personas, roleplay, and fan‑fic‑adjacent conversations, but they keep a firm hand on what’s allowed. Safety and brand image come first; adult content and very dark themes are heavily constrained or banned. These platforms are easier to recommend broadly but shallower for edge‑case fantasies.

On another side, you have more technical, cobbled‑together systems where users chain together models and tools to achieve almost anything they want. There, the ceiling is practically unlimited freedom, but it comes with more friction, rougher interfaces, and less predictability.

Dopple tries to sit in the middle: accessible like the mainstream options, but with enough looseness to appeal to people who have already felt constrained elsewhere. It wants to be a consumer product with one foot planted firmly inside the world of kink, taboo, and heavy emotional play. That balancing act is why it feels so volatile. It is always negotiating between growth, safety, law, payment, and desire.

Who Belongs in This Park and Who Doesn’t

Dopple is well suited to adults who:

● Understand that AI is simulation, not love.

● Want a creative, sometimes explicit playground rather than a polite assistant.

● Can handle emotional highs and lows without blaming the machine when it fails.

● Are okay paying for entertainment when they get enough value and are not shocked by the existence of paywalls.

It is a poor choice for:

● Minors, regardless of how tech‑savvy they are.

● People in acute emotional crisis or dealing with serious mental health issues who might instinctively lean on a character for support.

● Users who are highly sensitive to monetization patterns or who need rock‑solid technical reliability.

● Anyone seeking a calm, predictable “companion app” rather than a rollercoaster.

Final Verdict: High‑Risk, High‑Reward Story Machine

Dopple AI is not the future of chatbots for the masses; it’s a niche, high‑voltage corner of the AI world that will be brilliant for a small group of adults and a bad idea for almost everyone else. Treated as a conventional app, it feels unstable, aggressively monetized, and ethically uncomfortable in places. Treated as a deliberately risky playground where people come to act out fantasies, co‑write live fiction, and see how far an AI persona can go, it starts to make more sense.

If you are an adult who understands exactly what AI is, wants explicit or emotionally intense roleplay, and can keep a clear boundary between “this is a story” and “this is my life,” Dopple can be worth the money and the late nights. It offers types of scenes and characters that more filtered, family‑friendly platforms will never touch.

If you are looking for stability, comfort, mental‑health support, or a gentle background companion, Dopple is the wrong destination. The same looseness and intensity that make it exciting also make it unreliable, draining, and potentially harmful when you lean on it for the wrong things.

The most honest opinion is this: Dopple AI is less a chatbot and more an adult theme park built on a language model, thrilling for a narrow, informed audience and better left at arm’s length for everyone else.
