The most advanced object in the average classroom used to be the laminator. Two years after ChatGPT shipped, that title belongs to whatever cloud the school's preferred LLM happens to live in. Adoption did not creep; it sprinted. Ninety-two percent of students now use AI for coursework, sixty percent of teachers use it weekly, and most school boards are still drafting the policy for what happened last semester.

This article looks at six AI-powered learning platforms that are actually moving numbers, not just press releases. Each gets a tight review, a pros and cons table that avoids the obvious, and a use case table built around real scenarios. The aim is to leave you with a usable map of who should buy what, rather than a feature list copied from a vendor deck.

The Market Is Not Pretending Anymore

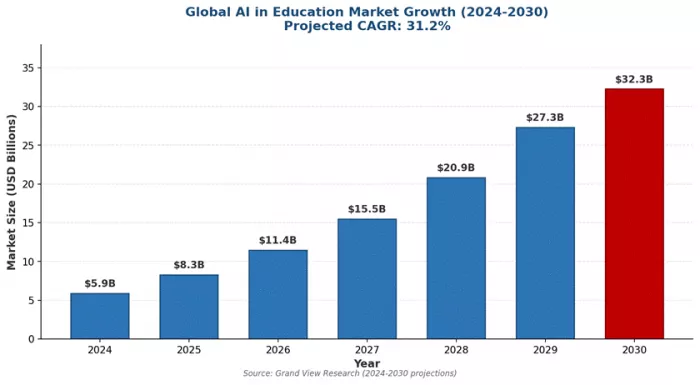

Three forecasts explain why this category stopped being a curiosity. Grand View Research puts the global AI in education market at $5.88 billion in 2024 and projects $32.27 billion by 2030, a 31.2% compound annual growth rate. Mordor Intelligence forecasts a 42.83% CAGR through 2030. Precedence Research models the longest horizon at $136.79 billion by 2035. The estimates differ by tens of billions; the direction does not differ at all.
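For readers who want to sanity-check growth figures like these, the compound annual growth rate formula is short enough to run by hand. The sketch below is illustrative only: the function names are made up for this example, and it assumes compounding over the full six-year span from 2024 to 2030, which the article does not state.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that compounds start_value into end_value over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# Grand View Research figures quoted above, in billions of USD.
start_2024, end_2030 = 5.88, 32.27

# Assumption: six compounding periods (2024 -> 2030).
rate_six_years = implied_cagr(start_2024, end_2030, years=6)
print(f"Implied CAGR over 2024-2030: {rate_six_years:.1%}")

# Cross-check: apply the quoted 31.2% rate to the 2024 base for six years.
value_at_quoted_rate = project(start_2024, 0.312, 6)
print(f"2030 value at 31.2% from the 2024 base: ${value_at_quoted_rate:.2f}B")
```

Under that six-year assumption the implied rate comes out slightly above the quoted 31.2%. One plausible explanation, offered here as a guess rather than a fact, is that the published rate is measured from a later base year.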

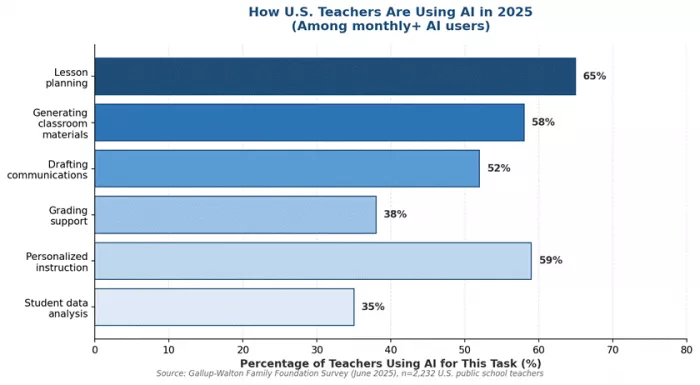

The funding has caught up to the forecast. Microsoft committed over $4 billion to AI education in July 2025 through its Elevate program. Google handed college students in five countries a free year of Google AI Pro. China mandates eight hours of AI coursework annually for primary learners. The UAE made AI compulsory from kindergarten. The 2025 Gallup-Walton Family Foundation survey of 2,232 U.S. teachers found weekly AI users save 5.9 hours per week, the equivalent of six recovered weeks per school year. That is the dividend behind the spreadsheet.
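The hours-to-weeks conversion depends on how long a school year and a work week are taken to be, and the article states neither. The sketch below shows the arithmetic under a few assumed combinations; the function name and every parameter value other than the 5.9 hours are assumptions for illustration.

```python
def weeks_recovered(hours_saved_per_week: float,
                    school_weeks: int,
                    hours_per_work_week: float) -> float:
    """Convert weekly time savings into equivalent full work weeks per school year."""
    total_hours_saved = hours_saved_per_week * school_weeks
    return total_hours_saved / hours_per_work_week


HOURS_SAVED = 5.9  # weekly savings reported in the Gallup-Walton survey

# Assumed (school weeks per year, hours per work week) pairs; not from the survey.
scenarios = [(36, 40.0), (36, 35.0), (38, 37.5)]

for school_weeks, work_week in scenarios:
    weeks = weeks_recovered(HOURS_SAVED, school_weeks, work_week)
    print(f"{school_weeks} school weeks, {work_week}-hour week: {weeks:.1f} weeks recovered")
```

Across these assumed scenarios the result lands between roughly five and six weeks, which is the neighborhood of the figure quoted above.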

Figure 1: Global AI in education market growth, 2024-2030. Source: Grand View Research.

Khanmigo: The Tutor That Refuses to Just Tell You the Answer

Khanmigo, Khan Academy's AI tutor launched in 2023, is built on GPT-4 and one stubborn principle: when a student asks for the answer, it will not give it. That refusal is the whole product. Instead, it asks where the student is stuck, what they have tried, and what the next step might be. Common Sense Media gave it four stars and ranked it ahead of ChatGPT and Bard for educational use, partly because it does not collapse into a homework-answer dispenser the moment a kid stops trying.

Mechanically, the tool sits inside Khan Academy's content library, so it knows which lesson is open and which prerequisite skills the student has already shown. For teachers, the same engine generates rubrics, exit tickets, and differentiated practice items in minutes. Pricing is the most accessible in the category: free for verified K-12 teachers, $4 a month or $44 a year for parents and learners, with up to ten child accounts on a single subscription. Districts negotiate separately. Microsoft is a partner, which is why the safety story is unusually mature for an AI product aimed at minors.

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| Refuses to give answers, which is the design, not a flaw. Students who want a cheating tool will hate it. Students who want to actually learn will not. | Curriculum lock-in is severe. If your school does not use Khan Academy, alignment cracks the moment you leave standard topics. |
| Parents see every conversation a child has with the tool. This solves the AI-cheating panic better than detection software ever did. | Humanities and arts feedback feels like a generic chatbot wearing a teacher costume. Math is the spine; everything else is hanging off it. |
| Math, writing, and coding feedback is genuinely competent because it sits on Khan Academy's curriculum spine. | The Socratic method is slower than students under deadline pressure want it to be. Several testers in independent reviews said the words 'just tell me' out loud. |
| $44 a year sits well below the $30 to $100 hourly cost of a human tutor, and Khan Academy's nonprofit status keeps the pricing structure stable. | Classroom student access requires district partnership, which means individual teachers cannot simply roll it out for their kids next Monday. |

Table 1: Khanmigo at a glance. The pros are mostly about restraint; the cons are mostly about scope.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| Eighth grader on Algebra I | Stuck on systems of equations the night before a test, parent unavailable | Walks the student through identification of the unknown, not the answer; works because the prerequisite chain is mapped |
| AP teacher with 30 students | Needs three differentiated practice sets across ability levels for tomorrow | Rubric and worksheet generators turn a 60-minute task into 15, with standards alignment baked in |
| Parent of a tween | Wants AI in the house but is uneasy about unsupervised ChatGPT use | Conversation history is fully visible; child accounts are gated under the parent account |
| District using Khan Academy | Looking to scale 1:1 tutoring without hiring | Integrates with existing curriculum without parallel content procurement |

Table 2: Khanmigo use cases. Best for institutions and families already inside Khan Academy's orbit.

Duolingo Max: The Most Profitable AI Bet in Consumer Edtech

The case for Duolingo Max is a spreadsheet. After the GPT-4 rollout, daily active users jumped 51% to over 40 million, paid subscribers grew 37% year over year to 10.9 million, and quarterly revenue rose 41% to $252.3 million in 2025. CEO Luis von Ahn told investors that generative AI now produces close to 100% of new lesson content automatically. That is what an AI bet looks like when it works.

Max sits above the Super tier and adds two features. Video Call places learners in real-time voice conversations with an AI character named Lily, who remembers prior calls and delivers transcripts and post-call feedback. Roleplay drops them into scripted scenarios such as ordering coffee in Paris or planning a vacation, then grades accuracy and complexity. The feature works most fully for English speakers learning Spanish, French, German, Italian, or Portuguese. Pricing is $29.99 a month or $168 a year individually, with a $239.99 family plan for up to six users. That is roughly double Super, and the gap has become the central debate among reviewers.

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| Lily remembers your previous calls. That continuity is the closest thing to a relationship a software tutor offers, and it materially raises engagement. | Conversations cap at two or three productive exchanges before the model runs out of steam. Good for confidence; not enough for fluency. |
| Implicit learning, the pedagogically preferred mode for language acquisition, is exactly what GPT-4 free-form conversations naturally produce. | Language pair restrictions are real. Korean-to-English speakers, for example, do not get the full feature set. The pricing does not adjust accordingly. |
| Built into the existing Duolingo gamification loop, so users who already have streaks do not have to switch apps to practice speaking. | GPT-4 errors are flagged by Duolingo itself; in language learning, a wrong correction is worse than no correction. |
| Real-world scenario library is genuinely varied: cafe orders, furniture shopping, debating vacation plans, hiking small talk. | The price doubles from Super for two features, which several long-time users called the moment Duolingo started feeling like Babbel. |

Table 3: Duolingo Max at a glance. The features work; the pricing math is the open question.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| Intermediate Spanish learner | Has finished the Duolingo tree but freezes when ordering at a restaurant abroad | Roleplay simulates the exact scenarios that cause freeze, with low social stakes |
| Frequent business traveler | Wants survival French for client dinners in three weeks | Video Call practices live conversation under time pressure without a human partner needed |
| Heritage speaker rebuilding | Adult who heard Mandarin growing up but never spoke it formally | Conversation-first approach matches how heritage speakers learn fastest, bypassing grammar drills |
| Duolingo loyalist on Super | Already pays for Super and loves the streak system | Adds speaking layer without abandoning a learning routine they have stuck with for years |

Table 4: Duolingo Max use cases. The product is a Super upgrade, not a standalone tutor.

ChatGPT Edu: The Enterprise Answer to a Problem Universities Created

Wharton's Ethan Mollick has his MBA students complete final reflections by chatting with a course-specific GPT trained on the syllabus. Arizona State runs more than two hundred AI projects across departments, including a GPT-based German tutor. Oxford, Columbia, the University of Texas at Austin, and the University of Maryland have all built similar deployments. ChatGPT Edu, launched by OpenAI in mid-2024, exists because universities wanted an enterprise version of a tool their students were already using anyway, ideally one that did not send research data into the training pipeline.

It is GPT-4o packaged with custom GPT creation, single-sign-on, audit logs, and data isolation. Faculty can build a course tutor in an afternoon without engineering help. The catch is that pricing is opaque: there is no public price page, only an enterprise contact form, which is its own form of friction. Individual users approximating the experience pay $20 a month for ChatGPT Plus and surrender the governance layer. A 2024 ScienceDirect review of the top 100 U.S. universities found most have adopted an open but cautious stance, with primary concerns parked on academic integrity, hallucinations, and FERPA exposure.

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| Custom GPTs let a professor turn a syllabus into a course tutor in a few hours, with no engineering support required. | Hallucinations in citation-heavy fields, particularly law, medicine, and history, are still the unresolved tax. Verification work falls back on the user. |
| Data isolation means student conversations and faculty research are not used to train OpenAI's foundation models, which is the single deal-breaker most procurement teams cared about. | Pricing is invisible until you book a sales call. Smaller institutions with thin procurement teams stall here. |
| Centralized seat management through institutional SSO turns AI from a thousand individual subscriptions into a single audit trail. | It does not solve the academic integrity problem; it formalizes it. Schools still have to redesign assessments around AI, not against it. |
| Faculty resistance, the number one cited blocker in AI adoption surveys, drops measurably when the school sanctions a specific tool. | Effective use depends on faculty willingness to retool courses. A 2025 study found 80% of students rate their school's AI integration below expectations. |

Table 5: ChatGPT Edu at a glance. The model is good; the institutional choreography is the hard part.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| MBA professor | Teaches strategy with case studies; wants 24/7 office hours without doubling workload | Custom GPT trained on case library answers tactical questions; professor handles the hard ones |
| Graduate research student | Synthesizing 80 papers for a dissertation lit review | Document-grounded chat surfaces themes and contradictions across the corpus, with sources to verify |
| University administration | Faculty are using consumer ChatGPT with sensitive student data, creating FERPA risk | Institutional license replaces shadow IT with a sanctioned, governed deployment |
| Department chair launching AI literacy | Needs a baseline AI tool every student in the department can use ethically | Custom GPTs configured per course set explicit ground rules students can actually see |

Table 6: ChatGPT Edu use cases. The tool is for institutions large enough to have an IT governance team.

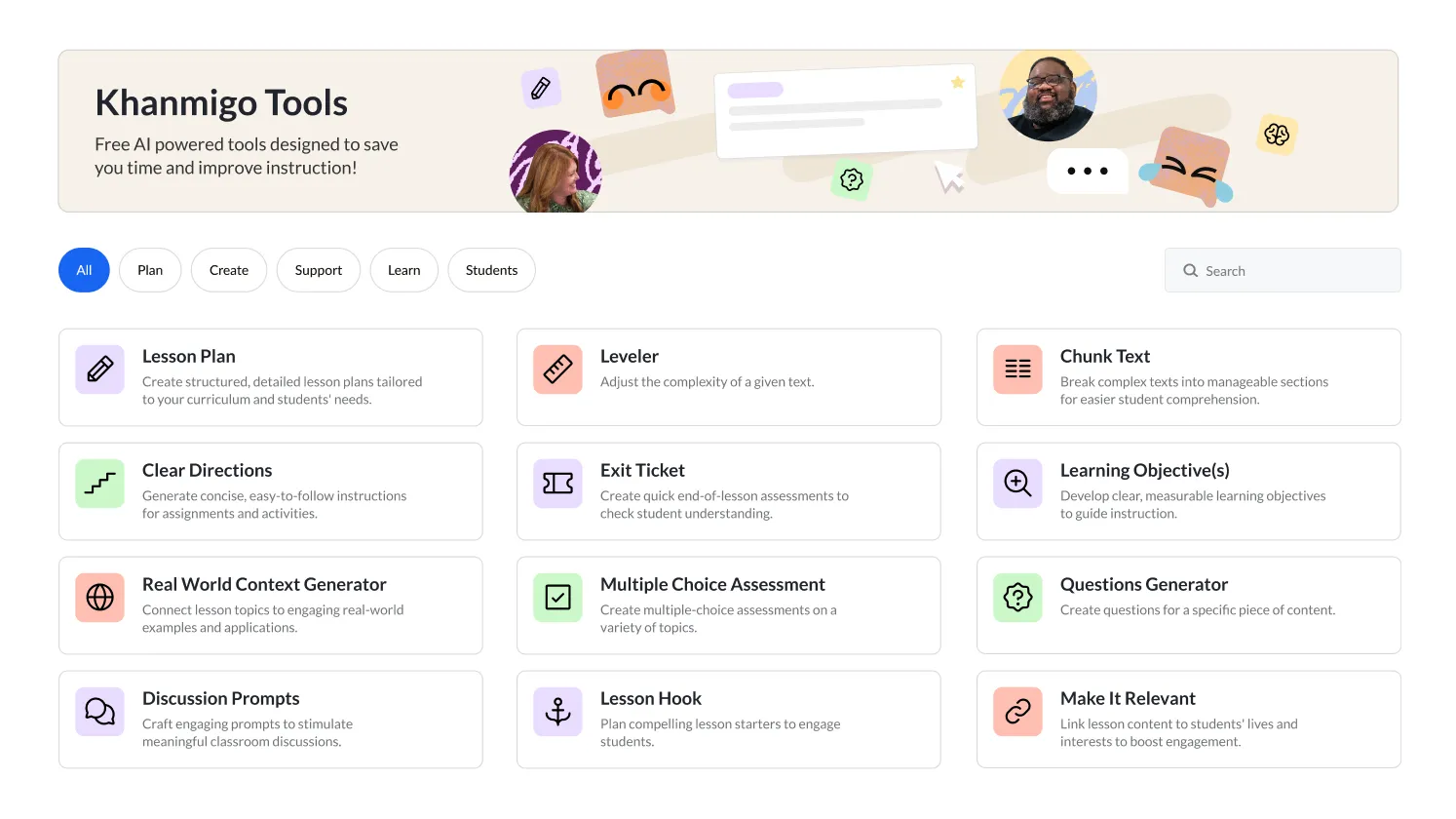

MagicSchool AI: The Productivity Layer Teachers Actually Asked For

A middle school teacher needs a quiz on the food chain by tomorrow, an IEP draft for a new student, a translated parent letter, and a rubric that aligns with state standards. In the pre-AI version of this Tuesday, she stays until seven. In the MagicSchool version, she opens a dashboard with eighty-plus task-specific tools, picks four, and is home by five. That is the pitch. The numbers behind it: more than five million educators use the platform across nearly every U.S. school district and 160 countries, with 13,000-plus school and district partnerships.

Unlike general chatbots, MagicSchool replaces open-ended prompting with guided tools: lesson plan generator, IEP writer, rubric maker, multiple-choice quiz builder, parent communication translator, presentation generator. Studio Mode lets educators edit AI outputs and export to Google Docs or Microsoft Word. Student Rooms is the classroom layer, where teachers configure which tools students can use and what learning objective scopes the AI's behavior. Pricing is structured to reduce adoption friction: a free plan with core tools, Plus at roughly $99.96 a year for individual teachers, and Enterprise contracts with SSO and district dashboards. The platform holds a 4.8 on G2 and a 93% privacy rating from Common Sense Privacy, both notably high for the category.

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| Eighty-plus prebuilt tools mean teachers without prompt-engineering skills get the output of someone who has them. The skill barrier disappears. | Eighty tools is also a discoverability problem. New users frequently use the same five and never find the other 75. |
| FERPA, COPPA, and GDPR compliance is built in, with a privacy rating that beats almost everything else in the K-12 stack. | The AI's knowledge cutoff means anything time-sensitive (current events, new science) needs verification before it reaches students. |
| Reviewer-reported time savings of 7 to 10 hours a week is consistent across G2, Capterra, and independent K-12 EdTech reviews. | It is a productivity layer for educators, not a tutor for students. Schools sometimes buy it expecting both and have to course-correct. |
| Free tier is genuinely usable, not a teaser. A teacher can run an entire planning week on it before deciding whether Plus is worth it. | Output quality varies more than the marketing implies. The IEP generator is excellent; some quiz outputs need rework before classroom use. |

Table 7: MagicSchool AI at a glance. The platform's biggest weakness is also a function of its biggest strength.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| Special education teacher | Drafting four IEPs by Friday on top of regular teaching load | IEP generator pulls from goals and accommodations to produce structured first drafts in minutes |
| First-year teacher | Lesson planning from scratch with zero accumulated archive | Lesson plan and worksheet generators provide the scaffolding a veteran teacher carries in their head |
| District technology director | Needs an AI tool teachers will actually use, not abandon after one PD session | Free entry tier removes procurement risk; usage data lets the district scale to Enterprise once value is proven |
| High school department chair | Wants AI literacy taught alongside the subject, not as a separate unit | Student Rooms lets the department control AI scope per assignment, embedding literacy into normal coursework |

Table 8: MagicSchool AI use cases.

Figure 2: How U.S. teachers using AI at least monthly distribute their AI use across tasks. Source: Gallup-Walton Family Foundation, June 2025 (n=2,232).

Coursera Coach: The Accidental Equity Tool

Here is the finding nobody expected. According to Coursera's own published research, women are 11.1% more likely than men to engage with Coach. Learners without a college degree are 10.8% more likely to use it. Career changers, the group with the steepest prerequisite gaps, are 39.8% more likely to message the Coach than learners merely advancing in their existing field. The product was designed to help adult learners stay on track. It turned out to disproportionately help the people the platform had historically underserved.

Mechanically, Coursera Coach is generative AI grounded in the platform's expert-authored course content. It runs in three modes: in-context Q&A and lecture summarization, Help Me Practice (Socratic pre-assessment sessions), and instructor-designed Socratic dialogues built directly into courses by faculty including Andrew Ng, Vic Strecher, and Barbara Oakley. Coursera reports that Coach has supported over one million learners and is associated with a 9.5% higher quiz pass rate on first attempts, and that learners using it complete 11.6% more course items per hour. The pricing is bundled: $59 a month or $399 a year through Coursera Plus, or included in select Professional Certificates. There is no standalone Coach subscription.

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| Documented equity gains are the rarest finding in EdTech research. Coach delivers them not through marketing but through measurement. | No standalone Coach access. You have to buy the broader Coursera Plus subscription, even if you only want the tutor. |
| Tightly grounded in expert course content rather than open web data, which dramatically reduces the hallucination surface. | Better at quick concept clarifications than at sustained, multi-step problem coaching of the kind a Khan-style tool delivers. |
| Help Me Practice mode is the closest a generative tool has come to recreating the part of human tutoring that actually moves outcomes: pre-assessment Socratic dialogue. | Coverage depth depends entirely on the course it is grounded in. Quality is excellent on flagship courses, thinner on long-tail content. |
| Career-changer use case is a real product-market fit, not a positioning slide. The data is on the company blog, not just in a sales deck. | Not available on the mobile app, which is where many adult learners actually study during commutes. |

Table 9: Coursera Coach at a glance. The interesting story is who uses it most, not the feature list.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| Career switcher into data science | Took a long break, intimidated by prerequisite math in Andrew Ng's course | Coach explains in plain English, points to specific lecture clips, makes asking questions feel safe |
| Working parent on a certificate | Forty minutes of study time per day, no margin for getting stuck | 11.6% faster item completion adds up to real progress under tight time budgets |
| Returning learner without a degree | Years out of formal education, unsure how to study online effectively | Coach teaches study habits alongside content, lowering the gap between motivation and outcome |
| Corporate L&D buyer | Workforce reskilling in AI literacy at thousands of seats | Coursera for Business contracts plus instructor-designed dialogues create a structured, measurable pathway |

Table 10: Coursera Coach use cases. Best for adult learners with structured goals and limited time.

Carnegie Learning's MATHia: The 1990s Veteran Still Outscoring the New Kids

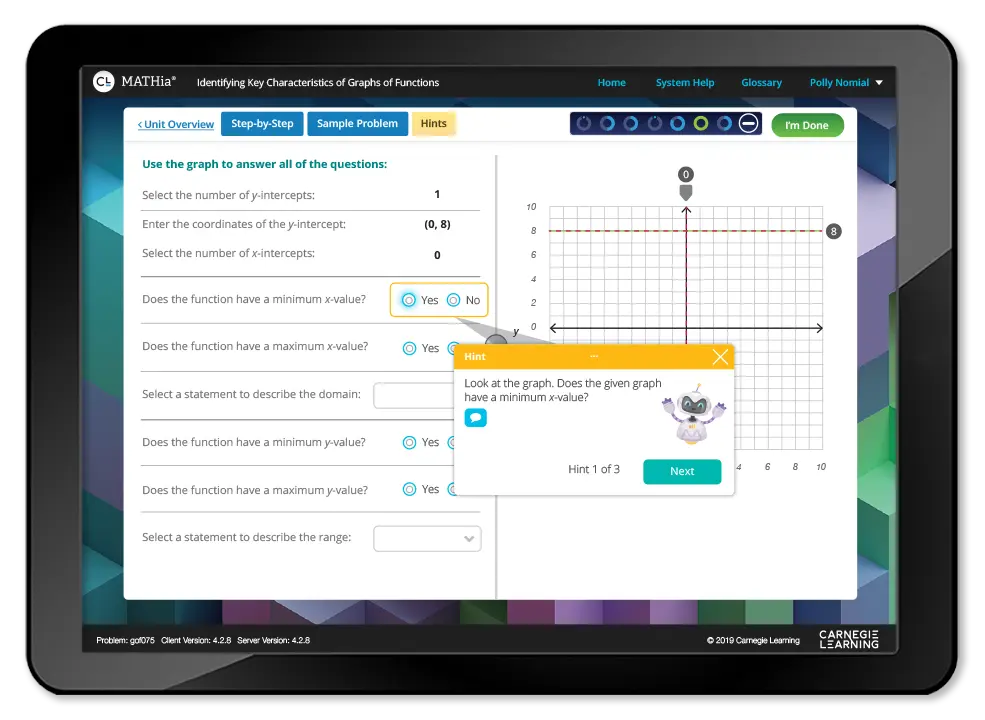

MATHia's AI is older than most of its competitors' founders. Born from cognitive science research at Carnegie Mellon University in the 1990s, it is the only AI tutoring system in the K-12 stack with a U.S. Department of Education-funded randomized controlled trial. The trial, run by RAND across 18,000-plus students at 147 middle and high schools, found that the Carnegie blended approach nearly doubled growth on standardized test performance in year two. A separate Student Achievement Partners study reported a 16-percentile-point Algebra I gain for the median student. A 2021 Saga Education study comparing five adaptive math platforms ranked MATHia first overall, and first by a wide margin on the question of whether students felt the platform was made for them.

The technical approach is the reason. MATHia models knowledge at the skill level, not the problem level. As students work through multi-step problems, the system continually estimates mastery on each underlying skill and adjusts difficulty accordingly. When errors happen, it identifies which specific skill misfired and delivers a contextual hint rather than restating the question. LiveLab, the teacher dashboard, surfaces real-time alerts when students are idle or struggling, and won the EdTech Breakthrough Award for Best Use of AI in Education in 2019. Pricing is school-only, bundled inside the Carnegie Learning Math Solution. There is no consumer access; if your district has not adopted it, neither have you.
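The article does not say which model MATHia uses for its skill-level estimates. One well-known technique for this kind of per-skill mastery tracking is Bayesian Knowledge Tracing, and the sketch below uses it purely as an illustration. The skill names, parameter values, and the 0.95 mastery threshold are all hypothetical, not Carnegie Learning's.

```python
from dataclasses import dataclass


@dataclass
class SkillParams:
    """Hypothetical per-skill parameters; values are illustrative only."""
    p_init: float = 0.2    # prior probability the skill is already mastered
    p_learn: float = 0.15  # chance of acquiring the skill at each practice step
    p_slip: float = 0.1    # chance of an error despite mastery
    p_guess: float = 0.2   # chance of a correct step without mastery


def update_mastery(p_mastery: float, correct: bool, params: SkillParams) -> float:
    """One Bayesian Knowledge Tracing step for a single skill."""
    if correct:
        evidence = p_mastery * (1 - params.p_slip)
        total = evidence + (1 - p_mastery) * params.p_guess
    else:
        evidence = p_mastery * params.p_slip
        total = evidence + (1 - p_mastery) * (1 - params.p_guess)
    posterior = evidence / total  # belief after seeing this step
    # Account for learning that may happen during the step itself.
    return posterior + (1 - posterior) * params.p_learn


def trace(steps, params_by_skill):
    """steps: iterable of (skill_name, correct). Returns the final estimate per skill."""
    mastery = {skill: p.p_init for skill, p in params_by_skill.items()}
    for skill, correct in steps:
        mastery[skill] = update_mastery(mastery[skill], correct, params_by_skill[skill])
    return mastery


MASTERY_THRESHOLD = 0.95  # assumed cut-off for "move on to harder work"

params = {"combine_like_terms": SkillParams(), "isolate_variable": SkillParams()}
# Each step of a multi-step problem is tagged with the skill it exercises.
steps = [
    ("combine_like_terms", True),
    ("isolate_variable", False),
    ("combine_like_terms", True),
    ("isolate_variable", True),
    ("combine_like_terms", True),
]
estimates = trace(steps, params)
for skill, p in estimates.items():
    status = "mastered" if p >= MASTERY_THRESHOLD else "needs practice"
    print(f"{skill}: {p:.2f} ({status})")
```

The point of the design is that an error only lowers the estimate for the skill tagged on that step, which is what lets a system target its hint and its next problem at the specific skill that misfired rather than at the problem as a whole.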

| Where It Genuinely Wins | Where It Quietly Disappoints |
|---|---|
| The only AI tutor in this article with a Department of Education-funded RCT showing roughly 2x test-score gains in year two. | The interface looks like 2015 because much of it is. Students raised on TikTok find the UX dated within fifteen minutes. |
| Skill-level knowledge tracing, not problem-level adaptation. The difference is the difference between a tutor and a worksheet generator. | No consumer access at all. Your school adopts it or you do not use it. Period. |
| LiveLab dashboard tells teachers in real time which students are idle or stuck, which solves the actual problem of 30 students working at 30 different paces. | Curriculum lock-in to the Carnegie Math Solution. Districts that want MATHia generally end up adopting more of Carnegie's stack. |
| Predates the GPT era, which means hallucination risk is structurally near zero. The math is right. | The structured pacing that drives mastery feels rigid to high-achieving students who want to skip ahead. |

Table 11: MATHia at a glance. The strongest evidence base in the category, attached to the most institutional product.

| User Profile | Real Scenario | Why This Tool Fits |
|---|---|---|
| District math coordinator | Algebra I scores have flatlined for three years; needs measurable change | RCT-backed outcomes are the strongest case for budget defense; LiveLab tracks teacher implementation fidelity |
| Middle school math teacher | Five different ability levels in one Algebra I classroom | Skill-level adaptation actually means five different students see five different sequences in real time |
| Special education co-teacher | Students with reading disabilities struggling with word problems | Active IES-funded research with Carnegie Learning is specifically improving reading scaffolding inside MATHia word problems |
| High school principal | Needs a math intervention with evidence, not vibes, for the school board | RAND RCT is what you bring to a board meeting when the budget question gets serious |

Table 12: MATHia use cases. Best when math outcomes are the budget priority and adoption is district-wide.

Putting Them Side by Side

The six tools barely overlap once you get past the AI label. Khanmigo and MATHia compete in K-12, but Khanmigo costs $44 a year and MATHia costs whatever your district negotiates. Duolingo Max and Coursera Coach both serve adult learners, but one teaches Spanish and the other teaches data science. ChatGPT Edu and MagicSchool AI both target institutions, but one is for graduate research workflows and the other is for fourth-grade IEPs. The honest comparison, then, is on price and reception.

Pricing

| Tool | Free Tier | Individual | Educator | Institution |
|---|---|---|---|---|
| Khanmigo | Khan Academy core remains free | $4 / month or $44 / year | Free for verified K-12 teachers | District partnership pricing |
| Duolingo Max | Free Duolingo (with ads) | $29.99 / month or $168 / year | Not built for educators | Family: $239.99 / year for 6 |
| ChatGPT Edu | ChatGPT Free for general use | ChatGPT Plus: $20 / month | Bundled in institutional license | Custom enterprise contracts |
| MagicSchool AI | Free plan with core tools | Plus: ~$99.96 / year | Free or Plus for individual use | Enterprise: custom with SSO |
| Coursera Coach | Available in select free trials | Coursera Plus: $59 / mo or $399 / yr | Included for instructors | Coursera for Business / Campus |
| MATHia | None (institution-licensed) | Accessed via enrolled school | Bundled with Carnegie curriculum | District contracts |

Table 13: Pricing across the six platforms (USD; published 2025-2026 rates).
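Monthly and annual prices are easier to compare once they are put on the same footing. The sketch below annualizes the three plans in Table 13 that publish both a monthly and an annual price, and splits the Duolingo Max family plan per seat. The function and variable names are made up for this example, and it assumes a subscriber who would otherwise pay monthly for a full twelve months.

```python
MONTHS_PER_YEAR = 12

# (monthly price, annual price) in USD, taken from Table 13.
plans = {
    "Khanmigo": (4.00, 44.00),
    "Duolingo Max": (29.99, 168.00),
    "Coursera Plus": (59.00, 399.00),
}


def annual_savings(monthly_price: float, annual_price: float) -> tuple[float, float]:
    """Return (dollars saved, fraction saved) when paying annually instead of monthly."""
    paid_monthly = monthly_price * MONTHS_PER_YEAR
    saved = paid_monthly - annual_price
    return saved, saved / paid_monthly


for name, (monthly, annual) in plans.items():
    saved, fraction = annual_savings(monthly, annual)
    print(f"{name}: ${monthly * MONTHS_PER_YEAR:.2f} paid monthly vs "
          f"${annual:.2f} annually (saves ${saved:.2f}, {fraction:.0%})")

# Duolingo Max family plan, assuming all six seats are used.
FAMILY_PRICE, FAMILY_SEATS = 239.99, 6
print(f"Duolingo Max family plan: ${FAMILY_PRICE / FAMILY_SEATS:.2f} per seat per year")
```

The per-seat figure only holds when every seat is filled; with fewer users the effective price per person rises accordingly.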

Reviews and Independent Validation

| Tool | G2 | Capterra | Other Independent | What Reviewers Actually Say |
|---|---|---|---|---|
| Khanmigo | 4.5 / 5 | Not listed | Common Sense Media: 4 / 5 | Loved for safety. Hated by students who wanted the answer in three seconds. |
| Duolingo Max | 4.5 / 5 | 4.7 / 5 | App Store: 4.7 / 5 | Praised for novelty, criticized for short conversations and the steep price jump from Super. |
| ChatGPT Edu | 4.7 / 5 | 4.5 / 5 | Adopted by Wharton, ASU, Oxford | Strong on flexibility. Citation hallucinations remain the unsolved tax. |
| MagicSchool AI | 4.8 / 5 | 4.7 / 5 | Common Sense Privacy: 93% | Teachers report 7 to 10 hours saved weekly. Choice paralysis with 80+ tools is real. |
| Coursera Coach | 4.5 / 5 | 4.5 / 5 | Coursera: 9.5% higher quiz pass rate | Solid for getting unstuck. Weaker for sustained, multi-step problem coaching. |
| MATHia | 4.0 / 5 | 4.2 / 5 | RAND RCT (18,000+ students) | The science is current; the interface is not. Strong outcomes, dated UX. |

Table 14: Ratings from G2, Capterra, App Store, Common Sense Media, and peer-reviewed studies. Where a tool is not listed individually, the parent product's score is shown.

What the Sector Is Quietly Avoiding

The same surveys that document productivity gains also identify problems the industry has not solved. Sixty-eight percent of teachers received zero AI training during the 2024-25 school year. Only 19% work in schools with a written AI policy. Plagiarism is the top concern for 72% of educators, and 93% argue regulation is needed. In higher education, 68% of CIOs name data privacy as their primary blocker, per EDUCAUSE. The Brookings Institution found that 42% of public schools cite budget constraints as the main reason for delayed adoption, with full institutional deployment running $150,000 to $250,000 once licenses, infrastructure, and integration are accounted for. None of these are technical problems. They are policy and procurement problems, which are harder.

| What the Sector Got Right | What the Sector Got Wrong (So Far) |
|---|---|
| Personalization at scale: adaptive systems hit skill-level mastery in real time, with documented gains in math (DreamBox, MATHia) and language (Duolingo) | Hallucinations are still treated as a bug to apologize for rather than a permanent feature to design around |
| Time recovery for teachers: 5.9 hours per week for weekly AI users (Gallup-Walton 2025), enough to fund actual planning instead of survival prep | Procurement is faster than policy: 68% of teachers got zero AI training during 2024-25, only 19% work in schools with a written AI policy |
| Equity gains where measured: women, learners without degrees, and career-switchers use AI tutors more, not less | $150K to $250K typical institutional deployment cost (Brookings) means rural and lower-income districts watch the gap widen in real time |
| Accessibility wins: AI is the first widely deployed technology to actually help students with disabilities at scale, not in pilot programs | Academic integrity is being rewritten by students faster than by institutions; 72% of educators name plagiarism as their top concern |

Table 15: Sector-wide assessment. Drawn from Gallup-Walton, Brookings, EDUCAUSE, and the major market research houses.

What to Actually Do With This

Tool selection should follow user fit, not feature lists. Khanmigo suits K-12 families that want Socratic guardrails, but it does not suit a graduate student who needs a research assistant. In the same way, teacher-focused tools such as Timtis, which streamlines lesson planning and everyday classroom workflows, are often more effective for educators than broad, unfocused chatbots.

The most effective deployments pair AI with human review. MagicSchool works best when teachers verify output before sharing it, and MATHia works best when LiveLab data drives real interventions. AI without oversight is just faster mediocrity.

The last piece is governance. Institutions with clear AI policies, training, and vetted tools that respect privacy gain the productivity benefits. Those without them mainly accumulate risk while students and staff use AI anyway, with little supervision or audit trail. The technology itself is no longer the differentiator; the real difference is how it is deployed, and whether focused tools and clear policies guide that deployment.
