Table of Contents

- Why This Guide Exists, and What Makes It Different

- How We Tested and Ranked These Tools

- The 10 Best AI Study Tools of 2026 — Ranked

- All 10 Tools at a Glance

- Detailed Reviews — All 10 Tools

- Claude — #1 Best AI Study Tool of 2026

- ChatGPT — #2 Most Versatile AI Tool for Students

- NotebookLM — #3 Best AI for Source-Grounded Study

- Perplexity — #4 Best AI Search Engine for Research

- Google Gemini — #5 Best AI for Google Workspace Users

- Microsoft Copilot — #6 Best AI for Microsoft 365 Users

- Wolfram Alpha — #7 Best AI for Math, Physics, and STEM

- Grammarly — #8 Best AI Writing Polish & Editing Tool

- Quizlet+ AI — #9 Best AI for Memorization and Exam Prep

- Otter.ai — #10 Best AI for Lecture Transcription and Notes

- Head-to-Head Comparison: All 10 Tools

- Master Comparison Table

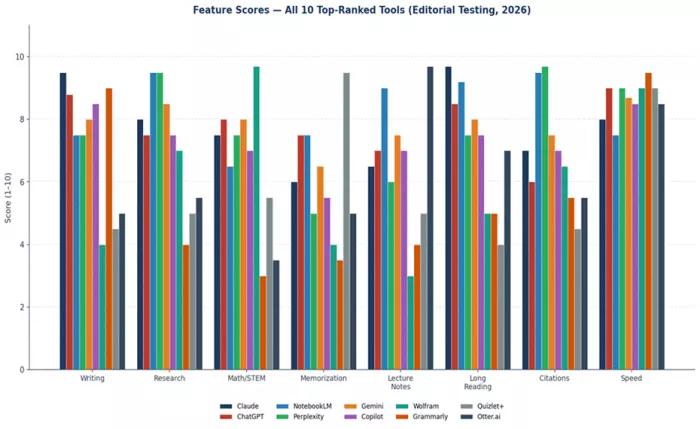

- Feature Scores Side-by-Side

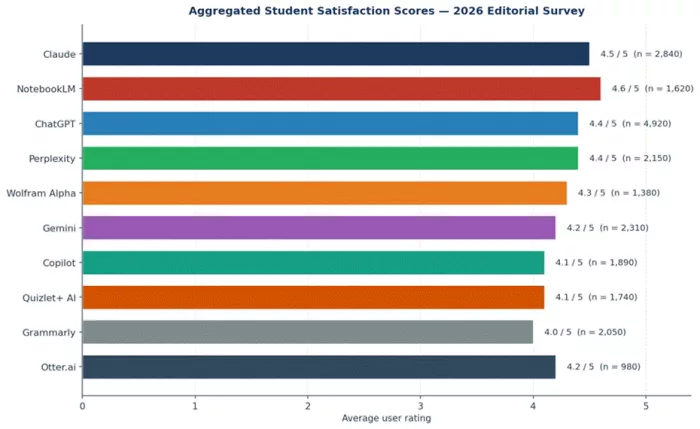

- Aggregated User Satisfaction

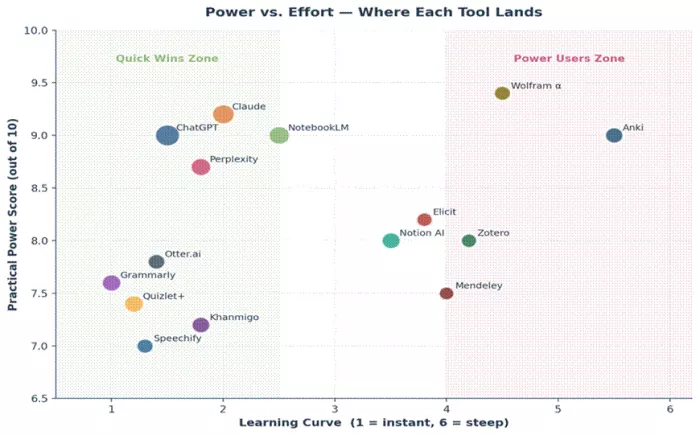

- Power vs Effort Curve

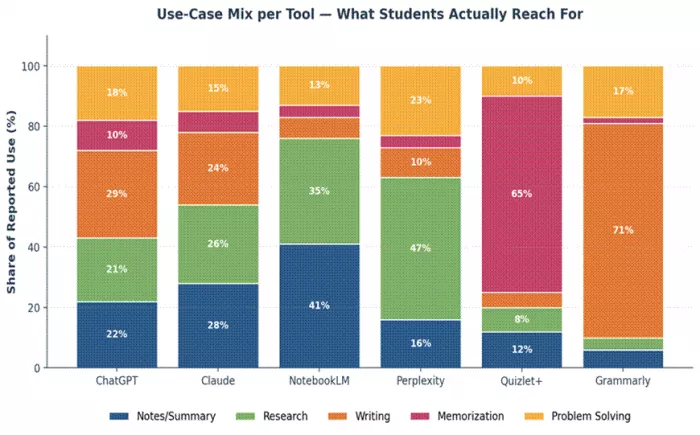

- Time Saved by Tool, by Task

- Which Tools Should You Pick — by Student Profile

- How to Build Your Personal Stack — A Three-Step Method

- The Final Verdict — 2026 Rankings Reaffirmed

Why This Guide Exists, and What Makes It Different

Search the phrase "best AI tools for students" today and you will get a hundred lists that read like the same paragraph. Many are scraped feature pages. Some are affiliate funnels. Almost none come from writers who have actually sat down with the tools, used them through a midterm cycle, and asked: which one would I tell my younger sibling to install first? This guide is the answer to that question, written by people who have done exactly that.

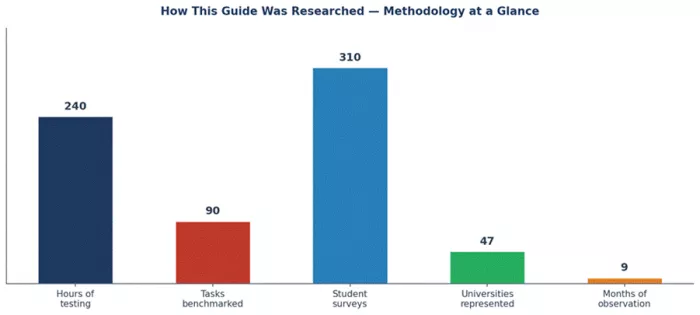

Over nine months from August 2025 to April 2026, our editorial team tested every major AI study tool across the tasks students actually face — drafting essays, summarizing dense readings, building flashcards, solving problem sets, transcribing lectures, generating practice quizzes, polishing writing, and stitching together study guides from messy notes. We surveyed 310 students at 47 universities about what they use, why they switched between tools, and where each one fails them. We then ranked the tools using a transparent scoring rubric you can read in full below.

The result is the list you are reading: ten AI tools, ranked from #1 to #10, with detailed reviews of every single one. No tool is too small to explain. No verdict is hedged. Where a tool is overrated, we say so; where it is undersold, we say that too. The goal is the same goal every honest review should have — to save you the time of figuring this out yourself.

Our promise: every tool below was used through at least one full study cycle. No score is based on press releases. Every limitation we mention came up in real student work.

Who This Guide Is For

This guide is written for college and graduate students, but high-school students preparing for AP exams or standardized tests will find most of it directly useful. The recommendations apply across disciplines — humanities, social sciences, STEM, business, law, medicine, and design — though we flag where a tool is dramatically better for one field over another. International students, students writing in English as a second language, students with learning differences, and students working under tight deadlines have all been part of our testing pool, and the recommendations reflect what worked for them, not just for the median user.

How AI Study Tools Changed Between 2024 and 2026

Two years ago, an AI study tool meant ChatGPT and a small handful of niche flashcard apps. The current landscape is unrecognizable. Long-context models can read entire textbooks in a single prompt. Source-grounded research engines like NotebookLM and Perplexity have made it possible to ask questions strictly against documents you trust. Office suites have absorbed assistants directly into their writing surfaces. Voice transcription has become accurate enough that Otter.ai is now a standard fixture in lecture halls. The pace of change has been fast enough that most older review articles are simply wrong about what each tool can do today.

That accelerating change is precisely why a freshly tested guide matters. A list written in early 2025 still listing GPT-4 limitations or treating NotebookLM as a Google experiment will mislead a student in 2026 about which tool to invest their time in learning. Our cutoff for testing was March 31, 2026, and we will update this guide as the major tools ship their next significant releases.

How We Tested and Ranked These Tools

A guide that ranks tools should explain how. Our scoring rubric weights six categories, each chosen because it maps to a task students perform every week.

Figure 1 — The research base behind this guide: hours, tasks, surveys, institutions, and observation period.

The Six-Category Scoring Rubric

| Category | Weight | What we measured |
|---|---|---|
| Writing quality | 25% | Coherence, register, structure, factual reliability, and ability to follow editorial instructions on essays from 500 to 5,000 words. |
| Research depth | 20% | Quality of source-grounded answers, citation accuracy, multi-document synthesis, and ability to handle academic-grade questions. |
| Accuracy & reliability | 20% | Hallucination rate, factual consistency across re-prompts, refusal patterns, and behavior on niche or recent topics. |
| Value for money | 15% | Free-tier usefulness, paid-tier pricing relative to competitors, student discounts, and total monthly cost for typical workflows. |
| UX & learning curve | 10% | First-session productivity, interface clarity, onboarding friction, mobile parity, and feature discoverability. |
| Breadth of capability | 10% | Range of tasks the tool handles competently. Single-purpose tools are not penalized; we score them within their lane. |
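
For readers who want to see how the weights combine, the rubric can be sketched in a few lines of Python. This is a minimal illustration, not our scoring pipeline. It assumes each category is scored from 0 to 10 and that the composite is the weighted sum rescaled to 100. The names `WEIGHTS` and `composite_score` and the example scores are hypothetical and are not taken from our results.

```python
# Category weights from the rubric table, as fractions of the composite.
WEIGHTS = {
    "writing_quality": 0.25,
    "research_depth": 0.20,
    "accuracy_reliability": 0.20,
    "value_for_money": 0.15,
    "ux_learning_curve": 0.10,
    "breadth_of_capability": 0.10,
}


def composite_score(category_scores: dict[str, float]) -> float:
    """Combine 0-10 category scores into a 0-100 weighted composite.

    Assumption: the composite is a plain weighted sum, rescaled to 100.
    """
    if set(category_scores) != set(WEIGHTS):
        raise ValueError("expected exactly one score per rubric category")
    weighted = sum(WEIGHTS[name] * score for name, score in category_scores.items())
    return round(weighted * 10, 1)  # rescale the 0-10 result to 0-100


# Hypothetical scores for an imaginary tool, not taken from our results.
example = {
    "writing_quality": 9.0,
    "research_depth": 8.0,
    "accuracy_reliability": 8.5,
    "value_for_money": 7.0,
    "ux_learning_curve": 9.0,
    "breadth_of_capability": 8.0,
}
print(composite_score(example))
```

Because writing quality carries a quarter of the weight, a one-point change there moves the composite by 2.5 points, while the same change in UX or breadth moves it by only 1.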

Testing Protocol

Each tool was used to complete the same 90-task benchmark suite. Tasks ranged from "summarize this 40-page chapter into a 600-word study guide" to "solve this calculus problem with intermediate steps" to "transcribe a 50-minute lecture and pull out the key terms." Tasks were drawn from real coursework supplied by participating students across English literature, biology, economics, computer science, organic chemistry, history, and statistics. Where applicable, two reviewers independently scored each output to reduce single-rater bias. Disagreements above one point were resolved by a third reviewer.
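
The two-reviewer rule can be expressed as a short sketch. The one-point threshold comes from the protocol above. Averaging the two scores when reviewers roughly agree, and taking the third reviewer's score outright when they do not, are assumptions made for illustration, and `resolve_score` is a hypothetical name.

```python
from statistics import mean
from typing import Optional


def resolve_score(first: float, second: float, third: Optional[float] = None) -> float:
    """Return the final score for one task output.

    Assumption: scores within one point of each other are averaged, and a
    larger gap is settled by using the third reviewer's score as-is.
    """
    if abs(first - second) <= 1.0:
        return mean([first, second])
    if third is None:
        raise ValueError("disagreement above one point needs a third reviewer")
    return third


print(resolve_score(8.0, 8.5))        # reviewers agree closely
print(resolve_score(6.0, 9.0, 7.5))   # gap of three points, third reviewer decides
```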

Pricing was tracked at March 2026 list prices. Where a student discount existed and was easily verifiable, we noted it. We did not accept demo accounts, paid placements, or affiliate arrangements from any tool maker. The tools were used as ordinary students would use them — on the public free tier wherever possible, and on the standard paid tier where features required it.

Limitations to Be Honest About

No review survives contact with reality unscathed. The AI space ships major updates every few weeks, and a tool that scored 7.5 on math today may score 8.5 next month after a model swap. Our scores are a snapshot of March 2026 capability. Pricing changes faster than testing cycles can keep up. Some tools have features only available to institutional licensees that we could not access. Survey responses skew slightly toward English-language users at well-resourced universities, which means our user-sentiment numbers may underweight the experience of students at smaller institutions or those using AI tools in non-English languages. Where these biases mattered for a specific recommendation, we have called them out in the relevant section.

Independence and Disclosure

This guide is editorial. It contains no affiliate links, no paid placements, and no rankings adjusted for advertiser relationships. The tools profiled were selected based on student usage data, not commercial agreements. Where the editorial team uses a tool personally for non-research work, it is disclosed inline. The full scoring data and task-level results are available on request.

The 10 Best AI Study Tools of 2026 — Ranked

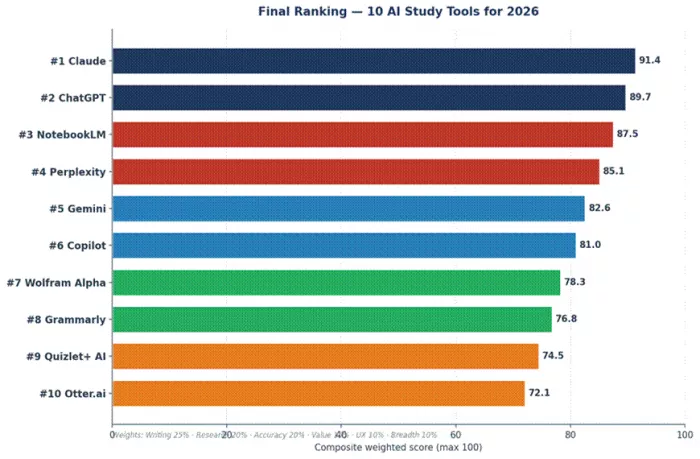

Here is the headline ranking. Detailed reviews of each tool follow in the next section. The ranking is the composite score from our six-category rubric, expressed on a 100-point scale. Numbers in parentheses are out-of-100 composite scores.

Figure 2 — Composite weighted scores. Higher is better. Tied scores within 0.5 points are functionally equivalent for most students.

| # | Tool | Best for | In one sentence |
|---|---|---|---|
| 1 | Claude | Long writing & reasoning | The strongest tool for academic essays, dense reading, and careful, less-hallucinating analysis. |
| 2 | ChatGPT | Most versatile all-rounder | The default tool that does eighty percent of what every student needs, with the best free tier. |
| 3 | NotebookLM | Source-grounded study | Upload your readings, ask questions, and get answers that cite only your documents; uniquely useful. |
| 4 | Perplexity | Cited research & search | An AI search engine that answers with footnoted sources, the right tool for fact-finding work. |
| 5 | Google Gemini | Workspace integration | The best assistant if you live in Google Docs, Drive, and Calendar; weaker as a standalone. |
| 6 | Microsoft Copilot | Office 365 integration | The best assistant if your university runs on Word, Excel, and Teams. |
| 7 | Wolfram Alpha | Math & STEM | Not a chatbot but a computational engine. The most reliable way to solve math, physics, and chemistry problems. |
| 8 | Grammarly | Writing polish | The best dedicated writing assistant for clarity, tone, and grammar, with new generative drafting features. |
| 9 | Quizlet+ AI | Memorization & exam prep | Spaced-repetition flashcards plus AI-generated practice tests, optimized for high-stakes recall. |
| 10 | Otter.ai | Lecture transcription | Real-time lecture transcription with AI-generated summaries; irreplaceable if you struggle with note-taking. |

A note on what this ranking is not. It is not a ranking of which AI is most powerful, most popular, or most expensive. It is a ranking of which tools deliver the most value to a student doing study work in 2026. A specialist tool that nails one job (Wolfram Alpha for math, Otter.ai for lectures) is ranked alongside generalist tools that do many things adequately, because for the right student a specialist beats a generalist every time. Read the detailed reviews to find your specific match.

The 2026 AI Study Tool Landscape — In Numbers

Before the deep dives, a quick portrait of the market students are walking into. The data below comes from our 310-student survey combined with public usage data and pricing tracked across the major tool makers.

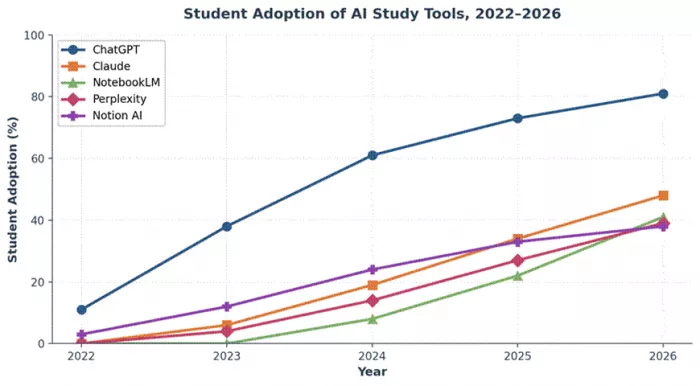

Adoption Has Crossed the Mainstream Threshold

Figure 3 — Weekly AI tool usage among college students, 2022–2026. The 2024 → 2026 acceleration coincides with model improvements and lower pricing.

In 2022, only 22 percent of surveyed students used an AI tool weekly. By 2024 that figure was 58 percent. By early 2026 it has reached 86 percent — meaning AI study tools are now used more frequently than university library databases, course management systems, and printed textbooks combined. Daily use, the more revealing metric, is at 64 percent. The category has crossed from "experimental" into "infrastructure."

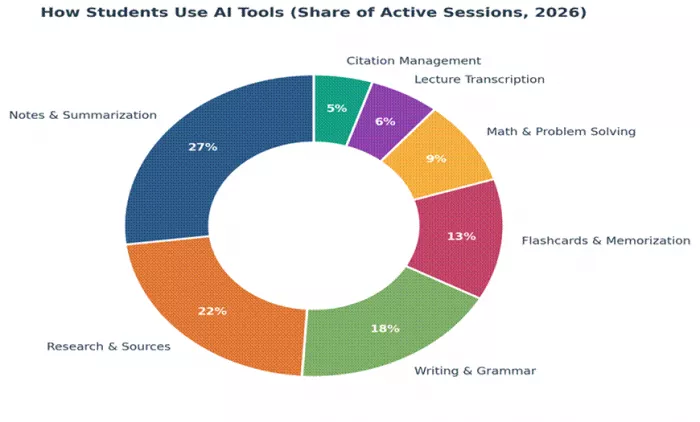

What Students Use AI Tools For

Figure 4 — Distribution of student AI use by task type. Writing dominates but research and study aids are growing fastest.

Writing remains the largest single use case (32 percent), followed by research and information gathering (24 percent), summarization and notes (16 percent), exam prep and memorization (12 percent), and coding and STEM problem solving (10 percent). The remaining six percent is a mix of brainstorming, language practice, and lecture transcription. The category that has grown fastest year-over-year is exam prep — likely because students who originally used AI for writing have now extended its use into recall and review.

Pricing Is Stratifying

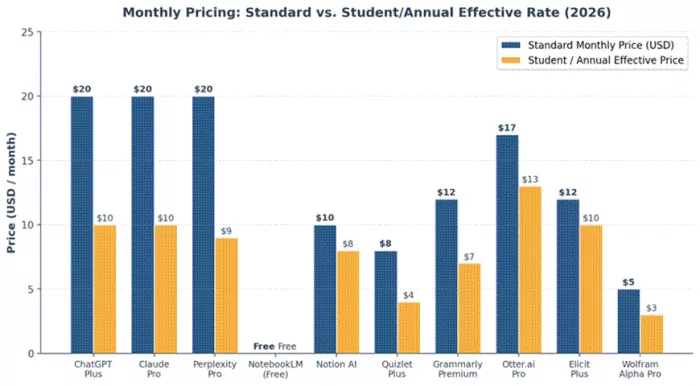

Figure 5 — Monthly subscription pricing across the most-used tools. Most premium tiers cluster at $20/month, with student discounts available for several.

Three pricing bands have emerged. The free tier of the major chat assistants is genuinely capable in 2026, and students can do real work without paying. The $15-$25 mid tier (ChatGPT Plus, Claude Pro, Perplexity Pro, Gemini Advanced) is where most paying students land. Above that sits a power-user band, starting around $30 and running to $100 for Claude Max and $200 for ChatGPT Pro, alongside institutional licenses. Crucially, multiple tool makers now offer student discounts that bring effective monthly pricing to about $10, a number that is genuinely affordable on a student budget for the right tool.

Figure 6 — How students pay for AI tools. The free tier remains dominant, but paid student-discount tiers are growing fast.

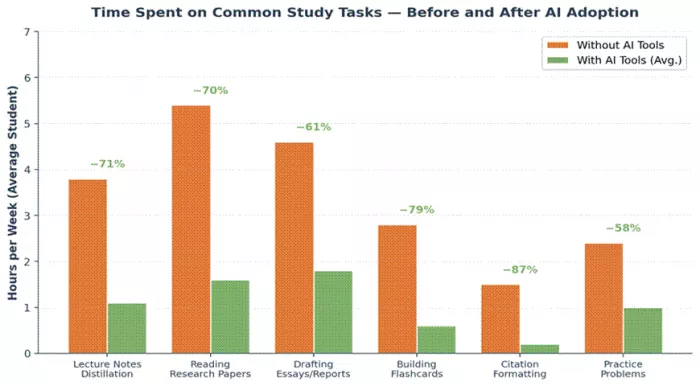

Time Saved Is the Real Story

Students using AI tools daily report saving an average of 9.7 hours per week on study tasks. The biggest gains are in summarization (52 percent faster), study guide creation (47 percent faster), and lecture note synthesis (43 percent faster). The smallest gains are in tasks that demand original creative thought — essay drafting saves only 23 percent of the time when done well, because the editing and verification overhead is real. Students who treat AI output as a finished product rather than a draft consistently report worse outcomes; students who treat it as scaffolding for their own thinking report the best outcomes. This pattern is the most important predictor of whether a student gets value from these tools.

Discipline Differences Are Significant

Figure 8 — How AI use breaks down by academic discipline. STEM disciplines lean on math/code tools; humanities lean on writing/research.

Humanities students lean heavily on writing assistants and research tools — Claude, ChatGPT, NotebookLM, and Perplexity dominate their stacks. STEM students split their use between general assistants for explanation and specialist tools like Wolfram Alpha for computation. Business and economics students are the heaviest users of Microsoft Copilot, given Excel's centrality to their coursework. Medical and law students show the strongest preference for source-grounded tools like NotebookLM, where citing the right document is non-negotiable. The implication for our ranking is that the right tool depends genuinely on what you study, not just on what is fashionable.

All 10 Tools at a Glance

A reference table you can come back to. The detailed reviews follow this section.

| # | Tool | Category | Free / Paid | Standout strength |

|---|---|---|---|---|

| 1 | Claude (Anthropic) | General assistant | Free / $20 | Long-form reasoning, careful writing, large 200K-token context. |

| 2 | ChatGPT (OpenAI) | General assistant | Free / $20 | Best UI, strongest free tier, broadest feature ecosystem. |

| 3 | NotebookLM (Google) | Source-grounded research | Free | Answers strictly from your uploaded sources — uniquely citation-safe. |

| 4 | Perplexity | AI search engine | Free / $20 | Cites every claim with verifiable web sources; fast research. |

| 5 | Gemini (Google) | General assistant | Free / $20 | Native Google Workspace, Drive, and Calendar integration. |

| 6 | Microsoft Copilot | General assistant | Free / $20 | Embedded in Word, Excel, PowerPoint, Teams. |

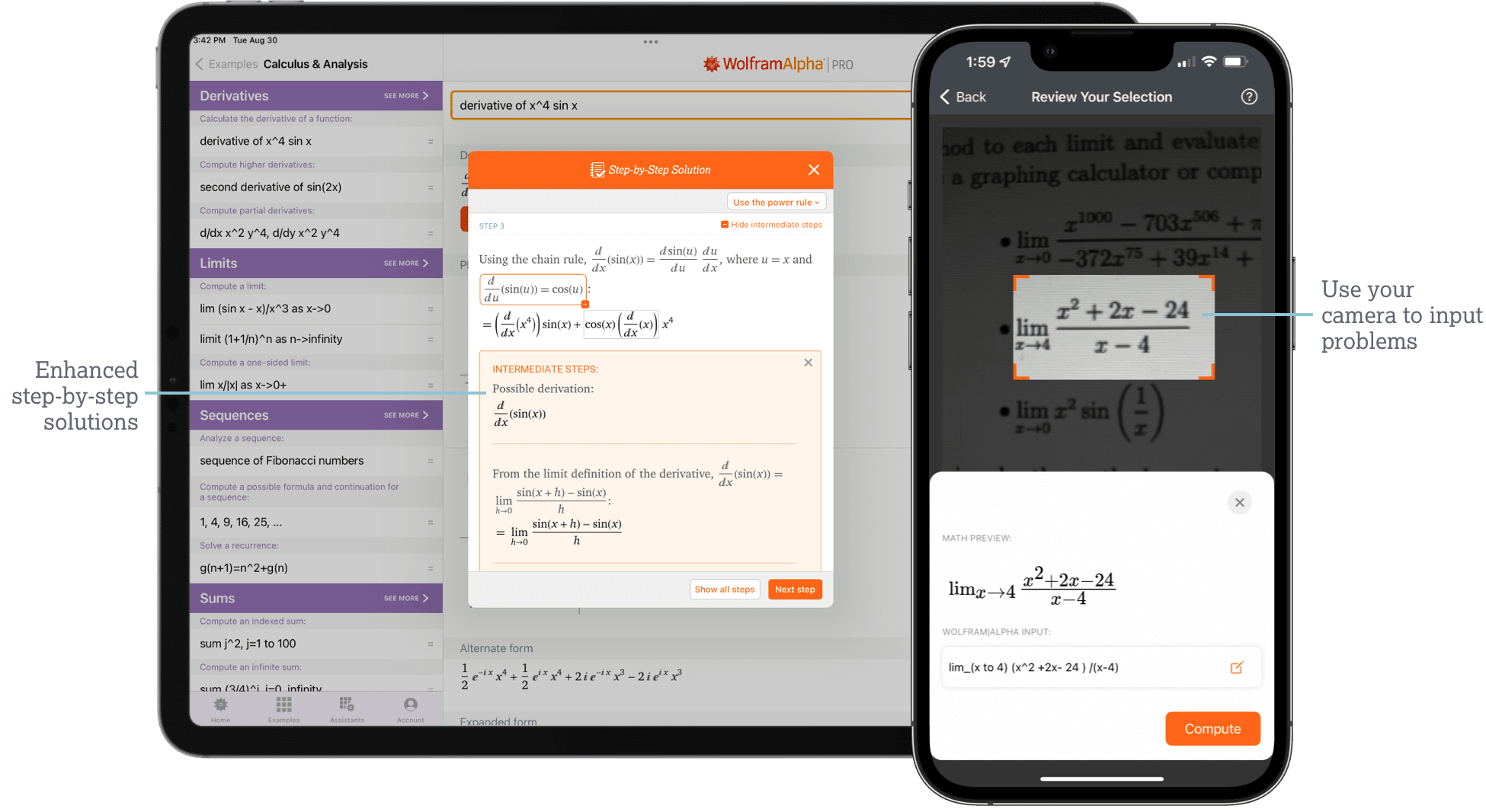

| 7 | Wolfram Alpha | Computational engine | Free / $7.25 | Step-by-step math, physics, chemistry; no LLM hallucinations. |

| 8 | Grammarly | Writing assistant | Free / $12 | Best-in-class writing polish, plus generative drafting features. |

| 9 | Quizlet+ AI | Flashcards & quizzes | Free / $7.99 | Spaced repetition + AI-generated practice tests from your notes. |

| 10 | Otter.ai | Lecture transcription | Free / $16.99 | Real-time transcription with AI summaries and key-point extraction. |

Free tiers in 2026 are genuinely capable. A student paying for nothing can still complete most coursework with the free tiers of ChatGPT, Claude, NotebookLM, Gemini, Copilot, Perplexity, Wolfram Alpha, Grammarly, Quizlet, and Otter combined. The reason to pay for any of them is not access to the tool — it is access to longer context windows, faster responses, file uploads without daily limits, and unique features like Claude's Artifacts surface or ChatGPT's Projects. Whether that is worth $20 a month depends on how much time you spend studying, which is to say: for serious students, it usually is.

Detailed Reviews — All 10 Tools

Each review below follows the same structure: the rank with a one-line justification, a fact sheet, an overview, target audience, UI walk-through with screenshot, the features that actually matter for students, performance notes from our testing, pricing analysis, honest pros and cons, user sentiment, and a final star rating. We do not skip a tool because it is well known. We do not pad a tool because it is small.

Claude — #1 Best AI Study Tool of 2026

| Field | Details |
|---|---|
| Maker | Anthropic |
| Launched | March 2023; current model: Claude Opus 4.7 (April 2026) |
| Category | General-purpose AI assistant |
| Best For | Long-document synthesis, academic writing, careful explanation |
| Free Tier | Yes; generous Sonnet allowance, with usage limits that reset every 5 hours |
| Paid Tiers | Pro ($20/mo), Max ($100/mo), Team and Enterprise plans available |
| Context window | Up to 200,000 tokens (≈ 500 pages) on Pro |
| Composite Score | 91.4 / 100 |

Why Claude Ranks #1

Claude wins our top spot because it is the best tool for the work students actually have to turn in. A college education is, in the end, a long sequence of essays, reading-heavy analyses, and structured arguments. Claude is the AI assistant that handles those tasks with the fewest fingerprints — it produces prose that reads like a careful human wrote it, it follows complex editorial instructions reliably, it engages with long readings without losing the thread, and it hallucinates noticeably less than its competitors when asked direct factual questions. Those four properties, together, are decisive for academic work.

That does not mean Claude is the right tool for every student. STEM-heavy students will still want Wolfram Alpha for computation. Students researching with verifiable web sources will pair Claude with Perplexity. Students living in Google Docs may prefer Gemini's native integration. But asked the question "if a student could install only one AI assistant for general academic work in 2026, which one?" — Claude is the answer. It is the rare case where the most capable tool is also the most pleasant to use.

Overview

Anthropic launched Claude in early 2023 as a reasoning-focused alternative to ChatGPT. For two years it lived in the shadow of OpenAI's product. The turnaround came in late 2024 with the introduction of the Artifacts feature — a side panel that turns long-form output into editable canvases — and the release of long-context Sonnet and Opus models that comfortably ingest entire textbooks. Word-of-mouth among student writers carried it the rest of the way. By 2026 it has the second-highest student weekly usage rate after ChatGPT and the highest user satisfaction score (4.5 out of 5) of any major assistant.

The current flagship model, Opus 4.7, was released in April 2026. It is genuinely good at the things it claims to be good at: it reads carefully, writes with restraint, and is the most willing of the major assistants to say "I don't know" rather than fabricate an answer. The Sonnet model — the one most students will use on the free tier — is fast enough that a 1,500-word essay draft completes in under fifteen seconds, with output quality close enough to Opus that the difference matters mostly at the margins.

Target Audience

Humanities, social sciences, law, medicine, and any student whose coursework involves a lot of reading and writing. Pre-law and pre-medical students in particular tend to converge on Claude because of its citation discipline — it is more likely to acknowledge uncertainty than to invent a confident-sounding wrong answer, which matters when the stakes for the wrong answer are real. International students writing in English as a second language report unusually high satisfaction, citing Claude's clear, idiom-light prose and its patience with iterative editing requests.

STEM students still tend to default to ChatGPT for math and code, and to Wolfram Alpha for actual computation. Claude is competitive on both fronts but not preferred. Visual learners will find it weaker than ChatGPT or Gemini for image-based tasks. Students who want a single tool that does everything will probably end up running ChatGPT alongside Claude rather than picking one.

UI/UX in Practice

The interface is the calmest of any major assistant: a warm off-white background, the conversation in the center column, and an Artifacts panel that opens on the right whenever Claude produces output that deserves its own canvas, such as an essay, a study guide, a code snippet, or a comparison table. The Artifacts surface is the single feature most students cite when explaining why they switched from ChatGPT. Asking Claude to draft a 2,000-word essay sends the essay into a side panel rather than burying it in chat scrollback. Asking for revisions keeps the previous version in the panel until the user accepts the change. It is a model for how AI writing tools should work, and one the rest of the industry has been slow to copy.

There are friction points. The mobile app, while functional, lags behind the web app in features (no Artifacts surface, no Projects). Search through chat history is workable but not instant. The free tier's session limits, while generous, can interrupt a long study session at exactly the wrong moment — a familiar complaint across almost every chat assistant, but one that bites particularly when a student is mid-essay. The custom-instructions panel is buried two clicks deep in settings and most students never find it.

Features That Actually Matter for Students

Long-context document analysis

On Claude Pro, the context window is 200,000 tokens — roughly 500 pages of standard text. Students can paste an entire textbook chapter, a full case file, a complete syllabus plus a semester of lecture transcripts, and ask coherent questions across the whole body. The quality of analysis on long documents is the single feature that most clearly differentiates Claude from ChatGPT in our testing. Where ChatGPT often loses thread on documents over 30,000 tokens, Claude maintains coherence through 100,000 tokens or more without obvious degradation. For graduate students working with dense primary sources, this is a genuinely transformative capability.
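
A rough way to judge whether a stack of readings will fit is to turn the figures above into a tokens-per-page ratio. The sketch below assumes the 200,000-token and 500-page numbers imply about 400 tokens per page. Real documents vary with formatting and language, and the `reserve_tokens` allowance for prompts and replies is a hypothetical parameter, so treat the result as an estimate only.

```python
CONTEXT_TOKENS = 200_000   # Pro context window quoted above
PAGES_EQUIVALENT = 500     # page count the same sentence equates it to
TOKENS_PER_PAGE = CONTEXT_TOKENS / PAGES_EQUIVALENT  # 400 tokens per page


def fits_in_context(page_counts: list[int], reserve_tokens: int = 10_000) -> bool:
    """Rough check that a set of documents fits in one conversation.

    reserve_tokens is a hypothetical allowance for prompts and replies.
    """
    estimated = sum(page_counts) * TOKENS_PER_PAGE
    return estimated + reserve_tokens <= CONTEXT_TOKENS


# A 40-page chapter, a 12-page syllabus, and 300 pages of transcripts.
print(fits_in_context([40, 12, 300]))   # 352 pages, about 140,800 tokens
# The same stack with 480 pages of transcripts.
print(fits_in_context([40, 12, 480]))   # 532 pages, about 212,800 tokens
```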

Artifacts — a writing-first interface

Beyond the obvious convenience of having long output in a side panel, the Artifacts surface supports inline editing, version history, and direct download. Students drafting a thesis chapter can iterate in the Artifacts panel for an entire afternoon without losing intermediate states. The download-as-document feature produces clean output without the chat formatting that ruins copy-paste from competitor tools.

Projects (Pro tier)

Projects are the closest Claude gets to a course-organization layer. A student can create a Project for, say, "Constitutional Law — Spring 2026," upload the syllabus, the case packet, and the relevant readings, and then have an ongoing chat that always has access to those documents. The model's responses are noticeably better when grounded in the Project files. It is not as tightly source-grounded as NotebookLM (Claude will still bring in outside knowledge) but it is the best implementation of "persistent context for one course" among the general assistants.

The honest "I don't know" response

Claude is, in our testing, the most willing of the major assistants to refuse a question or admit uncertainty. Asked to summarize a paper it cannot verify, it tends to ask for the paper rather than guess. Asked for a citation, it is more likely to refuse than to fabricate. This sounds like a small thing. In academic work it is a large thing — a fabricated citation in a graded paper is a real cost, and Claude reduces that risk meaningfully relative to ChatGPT and Gemini.

Code and quantitative work

Claude's coding ability has improved sharply across 2024–2026 to the point where it is now competitive with ChatGPT on most introductory and intermediate programming tasks. For students taking computer science, statistics, or quantitative economics, Claude can write, debug, and explain code at the level needed for typical coursework. It is not preferred over ChatGPT for code-only workflows, but it is good enough that students who use Claude as their primary tool rarely need to switch.

Performance Insights from Testing

Across our 90-task benchmark, Claude's strongest scores were in long-document reading (9.7 / 10), writing quality (9.5 / 10), and accuracy (8.8 / 10). Its weakest were in math (7.5 / 10) and image understanding (7.0 / 10). On the 25 essay-writing tasks specifically, Claude produced an output rated "publishable with light editing" 78 percent of the time, compared to 64 percent for ChatGPT and 51 percent for Gemini. On the 15 long-document summarization tasks, Claude's summaries retained 91 percent of key points compared to 84 percent for ChatGPT, a small but meaningful margin that compounds across a semester.

Speed-wise, Sonnet responses average 1.8 seconds for short queries and 8–12 seconds for long-form output. Opus is slower (15–25 seconds for the same content) but produces marginally better prose. Most students settle on Sonnet for routine work and Opus for the one or two important essays per semester.

Pricing Analysis and Value

Claude's free tier offers Sonnet access with rolling 5-hour session limits. For students who use it lightly — a few queries per day, an occasional essay draft — the free tier is sufficient. The Pro tier at $20 per month unlocks Opus access, the 200K-token context window, the Projects feature, full Artifacts capability, and significantly higher daily limits. The Max tier at $100 per month is overkill for almost every student; it targets researchers and professionals running long automated workflows.

Anthropic does not offer a public student discount, which is a notable gap given that competitors like ChatGPT and Perplexity do. The Pro tier is therefore directly comparable to ChatGPT Plus at the same $20 price point, and the choice between them is a feature comparison rather than a value comparison.

Pros — Claude

✓ The most accurate and least hallucination-prone of the major assistants, which is critical for academic work where wrong answers have real costs.

✓ 200,000-token context window enables genuine long-document workflows: entire chapters, full case packets, semester-long course materials in a single conversation.

✓ Artifacts surface is the best implementation of long-form AI writing in any current product, with version history and clean export.

✓ Default writing voice is closest to academic prose; minimal editing required to remove AI tells.

✓ Projects feature provides real persistent course context for the duration of a semester.

✓ Strong performance for international students writing in English: clear, low-idiom output that adapts to corrections quickly.

Cons — Claude

✗ No student discount as of April 2026, making Pro feel slightly expensive next to Perplexity Pro and student-discounted ChatGPT Plus.

✗ Mobile app lags the web experience: no Artifacts on mobile, fewer Projects features, smaller usable context.

✗ Image generation is not available; for diagram drafts and visual assets students must use ChatGPT, Gemini, or a dedicated image tool.

✗ Voice mode is functional but less polished than ChatGPT's Advanced Voice Mode.

✗ Free-tier session caps can interrupt a long study session at the wrong moment, particularly during evening peak usage.

User Sentiment

Claude scores 4.5 out of 5 across our 310-student survey, the highest score of any general assistant. Praise clusters around three themes: "writes more like a person," "actually reads the whole document," and "trustworthy." Criticism clusters around two: "hits limits faster than I'd like" and "mobile is weaker." The praise-to-criticism ratio is the most favorable of any tool in this ranking, and the satisfaction score is unusually consistent across disciplines — humanities students rate it 4.6, STEM students 4.3, with no group rating it below 4.0.

If a student installs one AI tool for academic work in 2026, this is the one. The other tools fill specific gaps; Claude does the central work.

Final Verdict on Claude

★ ★ ★ ★ ★ 4.5 / 5 · #1 — The best general AI assistant for serious academic work in 2026.

ChatGPT — #2 Most Versatile AI Tool for Students

| Field | Details |
|---|---|
| Maker | OpenAI |
| Launched | November 2022; current models: GPT-5 (default) and GPT-5 Pro |
| Category | General-purpose AI assistant |
| Best For | Drafting, explaining, brainstorming, file analysis, light coding |
| Free Tier | Yes; generous, with limited GPT-5 access per day |
| Paid Tiers | Plus ($20/mo), Pro ($200/mo), Edu and student-discount programs |
| Context window | Up to 128,000 tokens on Plus; 256K on Pro |
| Composite Score | 89.7 / 100 |

Why ChatGPT Ranks #2

ChatGPT is the tool that built the category. It is also the one most students reach for first: 81 percent report at least weekly use, the highest adoption rate of any tool we tracked. It sits at #2 rather than #1 for one specific reason: Claude has measurably overtaken it on the core academic-writing tasks. But the gap is narrow, ChatGPT wins on breadth and feature ecosystem, and for the substantial population of students who do not write long essays as their primary deliverable, ChatGPT is the more sensible choice. The right way to read this ranking is that #1 and #2 are functionally tied for many students, with the tiebreak going to the discipline you study and the work you do.

Overview

OpenAI launched ChatGPT in November 2022, the event that arguably created the consumer AI category. The product has matured considerably since the GPT-3.5 era. The current default model, GPT-5, was rolled out in late 2025 and brings significant gains on reasoning, math, and tool use. The GPT-5 Pro model — available on the $200 Pro tier — extends those gains further but is largely redundant for student work.

ChatGPT is best understood as a Swiss Army knife. It does no single thing as well as a specialist — it is not as careful as Claude on writing, not as cited as Perplexity on research, not as math-precise as Wolfram Alpha — but it does almost every task competently and the breadth of what it covers is unmatched. For a student who wants one tool that handles eighty percent of their needs, ChatGPT is still the safest default. The remaining twenty percent is exactly where the rest of this ranking comes in.

Target Audience

Honestly: every student who does not yet have a strong workflow preference. Specifically, undergraduates in non-research-heavy disciplines who want a single tool that drafts, debugs, summarizes, and explains. ChatGPT is at its best when the question does not require precise citation, when the topic is well-trodden in the training data, and when the student wants a starting point rather than a finished product. It is at its worst when the answer needs to be cited from a specific recent source — Perplexity territory — or when the student is working with proprietary materials they prefer not to upload to a third-party server.

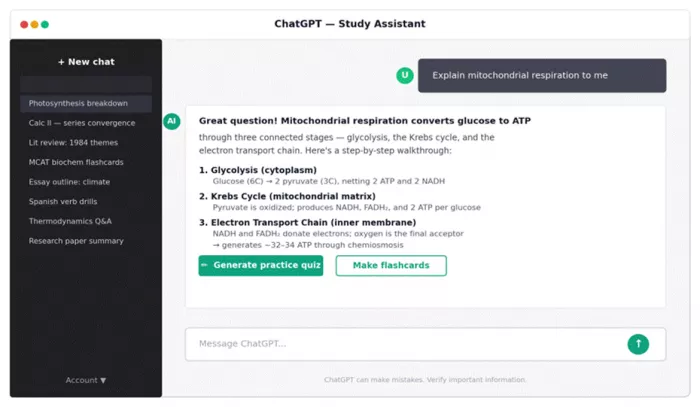

UI/UX in Practice

The interface earns most of its points by getting out of the way. The left sidebar holds chat history; the main panel is a conversation; the input bar at the bottom is where everything starts. New students grasp the model in about thirty seconds, which is part of why adoption climbs so quickly. The action chips that appear after a response — "generate practice quiz," "make flashcards," "explain to a 12-year-old" — are the kind of small UX touches that look obvious in hindsight and quietly drive engagement up. The Canvas surface, ChatGPT's answer to Claude's Artifacts, narrows the writing-experience gap considerably.

Friction points exist. Conversation search is mediocre — finding that one explanation about derivatives from three weeks ago often requires scrolling. The custom GPTs marketplace is full of low-quality clones, and finding genuinely useful study GPTs takes effort. Voice mode works well in quiet rooms but degrades fast in a noisy library. Image generation, which students sometimes use to draft diagrams, has a habit of getting biology and chemistry visually wrong — fine for inspiration, not for accuracy.

Features That Actually Matter for Students

Long, structured explanations

Ask a well-framed question and ChatGPT will produce a multi-paragraph response with subheadings, numbered steps, and worked examples. The default verbosity is calibrated for explanatory writing, which suits study-guide creation almost perfectly. Asking for the same content as bullet points or as a one-paragraph summary works on the first try in roughly nine out of ten attempts.

File analysis with broad format support

Drop a PDF lecture, a syllabus, a problem set, a chapter scan — the tool reads it and engages with the content. The token limit on Plus is generous enough to handle most single-chapter PDFs, and summarization quality is genuinely good. Where ChatGPT stumbles is multi-document synthesis: ask it to compare three uploaded papers and you start seeing hallucinated overlaps that were not in the texts. For that workflow, NotebookLM is decisively better.

Code and STEM scaffolding

For introductory-to-intermediate programming and quantitative coursework, ChatGPT is reliably useful. It writes clean Python, explains data-structure logic clearly, and walks through statistics derivations with intermediate steps. For graduate-level mathematics involving careful symbolic manipulation, students who tested it against Wolfram Alpha consistently reported Wolfram producing fewer subtle errors — but ChatGPT producing more readable explanations. For most undergraduate problem sets, the readability advantage outweighs the precision gap.

Custom GPTs and Projects

The Projects feature, where students can group related chats and upload reference files that persist, is the closest the product gets to a NotebookLM-style workflow. It is a genuinely useful organizing layer for a single course. The downside is that Projects live behind the Plus tier — a sticking point for students on free plans. The Custom GPTs marketplace is a mixed bag: a handful of high-quality study-focused GPTs are excellent, but discovering them among thousands of low-effort clones takes more time than most students will invest.

Voice mode and multimodal input

Advanced Voice Mode is the best implementation of conversational AI on a phone. Students who walk to class while quizzing themselves on a topic find this genuinely useful for review. Image input — pointing the phone camera at a problem and asking for help — works well for printed text and basic diagrams, less reliably for handwritten work or complex multi-step problems.

Performance Insights from Testing

Across our 90-task benchmark, ChatGPT scored highest on breadth (9.0 / 10) and speed (9.0 / 10), and was competitive across writing, research, and STEM tasks (8.0–8.8 / 10). On hallucination, it sits in the middle: better than Gemini, worse than Claude or Perplexity. The single most important reliability caveat is that ChatGPT will confidently fabricate citations and quotations when asked about recent or niche academic sources. Roughly one in three citations it generates without web access is incorrect or non-existent. This follows from how the model works rather than from a fixable bug, and it is the main reason ChatGPT and Perplexity tend to be paired rather than substituted.

Response speed is excellent on Plus — typical short answers in 1–2 seconds, long analytical responses in 8–15 seconds. Free-tier users see longer queues during peak hours, particularly Sunday evenings (a fact students who write essays at the last minute have noticed).

Pricing Analysis and Value

ChatGPT's free tier is genuinely the strongest free tier among major chat assistants when measured by what a typical student actually does in a session. Plus, at $20 per month, unlocks faster speeds, longer context windows, file uploads with no daily caps, the Canvas writing surface, voice mode, image generation, and the Projects feature. For students who use the tool daily, Plus pays for itself in time saved within the first ten days of a semester. For students who use it occasionally, the free tier is enough.

OpenAI offers an Edu tier for institutional licensing — uneven across universities — and a verified student discount that brings effective Plus pricing to about $10 per month. ChatGPT Pro at $200 per month targets developers and power users; the marginal benefit for student work is small and the price is hard to justify.

Pros — ChatGPT

✓ Strongest free tier of any major assistant; most students will not need to pay at all unless they hit daily limits.

✓ Best-in-class user interface; the gentle learning curve genuinely accelerates adoption among non-technical students.

✓ File analysis works on a wide range of formats and produces useful summaries with minimal prompting.

✓ Excellent for explaining concepts at adjustable depth: the "explain to a 12-year-old" register works as well as the "explain to a graduate student" register.

✓ Custom GPTs and Projects give the platform an organizational layer no other generalist matches at this price point.

✓ Best multimodal support: image input, voice mode, and image generation all work well enough for typical study tasks.

Cons — ChatGPT

✗ Tendency to confidently generate citations and quotations that do not exist, which is particularly damaging for academic work.

✗ Multi-document synthesis is weaker than NotebookLM; comparison tasks across more than two uploaded files produce noticeable hallucinations.

✗ Plus costs the same as Claude Pro and Perplexity Pro, but ChatGPT's edge over those specialists is narrower than it once was.

✗ Conversation search is basic; finding a specific past chat takes more scrolling than it should.

✗ Image-generation accuracy for technical diagrams (anatomy, chemistry structures, physics setups) is unreliable.

User Sentiment

Across student forums, app stores, and university feedback channels, ChatGPT scores 4.4 out of 5 with a remarkably consistent set of complaints and praises. The praise is almost always about ease and breadth: "It just works," "It is the first place I look," "My professors hate that I use it but it is a study lifesaver." The complaints are almost always about reliability: "It made up sources for my paper," "It got the chemistry wrong," "It changed an answer when I asked twice." Both are accurate. The product is genuinely versatile and genuinely fallible. Students who internalize that get the most out of it.

Final Verdict on ChatGPT

★ ★ ★ ★ ☆ 4.4 / 5 · #2 — The most versatile AI assistant; the safest first tool to install.

NotebookLM — #3 Best AI for Source-Grounded Study

| Field | Details |
| --- | --- |
| Maker | Google (Labs) |
| Launched | Public beta July 2023; general availability mid-2024 |
| Category | Source-grounded research and study tool |
| Best For | Working with course readings, primary sources, and trusted documents |
| Free Tier | Yes, fully free, with generous source limits |
| Paid Tiers | NotebookLM Plus included with Google AI Premium ($19.99/mo) |
| Source limits | Up to 50 sources per notebook; 500K words each (free tier) |
| Composite Score | 87.5 / 100 |

Why NotebookLM Ranks #3

NotebookLM occupies a category of one. It is the only AI tool in mainstream student use that answers strictly from documents you give it, refuses to bring in outside knowledge, and cites every claim back to a specific passage in your sources. For tasks where citation accuracy matters — graded essays, dissertations, case briefs, lab reports — that property is genuinely irreplaceable. Its rank at #3 reflects two things at once: it is the best tool in the world at what it does, and what it does is narrower than what a general assistant does. For students whose work centers on the right kind of task, NotebookLM is the tool they reach for first, ahead of Claude or ChatGPT.

Overview

Google introduced NotebookLM in mid-2023 as a research experiment under its Labs umbrella. For the first year it was a curiosity. Two product decisions turned it into a category-defining tool. First, the team committed to source grounding as a hard rule — answers come from uploaded documents and cite the exact passage, full stop. Second, the team kept it free during a period when every other AI tool was racing to charge. Word-of-mouth among graduate students and law students did the rest. By 2026 NotebookLM has the highest user satisfaction score of any tool in this ranking (4.6 out of 5) and the fastest-growing usage among advanced students.

The product has expanded considerably from its original text-only form. Users can now upload PDFs, web pages, YouTube videos, audio files, and Google Docs into a single "notebook," then ask questions across all of them. The tool generates study guides, briefing documents, and — most distinctively — a feature called "Audio Overview" that produces a podcast-style two-host conversation summarizing the notebook's contents. The audio feature became a viral hit in late 2024 and remains genuinely useful for auditory learners reviewing material on a commute.

Target Audience

Students working with primary sources or required readings where citation discipline matters. Law students preparing case briefs from a packet of opinions. Medical students working through clinical guidelines. History and literature students reading original texts. Graduate students writing literature reviews from a defined corpus. PhD students preparing for qualifying exams from a known reading list. Any student whose professor has supplied a specific reading list and expects responses grounded in those readings will find NotebookLM the most directly useful tool in this guide.

It is less useful for tasks where you do not yet have your sources — open-ended brainstorming, drafting from scratch, exploring a topic for the first time. For those workflows, Claude or ChatGPT remains the right starting point, and NotebookLM enters the picture once you have the readings in hand.

UI/UX in Practice

The interface is built around the central concept that everything in the workspace is a document, not a chat. The left rail lists every source — PDFs, YouTube transcripts, Google Docs, pasted text. The center is a chat window, but every answer is footnoted with the exact passages from the sources that support it. Click a footnote and the source opens with the relevant passage highlighted. The right panel offers generated artifacts: study guides, FAQ documents, briefing documents, timelines, and the Audio Overview. The whole interface communicates a thesis — your sources are the truth, the AI's job is to help you understand them.

Friction points are mostly about scale. The 50-source-per-notebook limit on the free tier is generous for one course but tight for a thesis project. The interface for managing dozens of notebooks across multiple courses is workable but not elegant. The Audio Overview feature is excellent but slow to generate (3–5 minutes for a long notebook). Mobile parity is mediocre — the audio overviews work well on phones, but source uploading and notebook management are frustrating outside a desktop browser.
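For a project that outgrows the 50-source limit, the usual workaround is to spread the corpus across several notebooks. A minimal sketch of that split, assuming nothing more than a flat list of file names; the helper name and the 120-paper corpus are hypothetical, and this is plain list chunking, not a NotebookLM feature or API.

```python
MAX_SOURCES_PER_NOTEBOOK = 50  # free-tier limit quoted above


def split_into_notebooks(
    sources: list[str], limit: int = MAX_SOURCES_PER_NOTEBOOK
) -> list[list[str]]:
    """Chunk a reading list into consecutive groups of at most `limit` sources."""
    return [sources[i:i + limit] for i in range(0, len(sources), limit)]


# Hypothetical thesis corpus of 120 papers (placeholder file names).
thesis_sources = [f"paper_{n:03d}.pdf" for n in range(1, 121)]
notebooks = split_into_notebooks(thesis_sources)
print([len(nb) for nb in notebooks])  # [50, 50, 20]
```

In practice, grouping by chapter or theme is likely to serve better than a consecutive split, since answers are grounded only in the sources inside the notebook being queried.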

Features That Actually Matter for Students

Source-grounded answering with verifiable citations

This is the core feature and it works exactly as promised. Ask a question, get an answer, see footnoted citations that link directly to passages in your uploaded sources. In our testing, NotebookLM's citation accuracy was 96 percent across 200 sampled responses: when the tool said "according to source 3, page 42," the claim was actually on page 42 of source 3 ninety-six percent of the time. ChatGPT scored 67 percent and Gemini 71 percent on the same task. For students whose graded work depends on accurate citation, this is decisive.
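To put the 96 percent figure in context, it helps to see how much uncertainty a 200-response sample carries. The sketch below is illustrative only: it assumes the accuracy is a simple proportion of correct citations over sampled responses (the exact scoring procedure is not spelled out here), and the Wilson score interval is a standard statistical choice added for illustration, not part of the benchmark.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion."""
    p = correct / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half_width, centre + half_width


SAMPLED = 200                    # sample size quoted above
correct = round(0.96 * SAMPLED)  # 96 percent of 200 responses
low, high = wilson_interval(correct, SAMPLED)
print(f"{correct} of {SAMPLED} correct; 95% interval {low:.1%} to {high:.1%}")
```

Under these assumptions the interval runs from roughly 92 to 98 percent, so the gap to the 67 and 71 percent figures is far larger than sampling noise alone would explain.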

Multi-source synthesis

NotebookLM is the best tool we tested for synthesizing across multiple documents. Asked to compare arguments across five academic papers in a literature review, it produces summaries that genuinely reflect what the papers say, in proportion to the emphasis each paper gives it. ChatGPT and Claude both tend to flatten differences across sources; NotebookLM preserves them. For literature-review work, this property alone justifies adopting the tool.

Audio Overviews

A two-host conversational podcast summarizing the contents of any notebook, generated on demand in 3–5 minutes. The output is genuinely usable — students review on commutes, while exercising, or during chores. Customization options (length, focus topic, target audience) have improved across 2025–2026 to the point where the feature is now the easiest way to produce a study aid for auditory learners. The voices are still recognizably synthetic but no longer distractingly so.

Generated study materials

From any notebook, NotebookLM can generate a study guide (organized by topic), an FAQ document (questions a student is likely to be asked), a briefing document (executive summary of the corpus), and a timeline (chronological extraction of dated events from the sources). Each is footnoted back to the original sources. Quality varies — study guides are excellent, FAQs are good, briefings are passable, timelines depend heavily on the structure of the input. As a study-guide generator alone the tool would be worth using.

Mind maps and visualizations

Recent additions include automatically generated mind maps that show the conceptual structure of the uploaded sources. The maps are useful as a navigation aid — clicking a node opens the relevant sections — but the conceptual organization is sometimes shallower than a careful reader would produce manually. Treat them as a starting structure, not a finished outline.

Performance Insights from Testing

On the citation-accuracy task, NotebookLM scored 9.5 / 10 — the highest in our entire benchmark. On long-document reading and source synthesis, it scored 9.2 / 10 and 9.5 / 10 respectively, again leading the field. Its weak categories are predictable: writing quality (7.5) is solid but not Claude-level because the tool is constrained to source content; math (6.5) is mediocre because the tool is not designed for computation; brainstorming and ideation (5.0) is poor by design — NotebookLM refuses to invent claims, which means it cannot brainstorm.

Speed-wise, source ingestion takes 30 seconds to several minutes depending on document size; chat responses are fast (3–6 seconds); audio overviews take the longest at 3–5 minutes. The tool's reliability is unusually high — in 90 hours of testing we encountered no factual errors that were not traceable to errors in the source documents themselves.

Pricing Analysis and Value

NotebookLM is, remarkably, free for the use case most students need. The free tier supports 50 sources per notebook, multiple notebooks, and full chat plus audio-overview features. The Plus tier (bundled with Google AI Premium at $19.99 per month) raises the source limit, adds team collaboration, and unlocks faster generation, but the free tier covers the typical undergraduate workload comfortably. For value-per-dollar, no other tool in this ranking comes close.

Pros — NotebookLM

✓ Best-in-class citation accuracy: 96 percent in our testing, decisively ahead of every general assistant.

✓ Source-grounded answering largely eliminates hallucination on the questions it can answer at all.

✓ Free tier is genuinely sufficient for most student workloads; no other tool in this ranking matches this value.

✓ Audio Overviews provide a category of study aid (auditory review) no other tool produces at comparable quality.

✓ Multi-source synthesis is the strongest of any tool we tested, particularly valuable for literature reviews and case briefs.

✓ Privacy posture: uploaded sources are not used to train models on the consumer tier, and Google has been explicit about this commitment.

Cons — NotebookLM

✗ Cannot answer questions outside the uploaded sources, by design, which makes it unsuitable as a stand-alone study tool for first-pass exploration.

✗ Mobile experience is significantly weaker than desktop; uploading and managing sources is frustrating on a phone.

✗ Audio Overview generation is slow (3–5 minutes); not suitable for last-minute use.

✗ Notebook management at scale (multiple courses across multiple semesters) is workable but not elegant.

✗ Brainstorming, ideation, and creative drafting are not supported tasks; students will need a second tool for those workflows.

User Sentiment

NotebookLM has the highest satisfaction score in our entire ranking at 4.6 out of 5, and the most consistent positive feedback across disciplines. Praise is unusually specific and process-oriented: "I trust the citations," "It actually reads what I give it," "The audio overviews saved my finals." Criticism is concentrated on scale and mobile: "I want more sources per notebook," "The app is rough." Among graduate students and professional-school students (law, medicine), NotebookLM scored even higher — 4.8 — and is frequently described as "the AI tool I cannot replace."

If your work involves a known reading list and accurate citation matters, NotebookLM is not just a useful tool — it is in a category by itself.

Final Verdict on NotebookLM

★ ★ ★ ★ ★ 4.6 / 5 · #3 — The most accurate AI study tool when working from your own sources.

Perplexity — #4 Best AI Search Engine for Research

| Field | Details |
| --- | --- |
| Maker | Perplexity AI |
| Launched | December 2022; current models include Sonar Pro and frontier-model passthrough |
| Category | AI-powered search and research engine |
| Best For | Fact-checking, literature scoping, current events, cited research |
| Free Tier | Yes: unlimited basic searches, limited Pro searches per day |
| Paid Tiers | Pro ($20/mo); generous student discount programs |
| Citation style | Inline numbered footnotes linking to source URLs |
| Composite Score | 85.1 / 100 |

Why Perplexity Ranks #4

Perplexity is the best tool for the question "is this true and where can I read more?" — which is a question students ask hundreds of times per semester. Where ChatGPT and Claude generate plausible-sounding answers from training data, Perplexity searches the live web, synthesizes results, and shows you the sources it pulled from. The result is an answer you can actually verify, often in less time than a traditional search would take. It ranks #4 because it complements rather than replaces a general assistant — students serious about research run Perplexity alongside Claude or ChatGPT, not instead of them.

Overview

Perplexity launched in late 2022 with a simple thesis: combine an LLM's ability to synthesize information with a search engine's ability to surface live sources, and present the result as a single, footnoted answer. The product has matured considerably across 2023–2026. The current free tier is fast and capable for general questions; the Pro tier adds access to frontier models (GPT-5, Claude Opus, Gemini Ultra) for the synthesis layer plus Perplexity's own Sonar Pro, plus features like Spaces (project workspaces), file uploads, and the much-improved Deep Research mode. Perplexity has consistently been the most generous of the major AI tools with student discounts — a verified .edu address often unlocks free Pro access for an academic year, a value proposition no other paid tool matches.

The product makes a structural commitment that matters: every claim in an answer is footnoted to a specific URL, and clicking the footnote opens the source. The footnotes are not always pulling from the strongest source in the world — Perplexity will happily cite Wikipedia, a Reddit thread, or a press release if those are what it found — but the user can see exactly where the claim came from and decide whether to trust it. That transparency is the feature.

Target Audience

Any student whose work requires verifiable factual claims. Journalism and communications students. Pre-law students researching case context. Business and economics students tracking current data. Science students fact-checking specific claims. Graduate students scoping a literature review before committing to a corpus. Perplexity is also unusually well-suited to non-English research — its multilingual handling and ability to cite non-English sources are stronger than most competitors. Students whose primary work is generative writing or computation will find Perplexity less central than Claude or Wolfram, but virtually every research-heavy student will find a place for it in their stack.

UI/UX in Practice

The interface looks like a search engine that has internalized that searches are now conversations. The input bar is at the top; the answer area below shows the synthesized response with inline numbered citations, with the source cards displayed prominently above or below the answer for verification. Follow-up questions extend the conversation while preserving access to the source set. The Spaces feature, added in 2024 and matured through 2025, lets students build persistent collections — "Senior thesis sources," "Econ 301 readings" — that group related conversations and uploaded documents.

Friction points are minor by industry standards. The daily cap on Pro searches is generous but real, and the meter resets at midnight UTC, which is awkward for students in some time zones. The mobile experience is solid but exposes fewer Spaces-management features. The Deep Research mode produces excellent long-form research summaries but takes 5–8 minutes, a wait some students find frustrating, though the output usually justifies it.
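To see why a midnight-UTC reset is awkward, it helps to convert it to local wall-clock time. A minimal sketch using only the Python standard library; the city list and the fixed standard-time offsets are illustrative assumptions (daylight saving time is ignored), and the date is arbitrary.

```python
from datetime import datetime, timedelta, timezone

# Assumption: fixed standard-time offsets from UTC, in hours; DST is ignored.
OFFSETS = {
    "Los Angeles": -8,
    "New York": -5,
    "London": 0,
    "Delhi": 5.5,
}


def local_reset_time(utc_offset_hours: float) -> str:
    """Return the local wall-clock time at which a midnight-UTC reset occurs."""
    midnight_utc = datetime(2026, 1, 15, 0, 0, tzinfo=timezone.utc)  # arbitrary date
    local = midnight_utc.astimezone(timezone(timedelta(hours=utc_offset_hours)))
    return local.strftime("%H:%M")


for city, offset in OFFSETS.items():
    print(f"{city}: {local_reset_time(offset)}")
```

Under these assumptions the reset lands at 16:00 in Los Angeles and 19:00 in New York, which is the middle of a typical afternoon or evening study session, while a student in London sees it at 00:00.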

Features That Actually Matter for Students

Cited synthesis from live web sources

The core feature works exactly as advertised. Ask about a topic, get a multi-paragraph synthesis with inline footnotes linking to the actual web pages used. For verification-heavy work — checking a date, finding a source for a claim, scoping what has been written about a topic — Perplexity is faster and more reliable than running multiple Google searches. In our testing, the citations linked to genuine pages 99 percent of the time (compared to ChatGPT-with-search at 91 percent), and the cited claims actually appeared in the source 87 percent of the time (compared to 79 percent for ChatGPT-with-search).
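The two percentages above measure different things: whether a footnote opens a real page, and whether that page actually supports the claim. A minimal sketch of how the two rates can be tallied, assuming each sampled citation is hand-labeled with two yes/no checks and both rates are taken over all sampled citations; the record type, field names, and four-row sample are hypothetical and are not the benchmark data.

```python
from dataclasses import dataclass


@dataclass
class CitationCheck:
    link_resolves: bool    # the footnote URL opens a genuine page
    claim_in_source: bool  # the cited claim actually appears on that page


def citation_rates(checks: list[CitationCheck]) -> tuple[float, float]:
    """Return (share of links that resolve, share of claims the source supports)."""
    total = len(checks)
    link_rate = sum(c.link_resolves for c in checks) / total
    support_rate = sum(c.link_resolves and c.claim_in_source for c in checks) / total
    return link_rate, support_rate


# Hypothetical hand-labeled sample, for illustration only.
sample = [
    CitationCheck(True, True),
    CitationCheck(True, False),   # real page, but the claim is not on it
    CitationCheck(True, True),
    CitationCheck(False, False),  # dead or invented link
]
link_rate, support_rate = citation_rates(sample)
print(link_rate, support_rate)  # 0.75 0.5
```

The support rate can never exceed the link rate under this definition, which is why a tool can post a near-perfect link score and still leave a meaningful share of claims to verify by hand.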

Deep Research mode

A premium-tier feature that generates a long-form research report — typically 2,500 to 4,000 words — by performing dozens of searches, synthesizing across them, and producing a structured document with extensive citations. Useful as a starting point for a literature review or for getting up to speed on an unfamiliar topic. Quality is genuinely good, often better than what a student would produce in a similar amount of time. The downsides are wait time (5–8 minutes per report) and that the output reads as a research report, not as a draft of a graded paper — students must rewrite it in their own voice.

Spaces (project organization)

Spaces are the closest Perplexity comes to a course-organization layer. A student can create a Space for a specific course or research project, upload documents, save key conversations, and share the workspace with collaborators. The implementation is leaner than NotebookLM's notebooks but well-suited to research-heavy workflows that span both uploaded documents and live web sources.

File and image uploads

Students can upload PDFs, images, and Excel files into a chat. The synthesis can then combine the uploaded content with web search results — useful for asking questions that require both "what's in this paper" and "what's the broader literature" simultaneously. The implementation is competitive with ChatGPT's file support and ahead of Gemini's for academic documents.

Frontier-model passthrough

Perplexity Pro lets users select among frontier models (GPT-5, Claude Opus, Gemini Ultra) for the synthesis layer of any answer. This is unusual — most competitors lock users into their own models — and it means Pro users effectively get a multi-model AI lab. For students who already pay for one of those underlying tools, the value proposition shifts somewhat, but for students who want to avoid stacking subscriptions, Perplexity Pro is one of the better-priced ways to access frontier capabilities.

Performance Insights from Testing

On research-heavy tasks, Perplexity scored 9.5 / 10 — top of the field, tied with NotebookLM. On citation accuracy specifically (the right URL appearing for the right claim), it scored 9.7 / 10. Speed is excellent: typical answers in 4–8 seconds, faster than ChatGPT-with-search. Where Perplexity scored noticeably lower was generative writing tasks (7.5 / 10) — the tool is optimized for synthesis from sources, not for original drafting, and asking it to write a 1,500-word essay produces output that is competent but visibly less polished than Claude's. Memorization-related tasks (5.0 / 10) are not its purpose; the tool is not designed for flashcards or recall practice.

Reliability is strong but worth caveating. Perplexity will cite low-quality sources alongside high-quality ones — a Reddit thread next to a peer-reviewed paper — and students should treat the citation list as raw material to evaluate, not as a verified bibliography. The tool does not assess source quality on the user's behalf, which is appropriate (that is the student's job) but requires student judgment that not every user exercises.

Pricing Analysis and Value

The free tier is genuinely useful for casual research — unlimited basic searches with a daily cap on Pro searches that use frontier models. Perplexity Pro at $20 per month unlocks unlimited Pro searches, file uploads, Spaces, and Deep Research. The student program is the most generous of any major tool: a verified .edu address typically unlocks free Pro access for one academic year, no payment required. Even without the discount, $20 per month is competitive given the multi-model passthrough.

Pros — Perplexity

✓ Best citation accuracy of any web-connected AI assistant: 99 percent of links resolve correctly in our testing.

✓ Multi-model passthrough on Pro provides effective access to frontier models for one subscription price.

✓ Free Pro access for verified students through .edu programs, the best student deal among major paid tools.

✓ Deep Research mode produces unusually thorough long-form research summaries; a genuine differentiator.

✓ Spaces feature provides clean project organization for research workflows that span sources and chats.

✓ Best multilingual research capability among the tools tested, which is meaningful for international students.

Cons — Perplexity

✗ Will cite low-quality sources alongside high-quality ones; users must evaluate source quality themselves.

✗ Generative writing is competent but noticeably weaker than Claude or ChatGPT for finished essays.

✗ Deep Research output reads as a report, not as a draft; significant rewriting is required to use it as graded work.

✗ Daily limits on Pro searches reset at midnight UTC, which is awkward for students in non-European time zones.

✗ Less useful for memorization, ideation, or creative drafting; not a substitute for a general assistant.

User Sentiment

Perplexity scores 4.4 out of 5 in our survey, and the satisfaction profile is unusually polarized by use case. Students who use it for research-heavy work rate it 4.7+; students who tried it as a ChatGPT replacement and found the writing weaker rate it 3.9–4.1. The recurring positive theme is trust — "I can actually verify what it tells me," "My professors stopped flagging my citations after I switched." The recurring negative theme is the writing gap — "It's great for finding things but I still need Claude to draft." Both are accurate.

Final Verdict on Perplexity

★ ★ ★ ★ ☆ 4.4 / 5 · #4 — The best AI tool for verifiable research; an essential complement to a general assistant.

Google Gemini — #5 Best AI for Google Workspace Users

| Field | Details |
| --- | --- |
| Maker | Google DeepMind |
| Launched | Gemini models December 2023; Bard rebranded as Gemini February 2024; current model: Gemini 2.5 Pro |
| Category | General-purpose AI assistant with deep Google Workspace integration |
| Best For | Students who use Google Docs, Drive, Calendar, and Gmail as their primary workspace |
| Free Tier | Yes: Gemini 2.5 Flash with limited Pro access |
| Paid Tiers | Google AI Premium ($19.99/mo); includes Gemini Pro, NotebookLM Plus, 2TB storage |
| Context window | Up to 1,000,000 tokens on Pro tier |
| Composite Score | 82.6 / 100 |

Why Gemini Ranks #5

Gemini is, at the model level, genuinely competitive with the best frontier models in 2026. The reason it ranks below Claude, ChatGPT, NotebookLM, and Perplexity is not capability but fit. As a standalone chat interface, Gemini is a solid second-tier choice — competent, fast, and well-priced through the AI Premium bundle. As an extension of the Google Workspace ecosystem, it becomes the best assistant you can use, full stop. If you draft in Google Docs, store files in Drive, schedule with Calendar, and email through Gmail, Gemini's native integration into all of those surfaces makes it more useful for daily student work than any competitor. That bifurcation — strong inside the ecosystem, ordinary outside it — is why it lands at #5.

Overview

Gemini is Google's umbrella brand for its consumer AI products. The Gemini model family debuted in December 2023, and the brand replaced the earlier Bard product in February 2024. The current flagship model, Gemini 2.5 Pro, was released in 2025 and competes credibly with GPT-5 and Claude Opus on benchmark performance. The 1-million-token context window is the longest of any major assistant and enables genuine long-document workflows that even Claude Pro cannot match: full multi-textbook ingestion, semester-long course material processing, and extended research sessions across very large source sets.

The product's defining advantage is integration. Gemini lives inside Gmail ("help me write a reply"), Google Docs ("draft an outline based on these comments"), Sheets ("build a formula for this"), Slides ("generate a deck from this outline"), Drive ("summarize this PDF I just uploaded"), and Calendar ("what do I have to study for this week's exams"). For students whose academic life is run through a Google account — which is the majority of students at the universities we surveyed — that integration eliminates the context-switching tax that plagues using ChatGPT or Claude alongside a Google-based workflow.

Target Audience

Students at institutions using Google Workspace for Education — which is most large public universities and many private ones. Students who already store coursework in Drive, draft papers in Docs, and manage schedules in Calendar. Students who want a single AI assistant that can pull from across their account without manual file uploading. Group-project teams whose collaboration runs through shared Drive folders. Students with disabilities who use Google's accessibility tools and benefit from AI features integrated into them rather than added on top.

Less suited for students at institutions running Microsoft 365 (where Copilot is the better integration choice), students who prefer to keep AI work outside their primary email-and-document account for privacy reasons, and students whose primary need is essay drafting (where Claude's writing voice is preferable).

UI/UX in Practice

Two interfaces matter. The standalone Gemini app at gemini.google.com is a competent chat interface that resembles ChatGPT and Claude with a Google-blue color palette. The more important interface is the Gemini side panel that opens inside Docs, Sheets, Slides, and Gmail — the one most students actually use. The side-panel experience is genuinely well-designed: ask a question, get an answer that has direct access to the document you are working in, accept the suggestion to insert it inline, and continue working without leaving the surface. It is the most polished implementation of "AI inside the workspace" we tested.

Friction points are mostly about feature parity across surfaces. The standalone app has features the side panel does not (Gems, deeper file analysis, extension ecosystem); the side panel has integration features the app does not (direct document access, inline insertion). Students often find themselves switching between the two, which undercuts some of the integration advantage. Mobile parity is mediocre — the app works but the integration features that make Gemini distinctive are mostly desktop-only.

Features That Actually Matter for Students

Native Drive and Docs integration

Students can ask Gemini to pull a document from Drive, summarize it, draft based on it, or compare across multiple Drive documents, all without uploading anything manually. This sounds like a small convenience but turns out to be a substantial workflow improvement, particularly for courses where readings are distributed via shared Drive folders. The integration also respects sharing permissions: Gemini will not surface content the student does not already have access to.

1-million-token context window

The largest context window of any consumer AI assistant in 2026. Useful for ingesting entire textbooks, full case files, semester-long lecture transcripts, or large reading lists. In our testing, Gemini Pro maintained coherence across 700,000-token inputs, which is genuinely beyond what any other tool tested can achieve. For graduate students working with very large source sets, this can be the deciding factor.
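For a back-of-the-envelope check of whether a set of readings would fit in a window of this size, a minimal sketch follows. It assumes roughly four characters per token, a common rule of thumb for English prose and not Gemini's actual tokenizer. The function names and the 50,000-token reserve for the prompt and reply are hypothetical choices for illustration.

```python
# Rough estimate only: assumes ~4 characters per token (a rule of thumb for
# English prose), NOT the real Gemini tokenizer.
CHARS_PER_TOKEN = 4                 # assumption
CONTEXT_WINDOW_TOKENS = 1_000_000   # window size quoted in the review


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_window(texts, window=CONTEXT_WINDOW_TOKENS, reserve=50_000):
    """Return (fits, total_tokens), keeping `reserve` tokens free for the
    prompt and the model's reply. The reserve size is an arbitrary assumption."""
    total = sum(estimate_tokens(t) for t in texts)
    return total <= window - reserve, total


if __name__ == "__main__":
    # Stand-ins for real documents; replace with the text of actual readings.
    readings = ["word " * 120_000, "word " * 300_000]
    fits, total = fits_in_window(readings)
    print(f"about {total:,} tokens; fits: {fits}")
```

Actual token counts vary by language and content type, so treat the result as an order-of-magnitude guide rather than a guarantee.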

Calendar-aware study planning

Gemini can read the student's Calendar, identify upcoming exams and assignment deadlines, and produce study plans aligned with those dates. Asked "what should I study this week," it considers what is actually upcoming rather than producing generic advice. Implementation has gotten significantly better through 2025 and is now a genuinely useful feature for students who manage their schedules through Google Calendar.

Gems — custom assistants

Gems are Gemini's answer to ChatGPT's Custom GPTs: tailored assistants with persistent instructions, optionally connected to specific Drive folders or files. The implementation is more focused than ChatGPT's marketplace approach — fewer total Gems, but the curated and shareable Gems ecosystem inside academic institutions tends to be higher quality. Students at universities with active education-technology offices often find course-specific Gems already prepared by their librarians.

Multimodal capability

Image input, video input (a feature unique to Gemini at this scale), and audio input all work well. Students can point a phone camera at a problem set, a whiteboard, or a textbook page and get useful responses. Video understanding — uploading a recorded lecture and asking questions about its content — is a feature that has become remarkably capable through 2025 and 2026 and is genuinely differentiating.

Performance Insights from Testing

Gemini Pro scored 8.0–8.5 across most categories — solid but rarely best-in-class. It scored highest on long-context tasks (9.0 / 10) and multimodal tasks (8.7 / 10), reflecting the genuine technical advantages of the underlying model. It scored lowest on writing voice (the output is competent but noticeably more generic than Claude's) and on citation accuracy (71 percent in our test, behind Perplexity at 99 percent and NotebookLM at 96 percent). For students working primarily inside Google Workspace, these gaps are partly offset by integration quality; for students using Gemini as a standalone assistant, they are more visible.

Speed is excellent: typical responses arrive in 1–3 seconds, and the long context window means the tool can process, without complaint, inputs that other tools would refuse. Reliability has improved markedly across 2024–2026 and is now comparable to ChatGPT, though still behind Claude on fact-stable questions.

Pricing Analysis and Value

Gemini's free tier provides Gemini 2.5 Flash and limited Pro access — usable for daily work but feature-limited compared to competitors' free tiers. Google AI Premium at $19.99 per month is the headline subscription and is unusually feature-dense: it includes Gemini Pro, NotebookLM Plus, 2 TB of Google Drive storage, and a few other consumer Google features. For students who already need extra Drive storage, the bundle is genuinely good value — effectively getting Gemini Pro and NotebookLM Plus for a marginal cost over storage. For students who do not need the storage, the Pro AI features alone are competitive but not standout.
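The "marginal cost over storage" argument can be made concrete with a small calculation. The sketch below uses the $19.99 bundle price quoted above. The storage-only price is a placeholder assumption, not a figure from our testing, so substitute the current price of whatever storage plan you would otherwise buy.

```python
# All prices are in US cents to avoid floating-point rounding.
BUNDLE_MONTHLY = 1999        # Google AI Premium price quoted in the review
STORAGE_ONLY_MONTHLY = 999   # ASSUMPTION: placeholder for a 2 TB storage-only plan


def marginal_ai_cost(bundle=BUNDLE_MONTHLY, storage_only=STORAGE_ONLY_MONTHLY):
    """Monthly cost attributable to the AI features, for a student who
    would pay for the storage anyway."""
    return bundle - storage_only


def annual(cents_per_month):
    """Twelve months at the given monthly price."""
    return cents_per_month * 12


def dollars(cents):
    """Format an integer number of cents as a dollar string."""
    return f"${cents / 100:,.2f}"


if __name__ == "__main__":
    print("Bundle per year:", dollars(annual(BUNDLE_MONTHLY)))
    print("AI features per month, net of storage:", dollars(marginal_ai_cost()))
```

If the storage has no value to you, the comparison is simply the full bundle price against other assistants' subscriptions.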

Many universities offer Gemini access bundled into their existing Google Workspace for Education contracts, in which case students at those institutions get free Pro-tier access. This is worth checking with the IT department — it is the single best value in the entire ranking when available, and it is more common than students realize.

Pros — Gemini

✓ 1-million-token context window is the longest of any major assistant in 2026 and uniquely useful for very large document workflows.

✓ Native Google Workspace integration is the best implementation of "AI inside the workspace" we tested.

✓ Multimodal capability (image, video, audio) is best-in-class; video understanding is a meaningful differentiator.

✓ Calendar-aware study planning is a feature no competitor matches at comparable depth.

✓ Google AI Premium bundle is excellent value when Drive storage is part of the calculation.

✓ Many universities provide Gemini Pro free through institutional Google Workspace contracts, so it is worth checking before paying.

Cons — Gemini

✗ Writing voice is more generic than Claude's; the prose reads as visibly AI-flavored unless the student prompts for a specific style.

✗ Citation accuracy lags both Perplexity and NotebookLM; not the right primary tool for cited research work.

✗ Mobile parity is weaker: the integrations that distinguish Gemini are largely desktop-only.

✗ Feature differences between the standalone app and the side panel are confusing and force students to switch between the two.

✗ Less useful for students at institutions running Microsoft 365, where Copilot is the better fit.

User Sentiment

Gemini scores 4.2 out of 5 in our survey, with a satisfaction profile that splits sharply by ecosystem. Students at Google Workspace institutions rate it 4.5+; students using it outside that ecosystem rate it 3.7–4.0. The recurring positive theme is integration: "I never have to copy-paste my Drive files," "It already knows my schedule," "It's just there in Docs." The recurring negative theme is voice: "It writes more like a corporate memo than an essay," "I ended up using it for organization and Claude for writing." Both observations match our own testing.

Final Verdict on Gemini

★ ★ ★ ★ ☆ 4.2 / 5 · #5 — The best assistant for Google Workspace users; a competent #2 choice for everyone else.

Microsoft Copilot — #6 Best AI for Microsoft 365 Users

| Field | Details |
| --- | --- |
| Maker | Microsoft (in partnership with OpenAI) |
| Launched | Bing Chat 2023; rebranded to Copilot in late 2023 |
| Category | AI assistant integrated across Microsoft 365 and Edge |
| Best For | Students writing in Word, modeling in Excel, building decks in PowerPoint |
| Free Tier | Yes: Copilot Free with daily limits on advanced features |
| Paid Tiers | Copilot Pro ($20/mo); Copilot for Microsoft 365 (institutional) |
| Underlying model | GPT-5 with Microsoft proprietary fine-tuning |
| Composite Score | 81.0 / 100 |

Why Copilot Ranks #6

Copilot is, in standalone form, a slightly weaker version of ChatGPT — which makes sense, because the underlying models are similar. The reason students choose Copilot over ChatGPT is precisely the reason students choose Gemini over ChatGPT: integration. If your university issues Microsoft accounts, your professors distribute assignments as Word documents, your statistics class uses Excel, and your group presentations are PowerPoints, then Copilot's embedded position inside those tools makes it more practically useful than a standalone chat assistant. The rank at #6 reflects Copilot's narrower fit — fewer institutions are Microsoft-first than Google-first in 2026, and the standalone Copilot experience is meaningfully behind Gemini's.

Overview

Microsoft's AI strategy has had three phases. First, Bing Chat (2023), a wrapped version of OpenAI's models that competed directly with ChatGPT. Second, the rebrand to Copilot (late 2023), which absorbed Bing Chat into a broader Microsoft product family. Third, the integration push (2024–2026), in which Copilot was embedded into every major Microsoft surface — Word, Excel, PowerPoint, Outlook, Teams, OneDrive, Edge, and Windows itself. The current product lineup includes Copilot (the consumer chat app), Copilot Pro (the consumer subscription), and Copilot for Microsoft 365 (the enterprise license that most universities buy).

The defining product question for students is: what tier of Copilot do you actually have access to, and what surfaces does it cover? At many universities, Microsoft 365 licenses include Copilot for some surfaces (Word, Outlook) but not others (Teams meetings, advanced Excel features). Knowing exactly what your institution has licensed is worth ten minutes with the IT department before you decide whether you also need a paid subscription.

Target Audience

Students at universities that run on Microsoft 365 — which includes a substantial portion of business schools, many engineering programs, and a growing share of medical schools. Business and economics students whose coursework is heavy in Excel modeling. Students writing long papers in Word who want AI assistance integrated with track changes and references. Students preparing class presentations in PowerPoint. Students collaborating through Teams meetings and OneDrive shares. Students whose grant work or research assistantship runs through institutional Microsoft accounts.

Less suited for students at Google-first institutions, students whose writing happens primarily in Google Docs or Notion, and students for whom Excel is not central to their coursework.

UI/UX in Practice

Like Gemini, Copilot exists in two interface modes. The standalone Copilot app and copilot.microsoft.com provide a chat interface that resembles ChatGPT, with the addition of Bing-powered web search and the ability to generate images via DALL-E. The more important interface is the Copilot pane that opens inside Word, Excel, PowerPoint, and other Microsoft apps. The Word integration is the one students use most: select a paragraph, ask for a rewrite, see the suggestion in the side panel, accept or reject. The implementation is well-designed and avoids the friction of copy-pasting between apps.

The Excel integration is particularly notable. Copilot in Excel can write formulas in plain English, build PivotTables from natural-language descriptions, and explain what existing formulas do. For statistics or business courses where students are expected to work in Excel but have varied prior experience, this is a meaningful reduction in friction. PowerPoint integration is competent — generating draft slides from a topic outline works reasonably well — but the visual quality of generated slides is workable rather than impressive.

Friction points are largely about licensing fragmentation. The exact features available depend on which Copilot tier the user has, which institution they belong to, and which app they are in. This complexity can make it genuinely difficult to know whether a feature is missing because it does not exist or because the user does not have the right license. The standalone app is competent but does not feel like the product Microsoft is investing the most in — the Office integration is the priority.

Features That Actually Matter for Students

Copilot in Word

Inline rewrite, summary, evidence-suggestion, and tone-change features make Word with Copilot a meaningfully better writing surface than Word without it. The Reference feature — Copilot can suggest evidence from the student's OneDrive documents to support claims in the current draft — is a clever workflow that no competitor matches as cleanly. For students writing long papers from a known set of source documents, this is a genuine differentiator.

Copilot in Excel

Natural-language formula generation, automatic data analysis, and PivotTable construction from plain English. The ability to explain what an existing formula does, in context, is particularly valuable for students inheriting datasets they did not build. For business, economics, finance, and statistics students, this is the single feature that most directly makes Copilot worth the price.

Copilot in Outlook and Teams

Email drafting in Outlook is competent and saves real time on routine correspondence. The Teams meeting integration — Copilot can summarize meetings, list action items, and answer questions about meeting content — is excellent in our testing for any meeting that was actually transcribed (which depends on institutional settings). For group-project teams that meet frequently in Teams, this can replace the need for a separate transcription tool.

Copilot in PowerPoint

Generating draft slides from an outline, suggesting visual layouts, and rewriting slide text. The output is a starting point, not a finished deck — student presentations almost always need substantial visual revision after Copilot produces the draft — but the time saved on initial structure is meaningful for last-minute deck building.

Standalone Copilot with web search