Generative AI did not invent adaptive learning, but it has quietly rewritten the unit economics of every layer that sits on top of it. The global AI in education market reached USD 7.05 billion in 2025 and is projected by Precedence Research to climb to USD 136.79 billion by 2035, a CAGR of 34.52 percent. Behind that number sits a more interesting shift: tutoring, lesson planning, assessment, and content generation, once treated as separate purchases, are collapsing into single-vendor platforms. This report dissects five tools driving that consolidation (Khanmigo, Duolingo Max, MagicSchool AI, Coursera Coach, and Carnegie Learning MATHia) using verifiable adoption data, pricing, and performance benchmarks, along with the trade-offs each carries inside real classrooms and learner workflows.

Market Inflection: Where the Capital Is Concentrating

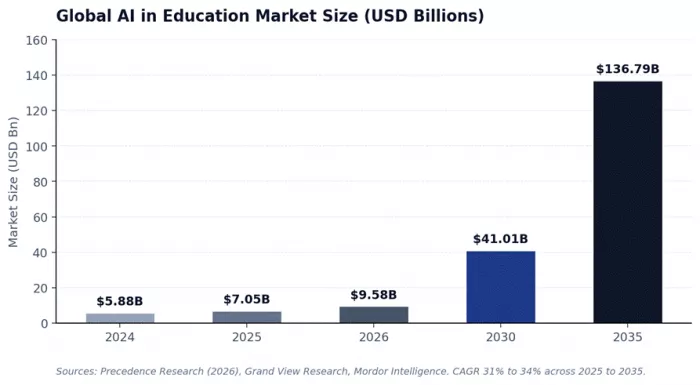

Three independent research houses converge on the same direction even where the absolute numbers diverge. Grand View Research pegs 2024 at USD 5.88 billion with a 31.2 percent CAGR through 2030. Mordor Intelligence places 2025 at USD 6.90 billion and forecasts USD 41.01 billion by 2030 at a 42.83 percent CAGR. IMARC Group projects USD 75.1 billion by 2033. The variance is methodological, not directional. North America held 38 percent of 2025 revenue, and the solutions segment, software rather than services, captured over 72 percent of share. Higher education led end-use; learning platforms and virtual facilitators alone took more than 47 percent of application share.
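The growth rates quoted above can be sanity-checked with the standard compound-annual-growth-rate formula, CAGR = (end / start)^(1 / years) - 1. The following is a minimal sketch: the function names are illustrative, and the period lengths (10 years for 2025-2035, 5 years for 2025-2030) are an assumption about how each research house counts its forecast window.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in USD billions.
precedence = cagr(7.05, 136.79, 2035 - 2025)
mordor = cagr(6.90, 41.01, 2030 - 2025)
print(f"Precedence Research implied CAGR: {precedence:.2%}")
print(f"Mordor Intelligence implied CAGR: {mordor:.2%}")
```

Both implied rates land within rounding of the published 34.52 and 42.83 percent, which suggests the divergence between forecasts comes from base-year sizing and horizon rather than from arithmetic.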

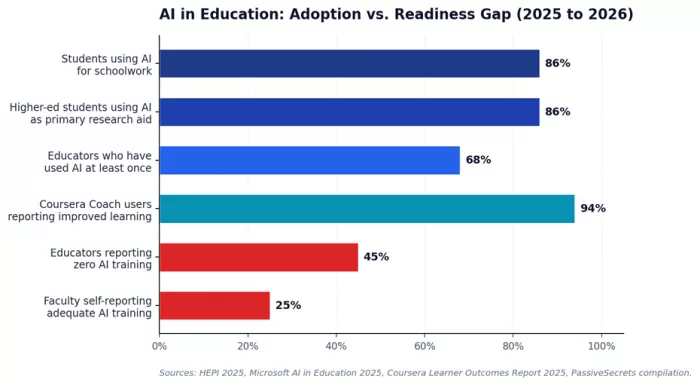

On the demand side, the readiness gap is the more important signal for buyers. According to a HEPI 2025 survey and Microsoft data compiled in early 2026, 86 percent of higher-education students now use AI as their primary research and brainstorming partner, yet only 25 percent of educators worldwide say they have been adequately trained to use it in curriculum, and 45 percent of educators report zero formal AI training. That gap is precisely what platform vendors are pricing into their school and district contracts.

Khanmigo: Socratic Constraint as a Pedagogical Moat

Architecture and Operating Logic

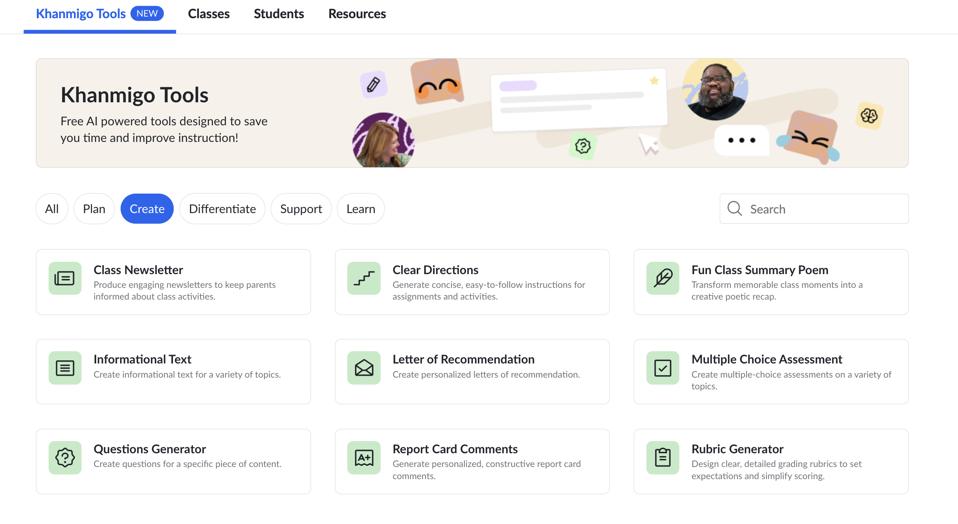

Khanmigo is a wrapper around OpenAI's GPT-4 stack, fine-tuned and grounded against Khan Academy's content library and exercise graph. Its design constraint, refusal to surface direct answers, is not a model property but a system-prompt and orchestration choice maintained by Khan Academy. When a learner asks for a solution, Khanmigo classifies the request, retrieves the relevant lesson context, and then responds through a Socratic chain: a hint, a counter-question, or a worked-step prompt. Image input was added in July 2025 for math and science, and Canvas, Google Classroom, and Schoology integrations went live in August 2025 via Clever. The teacher-facing build adds rubric generation, exit-ticket creation, lesson hooks, and student-progress summaries pulled directly from Khan Academy mastery data, an integration general-purpose chatbots cannot replicate.
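The classify, retrieve, respond flow described above can be sketched in a few lines. This is an illustration only, not Khan Academy's implementation: the lesson store, the keyword lists, and every function and class name below are hypothetical, and a production system would use a learned intent model and an LLM call where this sketch uses string matching and templates.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical lesson store; a real system would query a content library.
LESSONS = {
    "quadratic": "Quadratic equations can be solved by factoring, "
                 "completing the square, or the quadratic formula.",
    "photosynthesis": "Photosynthesis converts light energy, water, "
                      "and CO2 into glucose and oxygen.",
}

# Hypothetical phrases that signal the learner wants the final answer.
ANSWER_SEEKING = ("what is the answer", "just tell me", "solve this", "give me the answer")


@dataclass
class TutorReply:
    kind: str  # "hint", "counter_question", or "worked_step"
    text: str


def classify(message: str) -> str:
    """Crude keyword classifier standing in for a learned intent model."""
    lowered = message.lower()
    if any(phrase in lowered for phrase in ANSWER_SEEKING):
        return "answer_request"
    if "stuck" in lowered or "next step" in lowered:
        return "stuck"
    return "exploration"


def retrieve(message: str) -> Optional[str]:
    """Return the first lesson whose topic keyword appears in the message."""
    lowered = message.lower()
    for topic, context in LESSONS.items():
        if topic in lowered:
            return context
    return None


def respond(message: str) -> TutorReply:
    """Pick a Socratic move based on intent; never return a final answer."""
    intent = classify(message)
    context = retrieve(message) or "No matching lesson found."
    if intent == "answer_request":
        return TutorReply("counter_question", f"What have you tried so far? Recall: {context}")
    if intent == "stuck":
        return TutorReply("worked_step", f"Let's do only the next step together. Recall: {context}")
    return TutorReply("hint", f"Here is something to consider: {context}")


print(respond("Just tell me the answer to this quadratic").kind)
```

The point of the sketch is that the refusal lives in `respond`, outside the model: the same underlying LLM could answer directly, and the orchestration layer decides that it will not.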

Measured Performance

Common Sense Media awarded Khanmigo a 4 out of 5 rating in its AI Risk evaluation, the highest among general-purpose education chatbots in its 2024 review cycle, citing transparency, privacy, and learning design. Khan Academy reports the parent platform has surpassed 150 million learners globally. Independent classroom observation has been thinner than for legacy tools like MATHia, but verified G2 reviews place Khanmigo at 4.5 out of 5 across 178 reviews, with Capterra at 4.7 out of 5 across 32 reviews. On consumer review aggregator Sitejabber, the parent Khan Academy product (which captures Khanmigo feedback) sits between 2.8 and 3.8 stars, indicating polarized retail sentiment.

Where It Breaks

Three failure modes recur. First, the Socratic constraint creates friction for time-pressured students; testers across multiple parent reviews report children abandoning sessions because they wanted answers, not coaching. Second, content depth is uneven: math, physics, chemistry, and economics are robust, while humanities, art, and advanced college subjects are thinly covered. Third, Khanmigo only operates inside Khan Academy's curriculum, so homework from non-aligned textbooks falls outside its anchored context, increasing hallucination risk. Khan Academy itself acknowledged early math and bias issues in its first deployment cycle.

Real-World Deployment

Khanmigo's strongest traction is with U.S. school districts seeking a low-cost, vetted AI layer; teachers receive free access in supported countries via philanthropic underwriting, and districts handle student access through institutional partnerships. Homeschooling parents represent the second cohort, drawn by pricing of USD 4 per month for a parent subscription that covers up to 10 child accounts. Adult self-learners use it most for AP test prep, college-essay critique, and SAT/ACT preparation.

Ideal User Profile

Recommended for K-12 students working inside Khan Academy's existing math and science curriculum, homeschool families with children aged 8-17, and individual teachers in Title I or under-resourced districts. Avoid if you need humanities-heavy support, multi-curriculum flexibility, or instant-answer help for time-sensitive homework.

▸ Table 1. Khanmigo plan and pricing structure (verified as of 2026)

| Plan | Price | Best For | What's Included |
|---|---|---|---|
| Khanmigo for Teachers | Free (philanthropy-funded) | Individual classroom teachers | Lesson planning, rubrics, exit tickets, student-work summaries |
| Khanmigo Learner / Parent (monthly) | USD 4 / month | Self-paced learners, parents | Tutor mode, writing coach, debate, coding sandbox, up to 10 child seats |
| Khanmigo Learner / Parent (annual) | USD 44 / year | Long-term learners | Same as monthly with ~8% effective discount |
| Khanmigo for Districts | Custom (institutional) | School districts, schools | Classroom rostering, moderation, admin dashboards, district SSO |

Source: Khanmigo official pricing page (khanmigo.ai/pricing); access requires U.S. billing address.
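The "effective discount" column in pricing tables like the one above is simple arithmetic: the annual price compared against twelve monthly payments. A minimal sketch, with an illustrative function name, using the Khanmigo figures from Table 1:

```python
def annual_discount(monthly_price: float, annual_price: float) -> float:
    """Fractional saving of an annual plan versus paying monthly for 12 months."""
    if monthly_price <= 0:
        raise ValueError("monthly_price must be positive")
    return 1 - annual_price / (12 * monthly_price)


# Khanmigo Learner / Parent: USD 4 per month versus USD 44 per year.
khanmigo = annual_discount(4.00, 44.00)
print(f"Khanmigo annual plan saves {khanmigo:.1%} versus 12 monthly payments")
```

USD 44 against 12 × USD 4 = USD 48 is a saving of about 8.3 percent, matching the table's rounded figure. The same function applies to any monthly-versus-annual pair quoted elsewhere in this report.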

▸ Table 2. Khanmigo vs. ChatGPT Edu vs. Quizlet Q-Chat (feature parity)

| Feature | Khanmigo | ChatGPT Edu | Quizlet Q-Chat |
|---|---|---|---|
| Underlying model | GPT-4 (educationally fine-tuned) | GPT-4o, GPT-4 Turbo | ChatGPT API (GPT-3.5/4 era) |
| Curriculum integration | Native (Khan Academy) | None | Quizlet sets only |
| Refuses direct answers | Yes (by design) | No | Configurable |
| LMS integration | Canvas, Google Classroom, Schoology (via Clever) | Limited | Limited |
| FERPA / COPPA compliance | Yes | Yes (Edu tier) | Partial |
| Image / handwriting input | Yes (math/science) | Yes | No |
| Pricing (per learner) | USD 4 / month | ~USD 20+ / month | USD 7.99 / month (Plus) |

▸ Table 3. Khanmigo: strengths vs. weaknesses

| Strengths | Weaknesses |
|---|---|
| Among the lowest-priced AI tutors at USD 4 / month for full learner access | Refusal to give direct answers frustrates students under deadline pressure |
| Direct integration with Khan Academy's exercise graph reduces hallucination | Curriculum depth uneven beyond math, science, economics, and CS |
| Common Sense Media 4/5 AI rating outranks ChatGPT and Bard for K-12 use | Cannot help with homework from non-Khan curricula without context loss |
| Free institutional access for teachers in supported regions | U.S. billing address required for parent/learner subscriptions |
| Strong privacy guardrails (COPPA, FERPA, district data agreements) | Limited support for advanced college-level coursework |

▸ Table 4. Khanmigo: third-party ratings snapshot

| Platform | Score | Reviews | Summary Insight |
|---|---|---|---|
| G2 | 4.5 / 5 | 178 | Educators value the Socratic depth and free teacher tier |
| Capterra | 4.7 / 5 | 32 | Heavy emphasis on time savings for lesson planning |
| Common Sense Media | 4 / 5 | Editorial | Top-tier ranking for AI education products on safety and learning design |
| Sitejabber (Khan Academy parent) | 2.8-3.8 / 5 | 88+ | Polarized; complaints often about parent-platform issues, not Khanmigo specifically |

Duolingo Max: Generative AI as a Premium Tier Lever

Architecture and Operating Logic

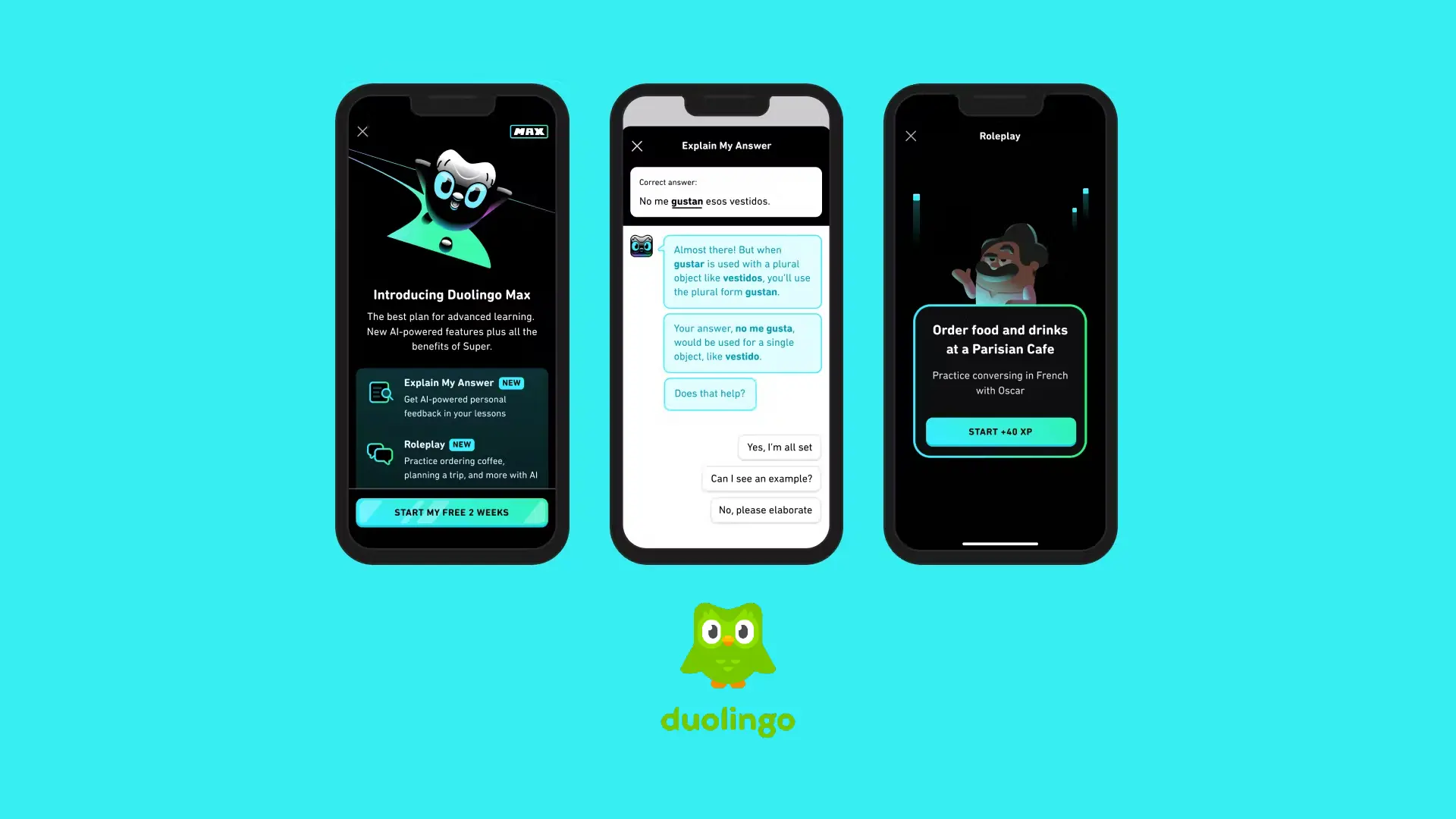

Duolingo Max layers two GPT-4-driven features, Roleplay and Video Call (with characters Lily and Falstaff), on top of the Super Duolingo experience. Roleplay scenarios are written by human curriculum experts who define the opening prompt and conversation goals; GPT-4 then drives turn-by-turn dialogue while a content review pipeline checks for factual drift and tone. Video Call adds latency-optimized voice-to-voice exchanges. Behind the consumer-facing AI sits Birdbrain, Duolingo's proprietary in-house model that drives adaptive lesson selection and personalization at the exercise level. Generative AI has also collapsed Duolingo's content production cost; CEO Luis von Ahn has said roughly 100 percent of new lesson content can now be machine-generated and human-curated, accelerating launches into smaller languages.

Measured Performance

Duolingo's AI bet shows up directly in the top line. Daily active users grew 51 percent year-over-year following the Max rollout, hitting 34 million in early 2024 and reaching 52.7 million by Q4 2025. Paid subscribers reached 10.9 million in Q2 2025 (up 37 percent YoY), generating quarterly revenue of USD 252.3 million. Approximately 8 percent of paid subscribers are on Max, but they generate 12-16 percent of subscription revenue. Since launch, Duolingo has reported a USD 516 million top-line lift attributed to Max, with stock appreciation of roughly 200 percent. Independent learner-experience research is thinner; testers report that 5-7 minute Roleplay sessions feel scripted compared with longer conversational drills from competitors like Copycat Cafe.
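The subscriber-share and revenue-share figures above imply how much more a Max subscriber pays than other paid subscribers. A short worked calculation makes that explicit; the function name is illustrative, and the sketch assumes the quoted shares refer to the same subscriber base and the same period.

```python
def arpu_multiple(subscriber_share: float, revenue_share: float) -> float:
    """Average revenue per premium subscriber divided by average revenue per
    non-premium paid subscriber, given the premium tier's two shares."""
    if not (0 < subscriber_share < 1 and 0 < revenue_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    premium_arpu = revenue_share / subscriber_share
    other_arpu = (1 - revenue_share) / (1 - subscriber_share)
    return premium_arpu / other_arpu


low = arpu_multiple(0.08, 0.12)   # 8% of subscribers, 12% of revenue
high = arpu_multiple(0.08, 0.16)  # 8% of subscribers, 16% of revenue
print(f"Implied Max revenue multiple: {low:.2f}x to {high:.2f}x")
```

On these inputs a Max subscriber is worth roughly 1.6 to 2.2 times a non-Max paid subscriber, which is the willingness-to-pay premium the revenue mix is pointing at.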

Where It Breaks

Conversation length is the structural weakness. Roleplay sessions are capped short to control GPT-4 inference costs, and pronunciation feedback remains limited compared with dedicated speech-tuned tools like ELSA Speak. Coverage is also asymmetric: full Roleplay and Video Call exist for Spanish, French, German, Italian, and Portuguese for English speakers, while Japanese, Korean, and Chinese learners get Video Call but no Roleplay. Explain My Answer, originally a paid Max feature, was made free in January 2026, leaving Max as, in effect, a USD 168-per-year charge for two features.

Real-World Deployment

Max sits at the top of a clear progression: Free (with energy limits), Super Duolingo (mid-tier), Max (premium). Early-career professionals studying for travel or work and intermediate learners (B1-B2 CEFR) preparing for conversation are the dominant cohorts. Schools and corporate training rarely deploy Max directly; they buy Super Duolingo licenses or use the Duolingo English Test for proficiency screening.

Ideal User Profile

Recommended for intermediate language learners studying Spanish, French, German, Italian, or Portuguese who want low-friction conversation reps. Avoid if you need certified pronunciation grading, require non-European target languages, or already have access to a tutor.

▸ Table 5. Duolingo plan structure (US, 2026)

| Plan | Price | Core Features | AI Features |
|---|---|---|---|
| Free | Free (ad-supported) | Full curriculum, all 148 courses, energy limit (25) | Birdbrain adaptive lessons |
| Super Duolingo | USD 13.99 / mo or ~USD 84 / yr | No ads, unlimited energy, mistakes review | Birdbrain + Explain My Answer (since Jan 2026) |
| Duolingo Max | USD 29.99 / mo or USD 168 / yr | All Super features | Roleplay, Video Call with Lily, Video Call with Falstaff |
| Super Family | ~USD 120 / yr (up to 6) | Super for households | Same as Super tier |

Source: Duolingo App Store listings, TechCrunch (March 2023 launch), CheckThat.ai 2026 pricing audit.

▸ Table 6. Duolingo Max vs. Babbel Live vs. Pimsleur Premium

| Feature | Duolingo Max | Babbel Live | Pimsleur Premium |
|---|---|---|---|
| Annual price | USD 168 | USD 599 (group lessons) | USD 240 |
| AI conversation partner | Yes (GPT-4 Roleplay + Video) | No (human tutors) | Yes (AI Voice Coach) |
| Pronunciation grading | Limited | Human-corrected | Strong (legacy audio focus) |
| Languages with full AI | 5 (ES, FR, DE, IT, PT) | 14 with human tutors | 50+ with audio focus |
| Free tier | Yes (Free Duolingo) | No | 7-day trial only |
| Best for | Casual learners, gamification | Mid-level conversation drills | Audio-first commuter learning |

▸ Table 7. Duolingo Max: strengths vs. weaknesses

| Strengths | Weaknesses |
|---|---|
| Conversation practice without a human tutor at one-tenth the cost | Roleplay sessions short and scripted; not equivalent to human tutoring |
| Tight integration with proven gamification keeps retention high | Asymmetric coverage: no Roleplay yet for Japanese, Korean, Mandarin |
| Generative content pipeline lets Duolingo expand languages faster | Pronunciation feedback weaker than ELSA Speak or Speak |
| 8% of paying base now generates 12-16% of subscription revenue, validating premium AI willingness-to-pay | After Explain My Answer became free, Max value proposition narrowed |

▸ Table 8. Duolingo: third-party ratings snapshot

| Platform | Score | Reviews | Summary Insight |
|---|---|---|---|
| G2 (Duolingo) | 4.6 / 5 | 375+ | Praised for engagement; some complaints about ads in free tier |
| Capterra | 4.7 / 5 | 1,200+ | Strong reviews for ease of use and habit-building |
| App Store (iOS, US) | 4.7 / 5 | 1.7M+ | Massive sample size; consistent across versions |
| Google Play | 4.5 / 5 | 10M+ | Recent reviews flag aggressive monetization friction |

MagicSchool AI: Workflow Compression for K-12 Teachers

Architecture and Operating Logic

MagicSchool routes requests across multiple foundation models, OpenAI, Anthropic, and Google, choosing the cheapest model that meets quality bars for each task type. The platform exposes more than 80 specialized teacher tools (lesson plans, rubrics, IEP drafts, quiz generators, parent emails, exit tickets) and 50-plus student-facing tools accessed only through teacher-controlled rooms. The Knowledge module, expanded for Enterprise customers in 2026, lets districts upload curriculum guides, rubrics, and policies once; downstream tools then condition outputs on that institutional context, eliminating prompt-rebuilding across teachers. MagicQuizzes, launched in early 2026, generates standards-aligned multiple-choice and short-answer assessments with immediate student feedback and aggregated class-level summaries for the teacher.
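The "cheapest model that meets the quality bar" rule described above can be expressed as a small selection function. This is a sketch under stated assumptions, not MagicSchool's router: the model labels, per-token costs, quality scores, and thresholds below are all invented placeholders, and a real router would derive quality scores from evaluation data.

```python
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str                  # placeholder label, not a real model ID
    cost_per_1k_tokens: float  # illustrative USD figure
    quality: dict              # task type -> score in [0, 1], made up for this sketch


CATALOG = [
    ModelOption("provider-a-small", 0.0005,
                {"parent_email": 0.80, "lesson_plan": 0.70, "iep_draft": 0.55}),
    ModelOption("provider-b-medium", 0.0030,
                {"parent_email": 0.90, "lesson_plan": 0.85, "iep_draft": 0.75}),
    ModelOption("provider-c-large", 0.0150,
                {"parent_email": 0.95, "lesson_plan": 0.92, "iep_draft": 0.90}),
]

# Hypothetical minimum acceptable quality per task type.
QUALITY_BAR = {"parent_email": 0.75, "lesson_plan": 0.80, "iep_draft": 0.88}


def route(task_type: str) -> ModelOption:
    """Return the cheapest catalog model whose score clears the task's bar."""
    bar = QUALITY_BAR[task_type]
    eligible = [m for m in CATALOG if m.quality.get(task_type, 0.0) >= bar]
    if not eligible:
        raise LookupError(f"no model meets the quality bar for {task_type!r}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


for task in QUALITY_BAR:
    print(task, "->", route(task).name)
```

With these placeholder numbers, low-stakes tasks such as parent emails fall to the cheapest model while IEP drafts are sent to the most capable one, which is the cost-versus-quality trade the routing layer exists to make.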

Measured Performance

MagicSchool reports more than 6 million educators and students using the platform as of early 2026, with major U.S. districts including Seattle Public Schools and Aurora Public Schools (Colorado) deploying it system-wide. Aurora reported a 28 percent increase in students meeting literacy goals after district-wide MagicSchool rollout. Mordor Intelligence singled out MagicSchool as an AI-native disruptor reporting a 28 percent outcome improvement and 88 percent satisfaction rate. Internal MagicSchool surveys cite teacher time savings of seven or more hours per week on planning, differentiation, assessments, and communication. Independent third-party RCT validation has not yet appeared in peer-reviewed journals.

Where It Breaks

Three structural concerns. First, breadth comes at the cost of depth: with 80-plus tools spanning planning, grading, and communication, no single workflow is as deep as a specialist tool (essay-grading platforms like CoGrader or Gradescope outperform MagicSchool's writing feedback at scale). Second, district-wide rollouts depend on teacher buy-in; tools save time only if teachers actually adopt them, and rollout fatigue is a documented adoption barrier. Third, MagicSchool's privacy posture is strong (FERPA, COPPA, GDPR, SOC 2 Type 2), but moderation is still LLM-based and can occasionally over-filter legitimate classroom content.

Real-World Deployment

Hillsborough County Schools (Florida) reported district-wide use across the 2025-2026 school year, with teachers using MagicSchool to convert raw text into charts for presentations, generate worksheets, and draft parent communications. Aurora Public Schools tied platform adoption to its literacy intervention program. The typical teacher workflow: open MagicSchool first thing Monday morning, generate a week of lesson plans and an exit ticket, then refine outputs against district standards stored in the Knowledge module.

Ideal User Profile

Recommended for K-12 districts and schools seeking a single AI layer across instructional, administrative, and communication tasks, and for teachers in resource-constrained schools who handle lesson planning and assessment alone. Avoid if you need a deep, specialist grading workflow or if your district's primary need is student-facing tutoring.

▸ Table 9. MagicSchool pricing tiers (2026)

| Plan | Price | Target | Limits and Inclusions |
|---|---|---|---|
| Free | USD 0 | Individual teachers exploring AI | Core tools, standard usage limits, basic support |
| Plus | USD 8.33 / month (annual) or USD 12.99 / month | Frequent individual users | Expanded usage limits, MagicQuizzes preview, extra features |
| Enterprise | Custom (district-level) | Schools and districts | SSO, district dashboards, custom privacy agreements, Knowledge module, implementation support |

Source: MagicSchool official pricing page; EduSageAI institutional pricing analysis (Apr 2026).

▸ Table 10. MagicSchool vs. Khanmigo (teacher tools) vs. Google Gemini for Education

| Feature | MagicSchool AI | Khanmigo for Teachers | Gemini for Education |
|---|---|---|---|
| Number of teacher tools | 80+ | ~15 | General-purpose chat |
| Multi-LLM routing | Yes (OpenAI, Anthropic, Google) | GPT-4 only | Gemini only |
| Curriculum library | Bring-your-own (Knowledge module) | Khan Academy native | Workspace integrations |
| Student-facing rooms | 50+ tools, teacher-moderated | Limited | Yes via Workspace |
| Student data training opt-out | Yes (no training on user data) | Yes | Yes (Edu tier) |
| Pricing model | Free / USD 8.33 / Custom | Free for teachers | Bundled with Workspace |

▸ Table 11. MagicSchool: strengths vs. weaknesses

| Strengths | Weaknesses |
|---|---|
| Reduces teacher administrative load by 7+ hours/week (vendor-reported) | Breadth-over-depth: no specialist workflow as strong as dedicated grading tools |
| Aurora Public Schools: 28% literacy-goal improvement | Independent peer-reviewed efficacy data still limited |
| Compliant across FERPA, COPPA, GDPR, SOC 2 Type 2 | Onboarding fatigue at the district level if teachers are not pre-sold |
| Multi-model routing reduces lock-in and lets MagicSchool optimize per task | Outputs require teacher review; not safe to publish unedited |
| Free tier genuinely usable; teachers can adopt without budget approval | MagicQuizzes still in preview pricing through June 2026 |

▸ Table 12. MagicSchool AI: third-party ratings snapshot

| Platform | Score | Reviews | Summary Insight |
|---|---|---|---|
| G2 | 4.7 / 5 | 115+ | Educators repeatedly cite time savings as the dominant benefit |
| Capterra | 4.8 / 5 | 70+ | Praised for breadth of tools and ease of onboarding |
| Common Sense Education | Recommended | Editorial | Endorsed for K-12 with privacy-first design |
| Product Hunt (launch) | 4.9 / 5 | Top product of week, 2023 | Strong educator-driven momentum at launch |

Coursera Coach: Embedded GenAI Inside Higher-Ed Pathways

Architecture and Operating Logic

Coursera Coach is a generative-AI tutor grounded in Coursera's expert-authored content. Coach retrieves video transcripts, lecture readings, and exercises from the active course, then composes answers anchored to that material. It runs three distinct interaction modes: Coach for Learning Assistance (in-course Q&A and explanations), Coach for Career Guidance (embedded in Coursera's career platform), and the newer Coach for Interactive Instruction, in which authors can configure dialogue-based teaching, role plays, and AI-graded open-ended assessments. Behind the scenes, Coursera Course Builder uses the same generative engine to translate, summarize, and structure course content; the platform now translates 4,000-plus courses into more than 25 languages.
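The grounding step described above, retrieving course material first and composing the answer only from what was retrieved, follows the general retrieval-augmented pattern. The sketch below illustrates that pattern with plain word overlap; it is not Coursera's implementation, the passages and stopword list are invented, and a production system would use embedding search and an LLM call instead of the string prompt built here.

```python
import re
from collections import Counter

# Hypothetical course snippets standing in for transcripts and readings.
COURSE_PASSAGES = [
    ("Week 1 video", "Gradient descent updates parameters in the direction that reduces the loss."),
    ("Week 2 reading", "Overfitting happens when a model memorizes training data and fails to generalize."),
    ("Week 3 video", "Regularization adds a penalty on large weights to reduce overfitting."),
]

STOPWORDS = {"the", "a", "an", "is", "to", "in", "on", "and", "of", "what", "how", "that"}


def tokenize(text):
    """Lowercase word counts with common stopwords removed."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def retrieve(question, passages, top_k=2):
    """Rank passages by shared-word count; drop passages with no overlap."""
    q = tokenize(question)
    scored = []
    for source, text in passages:
        overlap = sum((q & tokenize(text)).values())
        if overlap:
            scored.append((overlap, source, text))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(source, text) for _, source, text in scored[:top_k]]


def build_prompt(question, passages):
    """Compose a prompt anchored to retrieved material, or None if nothing matches."""
    hits = retrieve(question, passages)
    if not hits:
        return None  # nothing in the course to anchor to
    context = "\n".join(f"[{source}] {text}" for source, text in hits)
    return f"Answer using only the course material below.\n{context}\nQuestion: {question}"


print(build_prompt("What is overfitting?", COURSE_PASSAGES))
```

Returning `None` when nothing matches mirrors the walled-garden behavior: a tutor grounded this way has nothing to say about material outside the active course.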

Measured Performance

Coursera reported in late 2025 that Coach has supported over one million learners since launch, with Coach users showing a 9.5 percent higher quiz pass rate on supported courses. The 2025 Learner Outcomes Report (52,000-plus learners across 179 countries) found that 94 percent of Coach users said it improved their learning experience, citing easier comprehension, more engagement, and better retention. Coursera Coach won the Newsweek AI Impact Award in the AI Education: Best Outcomes, Commercial Learning category in 2025. Coursera's parent platform passed 175 million registered learners by Q1 2025.

Where It Breaks

Coach is anchored to Coursera courses. Learners outside that walled garden cannot use it for general study. Quality also degrades on legacy courses with thin transcripts or minimal supplemental material; Coursera has been retrofitting older content but the inconsistency is visible. Career coaching is helpful for general career framing but lacks the labor-market specificity of dedicated tools like LinkedIn's job recommendation engine. Coursera has not published full disaggregated efficacy data by demographic group, and the 9.5 percent pass-rate lift, while meaningful, is a self-reported aggregate, not a peer-reviewed RCT.

Real-World Deployment

Three primary cohorts use Coach. First, individual learners using Coursera Plus (the consumer subscription) for upskilling, particularly in data, AI, and business. Second, Coursera for Business and Coursera for Government customers, who deploy Coach across employee learning programs; the 2025 report shows 94 percent of learners in emerging economies achieve at least one positive career outcome, with Coach contributing to comprehension. Third, university and degree partners are embedding Coach into stackable credentials and degrees, with role-play and AI-graded assessment capabilities now reaching select Coursera authors.

Ideal User Profile

Recommended for working professionals upskilling on Coursera courses, enterprise L&D leaders running Coursera for Business deployments, and learners in emerging economies where Coach's translation and explanation features compound value. Avoid if your study is primarily off-platform or if you need an open-ended general AI tutor.

▸ Table 13. Coursera plans where Coach is available

| Plan | Price (US) | Coach Access | Best For |
|---|---|---|---|
| Free audit (per course) | Free (no certificate) | Limited to free courses | Curiosity-driven learners |
| Coursera Plus (monthly) | USD 59 / month | Full Coach across catalog | Multi-course self-paced learners |
| Coursera Plus (annual) | USD 399 / year | Full Coach across catalog | Year-long upskillers (~44% saving vs. 12 monthly payments) |
| Coursera for Business | Custom (per seat) | Full Coach + admin reporting | Corporate L&D programs |
| Degrees / MasterTrack | USD 9,000-USD 45,000 | Coach in supported courses | Credit-bearing learners |

Source: Coursera consumer pricing pages; investor.coursera.com Q4 2025.

▸ Table 14. Coursera Coach vs. edX Xpert vs. Udacity Mentor (AI tutor benchmarks)

| Feature | Coursera Coach | edX Xpert | Udacity (Accenture) |
|---|---|---|---|
| Underlying model approach | Grounded LLM on course content | OpenAI-based learner assistant | AI mentor + human review |
| Reported learner uplift | 9.5% higher quiz pass rate | Not publicly disclosed | Career-program completion focus |
| Career guidance | Yes (Coach for Career) | Limited | Strong (mentor-led nano-degrees) |
| AI-graded assessments | Yes (rolling out 2026) | Limited | Yes (project rubrics) |
| Free tier | Limited (audit-only) | Yes (some courses) | No |
| Strongest content vertical | Data, AI, business, tech | MIT/Harvard professional | AI engineering, data science |

▸ Table 15. Coursera Coach: strengths vs. weaknesses

| Strengths | Weaknesses |
|---|---|
| 94% of users report improved learning experience (Harris Poll, n=52,000+) | Walled-garden: cannot help with non-Coursera study |
| 9.5% higher quiz pass rate on supported courses | Quality varies on older courses with thin transcripts |
| Newsweek AI Impact Award 2025 (Best Outcomes, Commercial Learning) | Career-coaching depth lower than specialist labor-market tools |
| Multilingual support across 25+ languages and 4,000+ courses | Self-reported metrics; no public peer-reviewed RCT |
| Embedded directly inside lesson video and reading flow | Coursera Plus required to unlock full Coach catalog access |

▸ Table 16. Coursera Coach: third-party ratings snapshot

| Platform | Score | Reviews | Summary Insight |
|---|---|---|---|
| G2 (Coursera) | 4.5 / 5 | 335+ | Strong reviews for catalog breadth; some flag uneven course quality |
| Capterra (Coursera) | 4.5 / 5 | 120+ | Praised for credentialing value and corporate use |
| Trustpilot (Coursera) | 2.6 / 5 | 5,000+ | Polarized: refunds and certificate issues dominate negative reviews |
| Newsweek AI Impact Award | Winner 2025 | Editorial panel | Commercial-learning category, citing measurable outcomes |

Carnegie Learning MATHia: Pre-LLM Cognitive Tutoring at Scale

Architecture and Operating Logic

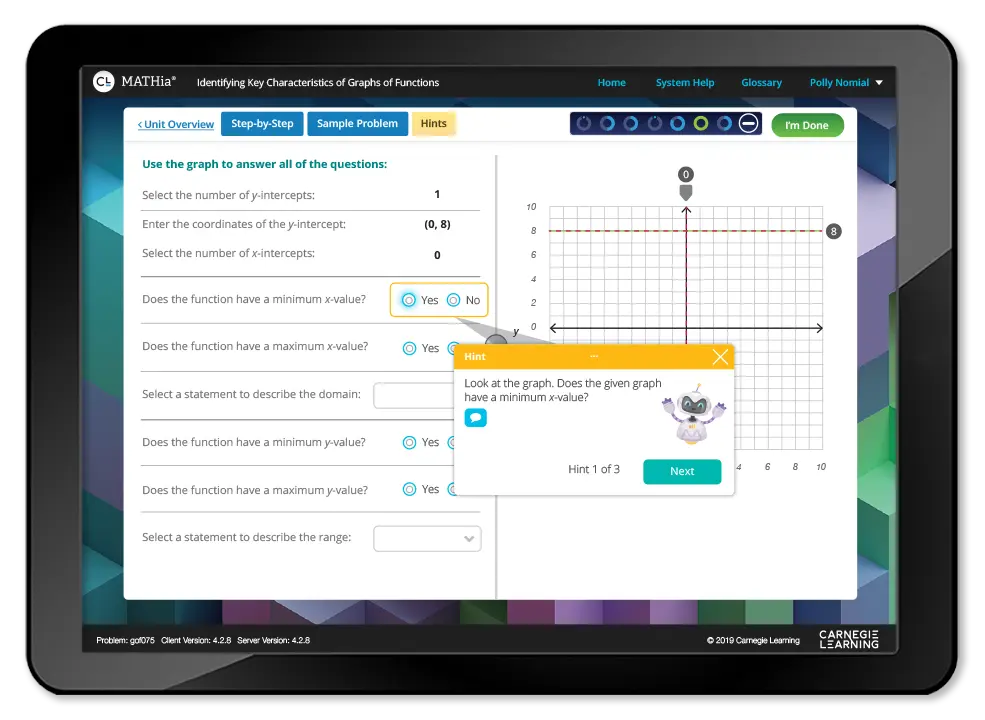

MATHia is the longest-running AI tutoring system in U.S. K-12 education, descended from work at Carnegie Mellon's intelligent tutoring lab in the 1980s. It is not a generative-AI product. It runs a cognitive model of mathematics expertise, encoding skill-by-skill knowledge components and inferring student mastery from problem-solving trajectories rather than just final answers. The system, used in grades 6-12, presents tailored exercises, identifies misconceptions, and routes students to targeted practice. The companion teacher app, LiveLab, applies machine learning to flag students who are idle, off-pace, or stuck, in real time during a class period, and was named Best Use of AI in Education at the 2019 EdTech Breakthrough Awards. The APLSE (Adaptive Personalized Learning Score) engine forecasts end-of-year state-test performance from in-platform behavior.
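Inferring mastery of a knowledge component from a sequence of problem-solving steps is commonly illustrated with Bayesian Knowledge Tracing (BKT), a technique that grew out of the cognitive tutor research tradition. The sketch below shows a standard BKT update; the source does not state which algorithm MATHia runs, so treat this as an example of the general approach, with made-up parameter values and illustrative names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SkillParams:
    p_init: float = 0.3     # prior probability the skill is already known
    p_transit: float = 0.1  # chance of learning the skill at each opportunity
    p_slip: float = 0.1     # chance of a wrong step despite knowing the skill
    p_guess: float = 0.2    # chance of a correct step without knowing the skill


def update_mastery(p_known: float, correct: bool, params: SkillParams) -> float:
    """One BKT step: Bayesian update on the observation, then a learning transition."""
    if correct:
        evidence = p_known * (1 - params.p_slip)
        posterior = evidence / (evidence + (1 - p_known) * params.p_guess)
    else:
        evidence = p_known * params.p_slip
        posterior = evidence / (evidence + (1 - p_known) * (1 - params.p_guess))
    return posterior + (1 - posterior) * params.p_transit


def trace(observations, params: Optional[SkillParams] = None):
    """Mastery estimate after each observed step for a single skill."""
    params = params or SkillParams()
    p = params.p_init
    history = []
    for correct in observations:
        p = update_mastery(p, correct, params)
        history.append(p)
    return history


# Mastery estimate for one skill after each of five observed steps.
for step, p in enumerate(trace([True, False, True, True, True]), start=1):
    print(f"step {step}: P(known) = {p:.3f}")
```

Because each step in a solution is scored against its own skill, a model like this can separate a student who reaches the right final answer by guessing from one who has mastered the underlying components, which is the distinction the paragraph above draws.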

Measured Performance

MATHia has the strongest external validation of any tool in this report. A U.S. Department of Education-funded RAND Corporation randomized controlled trial of more than 18,000 students across 147 middle and high schools found that the Carnegie Learning blended approach (MATHbook plus MATHia) nearly doubled growth in standardized-test performance in the second year of implementation. A 2021 Student Achievement Partners study replicated improvements in middle-school Algebra 1, with the largest gains among initially under-performing students. MATHia met ESSA Level 1 (Strong Evidence) standards, the highest rigor tier in U.S. federal education research.

Where It Breaks

Three trade-offs. First, MATHia is mathematics-only, grades 6-12 (Algebra 1 to Integrated Math 3 plus pre-algebra); districts that need humanities or earlier elementary support buy other Carnegie products or different vendors. Second, MATHia is not a chatbot; students cannot type free-form questions and get conversational explanations, which feels limiting next to GPT-4-driven tools. Third, full deployment requires the MATHbook print/digital text, professional development, and teacher buy-in; Carnegie's pricing is institutional (approximately USD 45 per student per year for the Math Solution including MATHia) and not directly accessible to homeschool parents at scale.

Real-World Deployment

MATHia is deployed across thousands of U.S. middle and high schools as a core or supplemental math program. The typical pattern is 2-3 sessions per week of independent MATHia work integrated into a teacher-led classroom, with LiveLab dashboards displayed on the teacher's device for in-the-moment intervention. The APLSE score gives administrators a predictive lens before state-test season. Texas, for example, runs the Texas Math Solution, which embeds MATHia statewide.

Ideal User Profile

Recommended for U.S. middle and high school math departments, districts that need ESSA Level 1 evidence for funding, and Title I schools targeting under-performing math cohorts. Avoid if you need conversational AI, non-math support, or a tool for grades K-5 (use MATHia Adventure separately) or higher-education math.

▸ Table 17. Carnegie Learning Math Solution / MATHia (institutional pricing)

| Plan | Price | Coverage | Notes |
|---|---|---|---|
| Math Solution incl. MATHia (per student/yr) | ~USD 45 | Grades 6-12 (core) | Includes MATHbook, MATHia software, LiveLab, PD |
| MATHia supplemental license | Custom | Grades 6-12 | Used alongside other core curricula |
| MATHia Adventure (K-5) | Custom | Elementary math | Separate K-5 product line |
| State partnerships (e.g., Texas Math Solution) | State-negotiated | State-wide | Bundled with state-aligned PD and lesson materials |

Source: Carnegie Learning sales pages and third-party pricing aggregators (Speechify 2025); institutional pricing varies by district.

▸ Table 18. MATHia vs. DreamBox vs. ALEKS (adaptive math benchmarks)

| Feature | MATHia | DreamBox | McGraw Hill ALEKS |
|---|---|---|---|
| Grade range | 6-12 (K-5 separate) | K-8 | Grades 3-12, college, professional |
| Modeling approach | Cognitive skill model + LiveLab ML | Intelligent Adaptive Learning | Knowledge Space Theory |
| Strongest evidence base | RAND RCT (~2x growth, Yr 2) | Multiple efficacy studies | Multiple efficacy studies |
| Real-time teacher dashboard | LiveLab (award-winning) | Insight Dashboard | Reporting suite |
| Predictive end-of-year scoring | APLSE | Limited | Knowledge State |
| Generative-AI overlay | No (cognitive tutor) | Limited | Limited |

▸ Table 19. MATHia: strengths vs. weaknesses

| Strengths | Weaknesses |
|---|---|
| ESSA Level 1 'Strong Evidence' rating, the highest U.S. federal research tier | Mathematics only, grades 6-12 (separate K-5 product) |
| RAND RCT (n=18,000+) showed near-doubling of test-score growth in Year 2 | Not a generative chatbot; no free-form conversational queries |
| LiveLab gives teachers in-class intervention signal, not lagging reports | Institutional pricing only; homeschool families have limited entry |
| APLSE accurately predicts end-of-year state-test performance | Requires teacher PD and curriculum integration to perform |
| Deep cognitive model, not just answer-pattern matching | Less marketing visibility than newer LLM-driven tools |

▸ Table 20. MATHia: third-party ratings snapshot

| Platform | Score | Reviews | Summary Insight |
|---|---|---|---|
| G2 | 4.4 / 5 | 75+ | Teachers value step-by-step tutorials and reporting depth |
| Capterra (Carnegie Learning) | 4.4 / 5 | 60+ | Strong reviews from math teachers; some report onboarding effort |
| EdReports | All-green (perfect) on Math Solution | Editorial | Top score across Focus, Rigor, and Teacher Supports gateways |
| EdTech Breakthrough Awards | Best AI in Education (2019, LiveLab); Best AI Solution (2020, MATHia) | Editorial | Two consecutive industry awards |

Five Trends Cutting Across All Five Platforms

▎ a. The Socratic-vs-Direct-Answer Divide Is Now an Architectural Choice

Khanmigo and MATHia refuse to give direct answers by design. Coursera Coach, Duolingo Max, and most MagicSchool tools will. This is no longer a model limitation. It is a product-positioning decision and a regulatory hedge: districts increasingly require AI tools to demonstrate that they support, not substitute for, learning. Vendors that cannot prove pedagogical guardrails are losing institutional contracts.

▎ b. Multi-LLM Routing Is Replacing Single-Vendor Lock-In

MagicSchool's explicit use of OpenAI, Anthropic, and Google models per task is becoming the default for ed-tech vendors that need to balance cost, latency, and content moderation. Single-model dependency is now seen as a risk by procurement teams.

▎ c. Teacher Time Savings Is the Real Buying Trigger

MagicSchool's 7-hour-per-week claim, Khanmigo's free teacher tier, and Coursera's grading automation all converge on the same insight: teacher time is the scarcest input in education, and AI's most defensible economic case is reclaiming hours, not replacing pedagogy. The U.S. NEA reported in 2025 that 65 percent of teachers do not yet use generative AI; vendors that solve onboarding capture that cohort.

▎ d. Evidence Standards Are Bifurcating the Market

MATHia meets ESSA Level 1 evidence; most generative tools do not yet have published RCTs. As state and federal funding increasingly tie procurement to ESSA tiers, this gap will compound. New entrants will need to invest in third-party evaluations or partner with research institutions to compete for K-12 dollars.

▎ e. Privacy and Data-Training Posture Has Become Table Stakes

Every tool in this report explicitly states it does not train on student or teacher data. FERPA, COPPA, GDPR, and SOC 2 Type 2 compliance are now hygiene factors, not differentiators. Vendors without them are excluded from district RFPs before pricing is even evaluated.

Conclusion: Strategic Takeaways for Buyers and Operators

The five-year direction is clear. The market will consolidate around platforms that combine grounded content libraries, multi-LLM routing, teacher workflow tools, and verifiable outcome data. Standalone chatbots without curriculum anchors will continue to lose institutional share, even as they remain the default for casual, individual use.

For learners outside formal school systems, the opportunity is different. Platforms focused on practical AI literacy, such as Timtis, sit closer to the skills layer of the market, helping students, creators, and professionals understand how to actually use AI tools across writing, video, automation, and work. That matters because the next phase of AI education will not only be about tutoring students through existing subjects. It will also be about teaching people how to work with AI itself.

The vendors that win the next phase will not be the ones with the most features. They will be the ones with the most defensible evidence that their AI actually helps students learn faster, retain longer, build useful skills, and progress more equitably.
