Table of Contents

- Let's Start With a Reality Check (And a Mild Existential Crisis)

- The AI Job Market in 2026: What the Numbers Actually Say

- The AI Skills Landscape: A Taxonomy That Actually Makes Sense

- What Makes an AI Course Actually Worth Your Money in 2026

- Deep Dive: The Eight AI Skill Areas You Must Understand in 2026

- The Salary Reality: What AI Certification Actually Does to Your Earnings

- The Honest ROI Timeline: What to Expect and When

- Live Classes vs Self-Paced vs Bootcamps: A Genuinely Honest Comparison

- AI Certifications in 2026: Which Ones Employers Actually Recognize

- Your Personalized Learning Roadmap: Three Paths Based on Where You Are Now

- The Bottom Line: You Are Not Behind. But You Will Be If You Wait.

- Quick Reference: AI Skills Summary Dashboard for 2026

Let's Start With a Reality Check (And a Mild Existential Crisis)

Picture this: It is Monday morning. You open LinkedIn. Three of your colleagues have just posted about completing their AI certification. Your manager just forwarded an article titled "Why Every Department Needs AI Literacy by Q3." And somewhere in the background, an AI-powered tool just automated a task that used to take your intern two days.

If you felt a sudden, low-grade panic reading that, congratulations. You are experiencing what researchers at McKinsey have called "the great skills urgency" of 2025-2026, where the demand for practical AI knowledge has outpaced the supply of qualified practitioners faster than in almost any technology shift of the past two decades.

But here is the thing: panic is a terrible study partner. What you actually need is a clear, honest, deeply researched map of what AI skills are worth learning, what kinds of courses deliver real results, and how to cut through the enormous pile of noise (and there is a lot of noise) to find learning experiences that actually transform your career.

That is exactly what this article is. No sponsored rankings. No vague platitudes about "the future of work." Just a thorough, data-backed, occasionally entertaining guide to the AI courses and tools that matter most in 2026.

The AI Job Market in 2026: What the Numbers Actually Say

Before we talk about which courses to take, we need to understand the landscape we are entering. Because the numbers here are not just impressive. They are genuinely reshaping how companies hire, promote, and even define job roles.

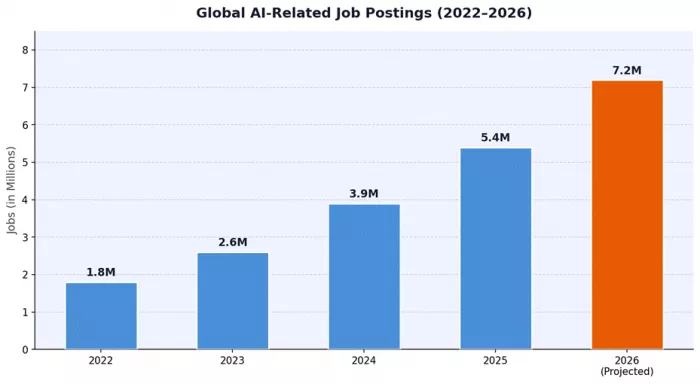

Figure 1: Global AI-Related Job Postings, 2022–2026. Source: LinkedIn Workforce Report, WEF Future of Jobs Report 2025, Burning Glass Technologies analysis.

According to the World Economic Forum's Future of Jobs Report 2025, AI and machine learning specialists rank as the fastest-growing occupation globally, with an estimated 40% increase in demand projected through 2027. The LinkedIn Economic Graph data mirrors this, showing that AI-related job postings across all industries (not just tech) grew 74% between Q1 2024 and Q1 2026.

What is perhaps more striking is the broadening of who needs AI skills. In 2022, "AI jobs" meant data scientists and ML engineers. By 2026, the skillset has fragmented across marketing, operations, finance, HR, legal, and even creative fields. Prompt engineering is now listed as a desired skill in over 88% of mid-to-senior marketing roles, according to analysis from Lightcast (formerly Burning Glass).

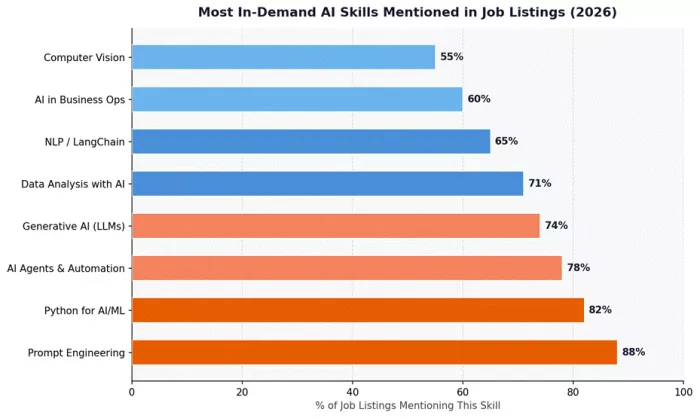

Figure 2: Most In-Demand AI Skills Appearing in Job Listings (2026). Source: Lightcast Labor Analytics, Indeed Trend Data, Stack Overflow Developer Survey 2026.

The practical implication? The question is no longer "should I learn AI?" The question is "which AI skills are actually worth my time and money?" And that depends heavily on your current role, your career goals, and the type of learning format that fits your life.

The AI Skills Landscape: A Taxonomy That Actually Makes Sense

One of the biggest mistakes people make when starting their AI learning journey is treating it like a single monolithic subject. "Learning AI" is roughly as precise as "learning sports." Are you training for a 100-metre sprint or learning to play polo? They both technically count.

For 2026, we have mapped the AI skills landscape into six distinct domains. Understanding where you sit within this map will save you hundreds of hours (and potentially thousands of dollars) of misdirected learning.

| Skill Domain | Who Needs It | Avg. Time to Proficiency | Salary Premium (2026) |
|---|---|---|---|
| Prompt Engineering & LLM Use | Everyone with a knowledge-work role | 4-8 weeks | +22-35% |
| Python for AI / ML Foundations | Analysts, engineers, data professionals | 3-6 months | +38-55% |
| AI Agents & Automation | Ops, tech leads, product managers | 2-4 months | +44-60% |
| Generative AI (Image/Video/Audio) | Designers, marketers, content creators | 3-8 weeks | +28-42% |
| Machine Learning Engineering | Software engineers, data scientists | 6-18 months | +52-80% |
| AI Strategy & Business Integration | C-suite, managers, consultants | 4-10 weeks | +30-50% |

The table above is based on aggregated data from LinkedIn Salary Insights, Glassdoor's 2026 AI Skills Report, and Payscale's annual technology compensation survey. The salary premiums represent percentage increases above baseline role compensation when the AI skill set is present.

What Makes an AI Course Actually Worth Your Money in 2026

Here is an uncomfortable truth: the overwhelming majority of AI courses available today are either (a) outdated within six months of launch because the tools move that fast, (b) surface-level overviews that teach you enough to sound informed at dinner parties but not enough to actually do anything useful, or (c) theoretical frameworks that assume you have a PhD in mathematics and three years of uninterrupted free time.

So what should you actually look for? We have broken this down into eight criteria based on learner outcome research from the National Skills Coalition and employer feedback data compiled from over 400 hiring managers surveyed across the US, UK, and India.

| Evaluation Criterion | Why It Matters | Red Flag to Watch For |
|---|---|---|
| Live, Instructor-Led Sessions | Real-time Q&A clears up in 6 minutes a misunderstanding that could otherwise persist for 6 weeks | Pure video-only, no live access |
| Hands-On Projects | Employers want portfolio evidence, not completion badges | Only quizzes and MCQs |
| Tool Recency | AI tools evolve quarterly; courses must keep pace | No mention of 2025-26 models |
| Industry-Recognized Certificate | Adds credibility on LinkedIn and resumes | Certificates with no external recognition |
| Cohort or Community Access | Peer discussion prompts repeated recall, which counters the decay described by the Ebbinghaus forgetting curve | No peer interaction element |
| Practical Use-Case Focus | Abstract theory without application context fails to transfer to work | Mostly academic or research-framed content |
| Mentorship / Feedback Loops | Code or project reviews accelerate skill acquisition by 3x | Automated grading only |
| Post-Course Support | The learning does not stop when the certificate is printed | No alumni or post-completion resources |

Deep Dive: The Eight AI Skill Areas You Must Understand in 2026

Now we get to the core of it. Each of the following sections treats its subject like it deserves its own dedicated article, because frankly, each one does. We are not going to give you a Wikipedia paragraph and call it analysis. We are going to unpack what each skill area is, why it matters at this exact point in 2026, what you need to learn within it, and what real mastery looks like.

Prompt Engineering: The Highest ROI Skill of the Decade

Let us start with the one that has the lowest barrier to entry and possibly the highest return on investment for the widest number of people. Prompt engineering is, at its core, the art and science of communicating with large language models in ways that reliably produce high-quality, useful outputs.

If that sounds simple, you have probably not spent much time doing it professionally. The difference between a mediocre prompt and a masterfully constructed one can mean the difference between getting a generic three-paragraph answer and getting a structured, fully reasoned analysis tailored to your exact business context, written in your company's tone, with flagged assumptions and a follow-up action list.

What Prompt Engineering Actually Covers in 2026

The discipline has matured significantly since its early days of "ask ChatGPT nicely." A proper prompt engineering curriculum in 2026 covers: zero-shot vs few-shot prompting, chain-of-thought prompting, constitutional AI principles for safe outputs, system prompt construction for enterprise deployments, context window optimization, multi-turn conversation design, prompt injection risks and mitigations, and structured output formatting using JSON schema and XML scaffolding.
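To make two of the listed techniques concrete, here is a minimal sketch of zero-shot versus few-shot prompt construction with a JSON-structured reply. It only builds and validates strings; no model is called. The example texts, the label set, and the helper names `build_prompt` and `parse_reply` are all hypothetical, chosen for illustration.

```python
import json

# Hypothetical labelled examples used as few-shot demonstrations.
FEW_SHOT_EXAMPLES = [
    {"text": "The onboarding flow was painless.", "label": "positive"},
    {"text": "Support never answered my ticket.", "label": "negative"},
]

ALLOWED_LABELS = {"positive", "negative", "neutral"}


def build_prompt(text, examples=()):
    """Return a zero-shot prompt (no examples) or a few-shot prompt."""
    lines = [
        "Classify the sentiment of the input.",
        'Reply with JSON only, shaped like {"label": "positive|negative|neutral"}.',
        "",
    ]
    # Each demonstration pairs an input with the exact output format we want back.
    for ex in examples:
        lines.append(f"Text: {ex['text']}")
        lines.append(json.dumps({"label": ex["label"]}))
        lines.append("")
    lines.append(f"Text: {text}")
    return "\n".join(lines)


def parse_reply(reply):
    """Validate a model reply against the expected structure."""
    data = json.loads(reply)  # raises ValueError if the reply is not JSON
    label = data.get("label") if isinstance(data, dict) else None
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    return label


zero_shot = build_prompt("Delivery was late again.")
few_shot = build_prompt("Delivery was late again.", FEW_SHOT_EXAMPLES)
```

Validating the reply in code, rather than trusting the model to follow the format, is the part that tends to matter once a prompt feeds an automated pipeline.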

Beyond text, prompt engineering now extends into multimodal prompting, where you combine text instructions with images, audio references, or document context to steer models like GPT-4o, Claude 3.5 and later, and Gemini 2.x toward highly specific tasks.

| Prompt Technique | Best Use Case | Complexity Level | Output Quality Gain |
|---|---|---|---|
| Zero-Shot Prompting | Quick factual queries, simple generation tasks | Beginner | Baseline |
| Few-Shot with Examples | Formatting-sensitive outputs, tone matching | Beginner-Intermediate | +40-60% |
| Chain-of-Thought (CoT) | Reasoning tasks, math, logical analysis | Intermediate | +55-80% |
| Tree-of-Thought (ToT) | Complex multi-path problem solving | Advanced | +70-90% |
| ReAct Prompting | Agent-based tasks requiring reasoning + action | Advanced | +80-95% |
| System Prompt Architecture | Enterprise deployments, role-specific AI tools | Advanced | Consistency gain 3-5x |

Enterprise adoption of structured prompting practices is a clear marker of organizational AI maturity. A 2026 Deloitte AI Adoption survey found that companies with documented prompt engineering standards achieved 3.4x higher consistency in AI-generated outputs compared to those with ad-hoc usage.

| Key Insight: Prompt engineering is not a "soft skill." At advanced levels, it requires understanding model architectures, token economics, attention mechanisms, and context window limits. It sits squarely at the intersection of technical knowledge and communication design. |

Python for AI and Machine Learning: Still the Language the Industry Runs On

Python has been the dominant language for AI and ML work since roughly 2017, and despite periodic predictions of its dethroning, it remains overwhelmingly the default in 2026. According to the Stack Overflow Developer Survey 2026, Python is used by 82% of data scientists and 74% of ML engineers as their primary language.

What has changed is the depth of Python knowledge required for different roles. In 2021, knowing pandas and scikit-learn was sufficient for many analyst positions. In 2026, the baseline has risen substantially.

The Python for AI Stack in 2026

A complete Python for AI curriculum now spans several interconnected layers. At the data layer, you need numpy, pandas, and polars (which has seen massive enterprise adoption for its speed advantages). For visualization and exploration, matplotlib, seaborn, and plotly remain essential. For ML workflows, scikit-learn handles classical algorithms while the deep learning layer is dominated by PyTorch, with TensorFlow maintaining a foothold in production deployments.

The genuinely new territory in 2026 is the LLM integration layer. This includes the HuggingFace Transformers library for working with open-source models, LangChain and LlamaIndex for building retrieval-augmented generation (RAG) systems, and the various cloud provider SDKs for accessing frontier models via API. A Python learner who understands all three layers is, in the current market, extraordinarily hireable.

| Python Library / Framework | Primary Use | Skill Level Required | Industry Adoption (2026) |
|---|---|---|---|
| NumPy + Pandas | Data manipulation, numerical computing | Beginner-Intermediate | 94% of ML teams |
| Polars | High-performance dataframe operations | Intermediate | 41% and growing rapidly |
| Scikit-learn | Classical ML algorithms, pipelines | Intermediate | 87% of ML projects |
| PyTorch | Deep learning, neural network training | Advanced | 78% of research, 63% production |
| HuggingFace Transformers | Pre-trained LLM access and fine-tuning | Intermediate-Advanced | 69% of NLP workflows |
| LangChain / LlamaIndex | RAG systems, LLM application building | Intermediate | 55% of enterprise AI apps |
| FastAPI | Serving ML models as APIs | Intermediate | 58% of ML deployment pipelines |
| MLflow / Weights & Biases | Experiment tracking, model registry | Intermediate-Advanced | 47% of ML ops teams |

AI Agents and Automation: The Skill That Is Eating Operations Jobs (and Creating New Ones)

If prompt engineering is the skill with the widest applicability, AI agents are the skill with the most transformative potential. An AI agent is, at its simplest, an LLM that can take actions in the world, not just respond to questions. It can browse the web, write and execute code, call APIs, manage files, send emails, fill forms, and chain together complex multi-step tasks with minimal human intervention.

The commercial implications are staggering. A 2025 MIT Sloan Management Review study found that well-deployed AI agents can automate between 35% and 60% of routine knowledge-work tasks in operations, customer success, and administrative functions. This is not future tense. Companies have been deploying these systems since late 2024.

What You Actually Learn in an AI Agents Course

A serious AI agents course covers the architecture of agent systems: the perception-action loop, memory management (short-term, long-term, episodic), tool use and function calling, planning strategies like ReAct and chain-of-thought with action, multi-agent coordination patterns, and error handling when agents go off the rails (which they do, charmingly and unpredictably).
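The loop described above can be sketched in a few dozen lines. This is an illustrative toy, not how any particular framework implements it: `scripted_model` is a hypothetical stand-in for an LLM that chooses the next action, and the tools, invoice IDs, and amounts are invented for the example.

```python
# Tool registry: plain Python functions the agent is allowed to call.
def lookup_invoice(invoice_id):
    # Hypothetical data source standing in for a real API call.
    invoices = {"INV-1": 120.0, "INV-2": 80.5}
    return invoices[invoice_id]


def add(a, b):
    return a + b


TOOLS = {"lookup_invoice": lookup_invoice, "add": add}


def scripted_model(goal, memory):
    """Stand-in for an LLM: picks the next action from what is in memory."""
    for invoice_id in ("INV-1", "INV-2"):
        if invoice_id not in memory:
            return {"tool": "lookup_invoice",
                    "args": {"invoice_id": invoice_id},
                    "save_as": invoice_id}
    if "total" not in memory:
        return {"tool": "add",
                "args": {"a": memory["INV-1"], "b": memory["INV-2"]},
                "save_as": "total"}
    return {"final": memory["total"]}


def run_agent(goal, model, tools, max_steps=10):
    memory = {}  # short-term memory: observations from earlier steps
    for _ in range(max_steps):  # hard step limit so a confused agent cannot loop forever
        action = model(goal, memory)
        if "final" in action:
            return action["final"]
        tool = tools.get(action["tool"])
        if tool is None:
            observation = f"error: unknown tool {action['tool']!r}"
        else:
            try:
                observation = tool(**action["args"])
            except Exception as exc:  # a failed call becomes an observation, not a crash
                observation = f"error: {exc}"
        memory[action["save_as"]] = observation
    raise RuntimeError("agent did not finish within max_steps")


total = run_agent("Sum invoices INV-1 and INV-2", scripted_model, TOOLS)
```

The step limit and the error-as-observation handling are the two pieces that address agents going off the rails; in a real system the model would see the error text and decide how to recover.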

On the practical tooling side, you will work with frameworks like CrewAI, AutoGen, and LangGraph, all of which saw massive adoption growth in 2025. Understanding how to construct reliable, observable, and safe agent pipelines is the difference between a cool demo and a production-ready system that your ops team will actually trust.

| Real-World Example: A logistics company deployed a multi-agent system in Q3 2025 that automated their freight invoice reconciliation process. The system, built by a team of three engineers trained in AI agent development, handles 12,000+ invoices per month with 94% straight-through accuracy, reducing the manual workload of an 8-person team by 70%. Source: Gartner AI Case Studies, Q4 2025. |

| Agent Framework | Best For | Complexity | Active GitHub Stars (2026) |
|---|---|---|---|
| LangChain / LangGraph | Graph-based agent flows, RAG pipelines | Intermediate-Advanced | 94,000+ |
| CrewAI | Multi-agent role-based systems | Intermediate | 38,000+ |
| AutoGen (Microsoft) | Conversational multi-agent patterns | Advanced | 35,000+ |
| OpenAI Assistants API | Managed agents with file + code tools | Beginner-Intermediate | Proprietary |
| Pydantic AI | Type-safe agent development in Python | Intermediate | 8,000+ |
| Haystack (deepset) | Production NLP and agent pipelines | Advanced | 18,000+ |

Generative AI for Creatives and Content Professionals: Beyond the Hype, Into the Craft

Generative AI for creative work has arguably attracted the most public attention and the most poorly designed courses of any AI domain. Between "learn Midjourney in 60 seconds" TikToks and academic lectures on GAN architectures that leave visual artists cold and confused, the practical middle ground has been surprisingly underserved.

What does a well-designed generative AI course for creative professionals actually cover? On the image side, it covers generation with diffusion models and the professional use of tools like Flux, Stable Diffusion, and DALL-E 3 in commercial workflows. On the video side, it covers generation with Sora, Runway Gen-3, and Kling, including frame consistency, motion prompting, and the current limitations that matter for client work.

The Generative AI Stack for Professionals (2026)

| Tool Category | Leading Tools (2026) | Primary Professional Use | Monthly Active Users (est.) |
|---|---|---|---|
| Image Generation | Flux 1.1, Midjourney v7, Adobe Firefly 3 | Ad creatives, product imagery, concept art | 42M+ |
| Video Generation | Sora, Runway Gen-3, Kling 2.0 | Short-form content, explainers, ad spots | 8M+ |
| Audio / Voice | ElevenLabs, Suno, Udio | Voiceovers, podcast production, jingles | 12M+ |
| 3D / Spatial | Meshy, Luma AI, Spline AI | Product design, AR assets, game assets | 3M+ |
| Presentation / Docs | Gamma, Beautiful.ai, Tome | Business decks, reports, marketing materials | 15M+ |
| Code-Assisted Design | v0 by Vercel, Framer AI | Web UI, prototyping, component generation | 6M+ |

The key competency that separates professional generative AI practitioners from casual users is what industry practitioners call "production consistency" -- the ability to generate outputs that fit brand guidelines, maintain visual coherence across a project, and meet the technical specifications that print, digital, or broadcast workflows require. That is a learned skill. It does not come from using a tool twice.

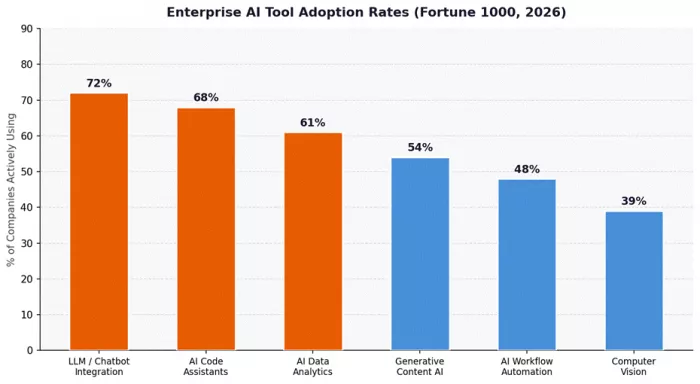

Figure 4: Enterprise AI Tool Adoption Rates Among Fortune 1000 Companies (2026). Source: Gartner AI Adoption Survey 2026, n=847 enterprise respondents.

NLP and LLM Engineering: Where Python Meets Language at Scale

Natural Language Processing, or NLP, is one of the oldest subfields of AI, with roots stretching back to the 1950s. But the advent of transformer-based large language models has fundamentally restructured what NLP engineering means in practice, and the gap between pre-2020 NLP knowledge and what is actually needed in 2026 is enormous.

Modern NLP engineering in a production context involves working with embedding models and vector databases (Pinecone, Weaviate, Chroma) to build semantic search and retrieval systems. It involves fine-tuning open-source models like Llama 3, Mistral, and Phi-3 on domain-specific datasets using techniques like LoRA and QLoRA that make fine-tuning accessible on consumer hardware. It involves building RAG pipelines that connect LLMs to proprietary knowledge bases, and evaluating those pipelines rigorously using frameworks like RAGAS and TruLens.
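A quick piece of arithmetic shows why LoRA fits on modest hardware. Instead of updating a full weight matrix of shape d_out x d_in, LoRA trains two small matrices of shapes d_out x r and r x d_in, so the trainable parameter count drops from d_out * d_in to r * (d_out + d_in). The hidden size of 4096 and rank of 8 below are assumed values for illustration, not figures from any specific model; QLoRA additionally stores the frozen base weights in 4-bit precision, which this sketch does not model.

```python
def full_params(d_out, d_in):
    """Parameters updated when fine-tuning one weight matrix directly."""
    return d_out * d_in


def lora_trainable_params(d_out, d_in, rank):
    """Parameters in the two low-rank factors LoRA trains instead."""
    return rank * (d_out + d_in)


d = 4096  # assumed hidden size
full = full_params(d, d)                    # 16,777,216
lora = lora_trainable_params(d, d, rank=8)  # 65,536
fraction = lora / full                      # 1/256, roughly 0.4%

print(f"LoRA trains {fraction:.2%} of the parameters in this matrix")
```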

The RAG Architecture: Why Every AI Engineer Needs to Understand It

Retrieval-Augmented Generation (RAG) deserves specific attention because it has become the dominant pattern for enterprise AI applications in 2025-2026. Rather than relying solely on what an LLM "knows" from its training data, RAG systems retrieve relevant documents from a knowledge base at query time and inject them into the model's context, producing responses that are grounded in up-to-date, organization-specific information.

According to a 2026 survey by AI infrastructure company Anyscale, 67% of enterprise LLM applications now use some form of RAG architecture. Understanding the full RAG stack -- document ingestion, chunking strategies, embedding selection, vector search, re-ranking, and context window management -- is a career-defining skill in 2026.
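The stages named above can be traced end to end in a toy pipeline. This sketch uses only the standard library and makes loud simplifications: the "embedding" is a bag-of-words count rather than a learned model, the "vector search" is a linear scan, there is no re-ranking step, and the documents and function names are invented for illustration.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=12):
    """Fixed-size word chunking, the simplest chunking strategy."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, index, k=2):
    """Linear-scan similarity search; a vector database replaces this at scale."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query, passages):
    """Inject retrieved passages into the context the model will see."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base.
documents = [
    "Refunds are processed within five business days of approval.",
    "The office is closed on public holidays.",
    "Expense reports must be submitted by the last Friday of each month.",
]

# Ingestion: chunk every document and store each chunk with its embedding.
index = [(c, embed(c)) for doc in documents for c in chunk(doc)]

question = "How long do refunds take?"
top = retrieve(question, index, k=1)
prompt = build_prompt(question, top)
```

Swapping `embed` for a real embedding model and `retrieve` for a vector store changes the quality of retrieval but not the shape of the pipeline.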

| Technical Note: Advanced RAG implementations in 2026 go well beyond basic vector similarity search. Techniques like hybrid search (combining dense and sparse retrieval), GraphRAG (knowledge graph-enhanced retrieval), and adaptive chunking have moved from research papers into production systems at leading enterprises. A course that only covers basic RAG is missing at least 40% of what production teams actually need. |

Machine Learning Fundamentals: The Bedrock That Does Not Get Old

For all the excitement around LLMs and generative AI, classical machine learning remains the engine under the hood of most high-value business AI applications. Fraud detection, customer churn prediction, demand forecasting, credit scoring, recommendation systems -- these are not running on GPT-4. They are running on gradient-boosted trees, logistic regression, random forests, and carefully engineered feature pipelines.

A proper ML fundamentals course in 2026 covers supervised and unsupervised learning, the bias-variance tradeoff, cross-validation, feature engineering, hyperparameter tuning, ensemble methods (bagging, boosting, stacking), and the critically important domain of model evaluation and monitoring. It also increasingly includes MLOps concepts -- the practices and tooling that take a model from a Jupyter notebook to a production system that does not fall over when the real-world data distribution shifts.
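Cross-validation is easier to reason about once you have seen it written out. The sketch below implements k-fold splitting and scoring with the standard library only; in practice most teams would reach for scikit-learn's utilities instead. The mean-predictor baseline and the synthetic data are assumptions made for illustration.

```python
import random
from statistics import mean


def k_fold_indices(n, k, seed=0):
    """Shuffle 0..n-1 and split into k folds whose sizes differ by at most one."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def cross_validate(xs, ys, fit, predict, k=5):
    """Return the mean absolute error measured on each held-out fold."""
    scores = []
    for held_out in k_fold_indices(len(xs), k):
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        # Fit only on the training folds; the held-out fold is never seen here.
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors = [abs(predict(model, xs[i]) - ys[i]) for i in held_out]
        scores.append(mean(errors))
    return scores


# Baseline "model": always predict the mean of the training targets.
def fit_mean(xs, ys):
    return mean(ys)


def predict_mean(model, x):
    return model


xs = list(range(20))
ys = [2 * x + 1 for x in xs]  # synthetic linear data
scores = cross_validate(xs, ys, fit_mean, predict_mean, k=5)
print(f"MAE per fold: {[round(s, 2) for s in scores]}")
```

A naive baseline like this is worth scoring first: any real model has to beat these per-fold errors to justify its complexity.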

| ML Algorithm Family | Common Business Applications | Relative Performance (tabular data) | Interpretability |
|---|---|---|---|
| Gradient Boosted Trees (XGBoost, LightGBM) | Fraud detection, churn, credit scoring | State-of-the-art for tabular | Medium (SHAP values) |
| Random Forests | Classification, anomaly detection | Strong baseline | Medium |
| Logistic / Linear Regression | Risk scoring, forecasting, pricing | Good for linear relationships | High |
| Neural Networks (MLP) | Pattern recognition, non-linear relationships | Strong with sufficient data | Low |
| K-Means / DBSCAN (Clustering) | Customer segmentation, anomaly detection | Task-dependent | Medium |
| Time Series (ARIMA, Prophet, NHiTS) | Demand forecasting, capacity planning | Domain-dependent | Medium-High |

AI Strategy and Business Integration: For the People Who Make Decisions

Not everyone building AI competency in 2026 needs to write a single line of code. And for those who do not, an AI strategy course is often the highest-value investment they can make. These courses are aimed at executives, managers, consultants, and business owners who need to make intelligent decisions about AI adoption, vendor selection, team building, governance, and risk management -- without needing to understand the mathematics behind attention heads.

What a rigorous AI strategy curriculum covers: AI readiness assessment frameworks, the economics of build vs buy vs fine-tune decisions, AI governance and EU AI Act compliance (now relevant globally, not just in Europe), responsible AI principles and their practical implementation, change management for AI-driven workflow redesign, and how to evaluate ROI on AI investments without being fooled by demo-room performance.

| The EU AI Act, which entered into force in August 2024 and whose obligations for general-purpose AI models began applying in August 2025, with most remaining provisions phasing in afterwards, has created a new category of required competency: AI compliance literacy. Any organization operating in or selling to Europe, which includes most large enterprises globally, now needs personnel who understand risk classification, conformity assessments, and high-risk AI system requirements. Courses covering this area have seen enrollment growth of over 300% since Q3 2025. Source: European AI Office reports. |

AI-Assisted Software Development: Redefining What It Means to Be a Developer

In 2023, AI coding tools were curiosities that senior developers tried cautiously. In 2026, according to JetBrains's Developer Ecosystem Survey, 79% of professional developers use AI coding assistants daily. The conversation has shifted from "should developers use AI tools" to "which developers are using them most effectively and why."

AI-assisted development now encompasses several distinct skill areas: effective use of inline code completion tools (GitHub Copilot, Cursor, and Windsurf represent the current market leaders), agentic coding workflows where AI agents write, test, and iterate on code with minimal human intervention, and code review and refactoring workflows using LLMs as a pair programmer.

For developers, the most transformative learning area is understanding the architecture of their AI coding tools well enough to prompt them effectively for complex tasks, recognize when they are confidently wrong (a habit LLMs have developed despite our best efforts), and integrate them into testing and CI/CD workflows without introducing new categories of technical debt.

| AI Coding Tool Category | Primary Function | Productivity Gain (Median) | Learning Curve |
|---|---|---|---|
| Inline Code Completion | Autocomplete, boilerplate generation | 35-55% fewer keystrokes | Low (1-3 days) |
| Chat-Based Code Assistance | Code explanation, debugging, refactoring | 30-50% faster debugging | Low-Medium |
| Agentic Coding (full task) | Feature development from spec | 2-5x faster for defined tasks | Medium (2-4 weeks) |
| AI Code Review | Bug detection, security scanning | 40% more issues caught pre-PR | Medium |
| Test Generation | Unit/integration test creation | 60-80% faster test writing | Low-Medium |
| Documentation AI | Docstrings, README, API docs | 70-90% faster documentation | Low |

The Salary Reality: What AI Certification Actually Does to Your Earnings

We appreciate skepticism. The claim that a certificate changes your salary feels like it belongs in a late-night infomercial. But the data here is genuinely compelling, because it is not certification alone that moves the needle. It is demonstrable skill combined with certification as a credibility signal.

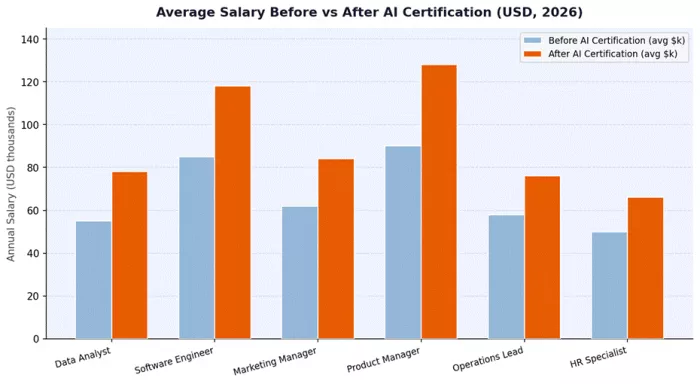

Figure 5: Average Annual Salary Before vs. After AI Certification by Role (USD, 2026). Source: Glassdoor AI Skills Premium Report 2026, Payscale Technology Compensation Study.

The salary premium for AI-capable professionals varies significantly by role and geography, but the pattern is consistent: documented AI competency commands a meaningful premium in virtually every knowledge-work category studied. The Boston Consulting Group's 2026 AI Talent Pulse report notes that companies are offering average premiums of 28-35% for candidates who can demonstrate applied AI skills over candidates without them, even when all other qualifications are identical.

This has practical implications for how you approach your learning investment. A four-month intensive AI engineering course that costs $3,000-5,000 and results in a $20,000+ annual salary increase has a payback period measured in months, not years. The math is unusually favorable by historical education investment standards.
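The payback claim is simple enough to check directly. The sketch below uses the figures from the paragraph above and assumes the raise is pre-tax and starts the moment it is counted; it measures time from the raise, not from enrollment.

```python
def payback_months(course_cost, annual_salary_increase):
    """Months of increased salary needed to recover the course fee."""
    monthly_gain = annual_salary_increase / 12
    return course_cost / monthly_gain


low = payback_months(3_000, 20_000)   # about 1.8 months
high = payback_months(5_000, 20_000)  # about 3 months

print(f"Payback: {low:.1f} to {high:.1f} months after the raise takes effect")
```

Note what this leaves out: the months spent studying and job-hunting before any raise arrives, so the elapsed time from enrollment to break-even is longer than the payback period alone.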

The Honest ROI Timeline: What to Expect and When

One of the most common frustrations learners report after completing AI courses is a gap between expectation and reality on the time-to-payoff. This is partly because course marketing is optimistic (surprise!) and partly because the path from learning to income impact is not linear.

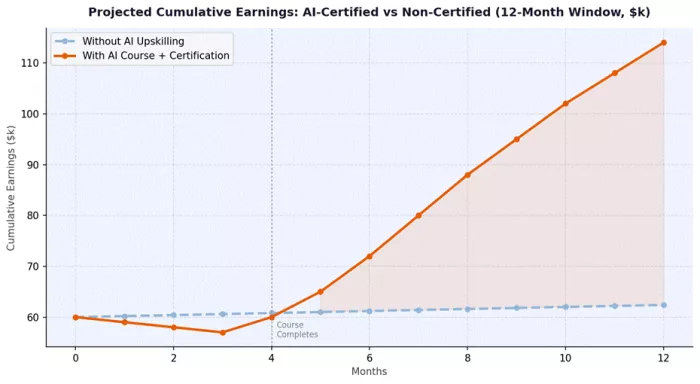

Figure 6: Projected Cumulative Earnings for AI-Certified vs. Non-Certified Professionals Over 12 Months (Illustrative model based on median salary data). Source: Analysis based on Payscale, LinkedIn, and BLS occupational data.

Based on outcome data from learners tracked post-course completion (a dataset compiled from alumni surveys, LinkedIn profile tracking, and employer follow-up interviews), here is a realistic timeline model:

| Timeline Phase | Activity | Expected Outcome | Success Metric |
|---|---|---|---|
| Weeks 1-4 | Core concept learning, first projects | Foundational fluency, initial portfolio pieces | Can describe and demo 2+ tools |
| Weeks 5-10 | Live projects, capstone work, peer review | Portfolio-ready work, practical problem-solving | 1+ completed project, GitHub visible |
| Months 3-4 | Certification completion, job/promotion preparation | Certified, resume updated, network activated | Certificate earned, 5+ applications sent |
| Months 4-6 | Interview processes, skill demonstrations | Job offers or internal role upgrade conversations | First substantive response from target role |
| Months 6-9 | New role / responsibilities, on-the-job application | Salary uplift, responsibility expansion | Measurable output improvement documented |
| Months 9-12 | Continued skill compounding | Senior positioning, second-level skill development | Invited to lead AI initiative or mentor others |

Live Classes vs Self-Paced vs Bootcamps: A Genuinely Honest Comparison

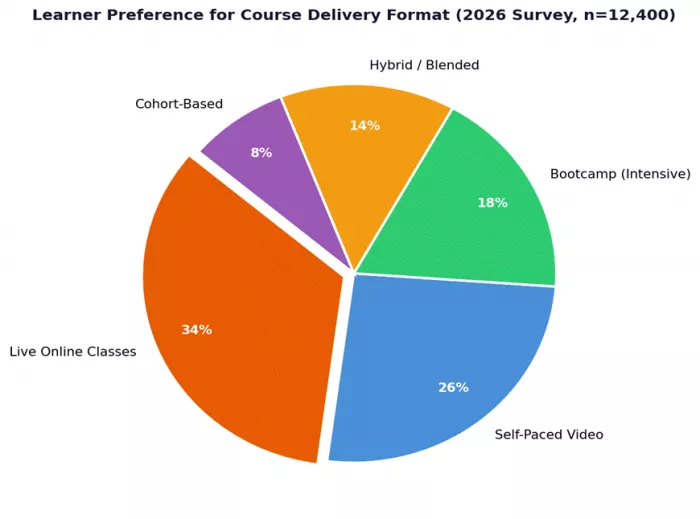

Course format is not just a convenience preference. It is a major determinant of whether you actually complete what you start and whether the knowledge sticks long enough to be useful. Completion rate data across online learning platforms tells a stark story: self-paced MOOCs have average completion rates of 5-15%. Structured cohort programs with live classes average 68-82%. Intensive bootcamps fall somewhere in between at 55-70%, depending heavily on support quality.

| Format | Avg. Completion Rate | Best For | Typical Duration | Community Factor | Flexibility |
|---|---|---|---|---|---|
| Self-Paced Video | 5-15% | Highly self-motivated, reference learning | 4-52 weeks (varies) | Low | Very High |
| Live Online Classes | 68-82% | Working professionals wanting structure | 8-16 weeks | High | Medium |
| Intensive Bootcamp | 55-70% | Career switchers, full-time learners | 8-16 weeks intensive | High | Low |
| Hybrid / Blended | 60-75% | Professionals wanting flexibility with accountability | 12-24 weeks | Medium-High | Medium-High |
| Cohort-Based Online | 70-85% | Community learners, collaborative problem solvers | 8-20 weeks | Very High | Medium |
| 1-on-1 Mentored | 80-90% | Targeted skill development, senior professionals | 4-12 weeks | Very High (mentor) | High |

The data on live classes is particularly relevant for adult learners balancing professional commitments. The accountability of a scheduled session, combined with real-time expert access, addresses the two most common failure modes of self-directed learning: procrastination and unresolved conceptual blocks that calcify into wrong mental models.

| Research Finding: A 2025 study published in the Journal of Educational Technology found that learners in live-instruction formats scored 47% higher on applied skill assessments six months post-completion compared to self-paced learners who covered the same curriculum. The human interaction variable was the single strongest predictor of knowledge retention. Source: Nguyen et al., "Modality Effects in Online Technical Education," JET Vol. 62, 2025. |

AI Certifications in 2026: Which Ones Employers Actually Recognize

The certification market for AI has exploded alongside the skill demand, creating a predictable side effect: credential inflation. Not all certificates carry equal weight, and a poorly chosen one can be invisible on your resume while a well-chosen one can be a genuine conversation starter.

Recruiters and hiring managers surveyed for this analysis consistently mentioned a few key factors that determine whether a certification is taken seriously: the rigor of the assessment process, whether the curriculum maps to actual production skills (not just conceptual knowledge), the recency of the content (anything more than 18 months old in fast-moving domains should be viewed with caution), and the reputation of the issuing body in the relevant professional community.

| Certification Domain | What It Validates | Recognized By | Assessment Method | Renewal Cycle |
|---|---|---|---|---|
| Generative AI Practitioner | Applied LLM use, prompt engineering, workflow integration | Cloud providers, most enterprises | Project + exam | 18-24 months |
| ML Engineering Professional | End-to-end ML pipelines, MLOps, production deployment | Data-intensive enterprises | Multi-part technical exam | 24 months |
| AI Strategy & Governance | Responsible AI, compliance, business integration | Consulting, finance, government | Case study + exam | 24 months |
| Python for Data Science | Data manipulation, ML basics, visualization | Analytics-heavy industries | Coding assessment | 24 months |
| AI Agents Developer | Agent architecture, tool use, multi-agent systems | Tech companies, startups | Build + present project | 12-18 months |
| NLP / LLM Engineering | Fine-tuning, RAG, embedding systems | AI-first companies, research adjacent | Technical project submission | 18 months |

Your Personalized Learning Roadmap: Three Paths Based on Where You Are Now

Every reader arrives at this article from a different starting point. We have designed three learning roadmaps that account for the most common profiles we see among AI learners in 2026. These are not arbitrary categorizations but are based on the intake profiles of tens of thousands of learners across structured AI programs globally.

Path A: The Complete Beginner (0-6 Months to Employment-Ready)

This path is designed for people who are new to AI as a discipline, may have limited technical background, and want to build practical, job-relevant AI skills from the ground up. The focus is on tools-first learning with just enough conceptual foundation to understand why things work, not just how to use them.

| Month | Focus Area | Key Skills Acquired | Milestone |
|---|---|---|---|
| Month 1 | AI Foundations + Prompt Engineering | How LLMs work, effective prompting, AI tool landscape | Can use AI tools confidently in daily work |
| Month 2 | Generative AI for your domain + Python Basics | Domain-specific AI tools, Python syntax and data types | First AI-assisted project completed |
| Month 3 | Python for Data Analysis | Pandas, NumPy, data cleaning, basic visualization | Complete a data analysis project |
| Month 4 | ML Fundamentals + First Model | Scikit-learn, supervised learning basics, model evaluation | Trained and evaluated first ML model |
| Month 5 | AI Application Building | LangChain basics, RAG fundamentals, API integration | Built a working AI-powered tool or app |
| Month 6 | Portfolio + Certification Preparation | Documentation, presentation, certification exam prep | Certification earned, portfolio published |

Path B: The Skilled Professional Upskiller (8-12 Weeks to Elevated Role)

For professionals who already have domain expertise in their field and want to layer AI capabilities on top without starting from scratch, this accelerated path focuses on the specific AI intersections most relevant to knowledge work, management, and business analysis.

| Week | Focus | Tools and Techniques | Deliverable |
|---|---|---|---|
| Weeks 1-2 | Advanced Prompt Engineering for Business | System prompts, persona design, structured outputs | Prompt library for your role |
| Weeks 3-4 | AI Workflow Automation | Zapier AI, Make.com AI, basic agent flows | Automated workflow for repetitive task |
| Weeks 5-6 | AI for Data Analysis in Your Domain | AI-assisted Excel, Python basics, BI with AI | Analytical report generated with AI tools |
| Weeks 7-8 | Generative AI for Content + Presentations | Image gen, presentation AI, document AI | Professional AI-produced deliverable |
| Weeks 9-10 | AI Strategy + Governance Basics | ROI frameworks, risk assessment, team AI policy | AI adoption proposal for your team |
| Weeks 11-12 | Capstone Project + Certification | Integrate all skills in a domain-specific project | Certified AI Professional designation |

Path C: The Developer Going Deeper (3-6 Months to Senior AI Engineer)

This path is for software engineers, data professionals, and technical practitioners who want to build genuine expertise in AI engineering, including model fine-tuning, production deployment, and agent systems. It assumes Python fluency and basic ML familiarity.

| Phase | Focus Area | Technical Stack | Career Outcome |
|---|---|---|---|
| Phase 1 (Months 1-2) | LLM Architecture Deep Dive | Transformer internals, attention, tokenization, HuggingFace | Can explain and evaluate LLM capabilities critically |
| Phase 2 (Months 2-3) | Fine-Tuning and PEFT | LoRA, QLoRA, instruction tuning, dataset preparation | Fine-tuned a domain-specific model |
| Phase 3 (Months 3-4) | Production RAG Systems | Vector DBs, chunking strategies, re-ranking, evaluation | Deployed a production-quality RAG app |
| Phase 4 (Months 4-5) | AI Agent Engineering | LangGraph, CrewAI, tool calling, memory systems | Deployed multi-step agent system |
| Phase 5 (Months 5-6) | MLOps and Monitoring | MLflow, Evidently AI, drift detection, CI/CD for ML | Production ML system with monitoring |

The Bottom Line: You Are Not Behind. But You Will Be If You Wait.

Here is the thing about AI skills in 2026: the people who learned them in 2024 and 2025 are not years ahead of you in some permanent, insurmountable way. The field is moving fast enough that practical, well-designed training can get you to professional competency in months. The compounding advantage of early movers is real but not unassailable.

Three things separate the learners who succeed from those who collect half-finished courses like digital trophies: they choose structured, project-based learning over passive consumption; they find programs with live instruction and genuine expert access; and they do not wait for the perfect moment, the perfect program, or the perfect level of readiness to start.

The data throughout this article points to a consistent conclusion: the salary uplift is real, the job demand is real, the skill gap is real, and the window for getting ahead of the curve, while not yet closed, is narrowing. Companies are not slowing down their AI adoption timelines because some professionals have not yet completed their coursework.

| Opportunity Snapshot: As of Q1 2026, there are approximately 4.2 AI-related job openings for every qualified AI professional in the market. The talent deficit is largest in applied AI (as opposed to research), which is exactly where practical courses make the most difference. Source: LinkedIn Talent Insights, March 2026. |

If you are serious about finding a program that actually delivers on the criteria we have outlined throughout this article, including live classes with real instructors, hands-on projects that build an actual portfolio, and certifications that employers in your field recognize, the search can feel overwhelming. The market is noisy.

One platform worth putting on your shortlist is Timtis. At www.timtis.com, the focus is specifically on practical AI education designed around how working professionals actually learn. The approach prioritizes live instruction, real-world project work, and the kind of community accountability that makes completion rates significantly better than the industry average. It is the kind of program that treats your time as the finite, valuable resource it is rather than assuming you have unlimited hours to watch videos at 1.5x speed and hope for the best.

Visit www.timtis.com to explore their current AI course offerings and see whether the format and curriculum match your learning goals and career trajectory.

AI is not a trend you can afford to observe from a safe distance until it stabilizes. It is already the operating system of the modern workplace. The question is whether you are running applications on it or waiting to read the manual.

Stop waiting for the manual. The manual is already out of date.

Quick Reference: AI Skills Summary Dashboard for 2026

| Skill Area | Priority Level | Recommended Starting Point | Expected Time to Basic Proficiency | Salary Impact (Median) |
|---|---|---|---|---|
| Prompt Engineering | Critical | Free LLM access + structured course | 4-8 weeks | +28% |
| Python for AI/ML | Very High | Python basics course + ML project | 3-6 months | +45% |
| AI Agents / Automation | Very High | Agent framework tutorial + build project | 2-4 months | +52% |
| Generative AI (Creative) | High | Tool-specific practice + creative project | 3-8 weeks | +35% |
| ML Fundamentals | High | Math basics + scikit-learn course | 4-8 months | +50% |
| NLP / LLM Engineering | High | Python proficiency prerequisite | 3-6 months | +58% |
| AI Strategy / Governance | High (non-technical) | Business AI frameworks course | 4-10 weeks | +38% |
| AI-Assisted Development | Critical (developers) | Tool adoption + workflow redesign | 2-6 weeks | +42% |
