Table of Contents

- Introduction

- Forage: free certificate, open access, weak credentialing signal

- GitHub Copilot Student: the free plan that just got narrower

- Replit Agent: fastest to a demo, dangerous if mistaken for production

- Cursor Pro: the choice once a student can already code

- Lovable: the no-code platform with a real backend story

- Claude and ChatGPT: the lab partner who never sleeps

- Where this leaves a 2026 student portfolio

- Closing read

Introduction

Somewhere in 2025, the average undergraduate stopped describing their summer as "interned at" and started describing it as "shipped." The change of verb sounds small. It is not. "Interned" implies someone gave you an opportunity. "Shipped" implies you took one. Both can show up as a single line on a resume; only one of them shows up as a working URL.

That swap is the entire subject of this piece. The platforms below are the ones doing the actual work behind it: simulated case studies on Forage, the daily craft of a coding assistant inside an editor, the launchpad demos of a vibe-coding agent, the always-on tutor available at 3am with infinite patience and questionable accuracy. Each section presents the tool, what it actually does, what users say, and where field experience reveals it falls short. Numbers are dated where possible because pricing and limits in this category move fast.

Forage: free certificate, open access, weak credentialing signal

Forage runs more than 70 corporate-branded job simulations co-built with firms like JPMorgan, Goldman Sachs, BCG, Accenture, and KPMG. Anyone with an email can enrol. The catch is the trade between accessibility and credentialing weight, and that trade has been getting worse for the user.

What Forage actually offers

| Capability | What it actually does |

|---|---|

| Programme catalogue | 70+ simulations across finance, consulting, law, tech, FMCG. Heaviest in finance and consulting; thinner in design and product. |

| Module structure | 5 to 6 hours of self-paced modules. A fictional partner email kicks it off; tasks include analysis, modelling, or memo drafting. |

| Feedback layer | Pre-recorded video feedback from real employees of the partner firm; submitted work is benchmarked against a model answer. |

| Selection & enrolment | Open access. No application, no interview, no peer cohort. Anyone in any country can sign up. |

| Output / proof of completion | A branded certificate plus LinkedIn integration. Forage's own terms forbid listing the simulation as work experience or employment. |

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| Cost to student | Free across all programmes. Funded by employer partners who use Forage as a talent-sourcing channel. |

| G2 rating | 4.5 / 5 from 18 verified reviews; 66% of reviewers gave 5 stars, 33% gave 4. |

| Glassdoor (employer rating) | 4.4 / 5 from 30 reviews; 96% would recommend Forage as a workplace, useful as a proxy for content quality. |

| Time investment per programme | 5 to 6 hours, no deadline. Completing five in a week is technically possible, but to a recruiter it reads as certificate farming. |

Where it earns its place and where it falls short

| Strengths | Weaknesses |

|---|---|

| Genuinely free with no usage caps; removes financial barrier to industry exposure. | Cannot be listed under work experience per Forage's own terms; Yale and Arizona career offices flag this in writing. |

| Co-designed with named firms; tasks mirror real analyst work credibly. | Open enrolment removes the selection signal recruiters value most. |

| Best-in-class for industry exploration before applying to anything competitive. | Top-tier consulting and banking screeners now actively discount Forage-heavy CVs. |

| Useful interview talking points, since model answers expose where reasoning fell short. | Limited peer or mentor interaction; feedback is canned, not personal. |

Field observation: Forage works as exposure, not credential. A pharmacy student who uses a BCG simulation to figure out whether strategy work fits comes out ahead. The same student listing four certificates as employment comes out flagged.

GitHub Copilot Student: the free plan that just got narrower

GitHub's student offering reached nearly two million verified users by early 2026. The plan stayed free in the March 2026 update; what changed was access to premium models. Anyone whose workflow relied on manually picking Claude Opus or GPT-5.4 has had to rebuild it.

What Copilot actually offers students

| Capability | What it actually does |

|---|---|

| IDE coverage | Native integration with VS Code, JetBrains IDEs, Visual Studio, Neovim, Vim, and the GitHub web editor. |

| Completion engine | Unlimited basic ghost-text completions; suggestions match local code style and project conventions. |

| Chat, edit, and agent modes | Inline chat for questions, edit mode for targeted changes, agent mode for multi-file tasks. PR review on GitHub.com. |

| Model access (post Mar 2026) | GPT-5.4, GPT-5.3-Codex, Claude Sonnet, Claude Opus reachable only through Auto mode. Manual model picker disabled on the student tier. |

| Premium request budget | 300 premium requests per month. Claude Opus 4.7 counts as 7.5 requests per call, capping heavy users at roughly 40 hard requests. |
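
The budget arithmetic in the last row can be sketched in a few lines. This is a minimal illustration only: the 300-request allowance and the 7.5x multiplier are the figures quoted above, while the `PremiumBudget` class and its method name are hypothetical and not part of any GitHub API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PremiumBudget:
    """Hypothetical helper: a monthly allowance of premium requests."""

    monthly_allowance: float  # 300 on the student tier, per the table above

    def calls_available(self, multiplier: float) -> int:
        """Whole calls that fit in the allowance at a given per-call multiplier."""
        if multiplier <= 0:
            raise ValueError("multiplier must be positive")
        return int(self.monthly_allowance // multiplier)


budget = PremiumBudget(monthly_allowance=300)

# A model billed at 7.5 premium requests per call (the Opus figure quoted above).
opus_calls = budget.calls_available(7.5)
print(opus_calls)  # 40
```

The same helper shows why a 1x model stretches the allowance to 300 calls, which is the gap a student feels when Auto mode routes a task to a heavier model.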

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| Student plan cost | Free with verified .edu address or DigitalOcean / GitHub Education enrolment. |

| Paid tiers (non-student) | Pro $10/mo, Pro+ $19/mo, Business $19/seat/mo, Enterprise $39/seat/mo. |

| Capterra rating | 4.7 / 5 from 28 reviews; the highest of any AI coding tool tracked on Capterra. |

| G2 rating | 4.5 / 5; 122 mentions for ease of use, 106 for intelligent assistance, 35 for productivity gain. |

| April 2026 status | Signups for new Pro, Pro+, and Student plans paused on April 20, 2026 per GitHub's notice. |

Strengths and weaknesses, by use case

| Strengths | Weaknesses |

|---|---|

| Free tier covers genuinely heavy student usage on basic completions. | Premium model access narrowed in March 2026; Auto mode now chooses the model on the user's behalf, with no manual override on the student tier. |

| Tightest IDE integration of any tool reviewed; works in whatever editor the student already uses. | 300-request cap drains fast for anyone working on substantial debugging chains. |

| Public-code matching filter prevents accidentally suggesting copyleft snippets. | Loses context in files over a few hundred lines; suggests inconsistent variable names. |

| Shapes habits toward smaller, testable functions because the autocomplete rewards them. | Roughly a third of CS students now struggle to complete assignments with Copilot disabled. |

Field observation: the GitHub history Copilot helps a student build is genuinely cleaner than what undergraduates produced two years ago. The fluency it removes the need to acquire is a different problem entirely, and one no recruiter is going to fix.

Replit Agent: fastest to a demo, dangerous if mistaken for production

Replit makes the path from "describe an app" to "deployed URL" shorter than any tool reviewed. That speed is also the source of its reputational damage. The SaaStr database deletion incident of July 2025 is now logged as OECD AI Incident 1152. Subsequent guardrails reduced the underlying behaviour but did not eliminate it, and Capterra reviewers still report it.

What Replit actually offers

| Capability | What it actually does |

|---|---|

| Browser IDE | Full editing environment for 50+ languages with no local install. Works on Chromebooks and tablets. |

| Replit Agent 4 (Mar 2026) | Builds in parallel across isolated micro-VMs; resolves merge conflicts automatically with what Replit reports as ~90% success. |

| Deployment | One-click publish to a Replit subdomain or custom domain. PostgreSQL, Replit Database, and Object Storage available natively. |

| Collaboration | Multiplayer real-time editing, role-based access, comments. Supports interview environments and small team builds. |

| Mobile and offline | Full iOS and Android apps. No offline support; constant internet required. |

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| Pricing tiers | Starter (free), Replit Core $20/mo with $20 credit included, Replit Pro $100/mo team plan, Enterprise custom. |

| Billing model | Effort-based for Agent: every Agent interaction is billable, including text guidance with no code change. |

| G2 rating | 4.5 / 5 from 327 verified reviews. |

| Capterra rating | 4.6 / 5; reviewers cite both rapid prototyping and surprise overage charges. |

| User base scale | Millions of total users; over 500,000 business users per Replit's stated figures. |

Strengths and weaknesses, by use case

| Strengths | Weaknesses |

|---|---|

| Idea to deployed URL in an evening; faster than any local development setup. | Effort-based billing produces unpredictable costs; $100+ overages on heavy weekends are common in user reports. |

| No environment configuration; templates handle dependency installation. | Agent has destructive failure modes documented in Capterra and Reddit threads. |

| Strong fit for hackathon demos, school projects, and weekend prototypes. | Internet-only operation rules out fieldwork, travel, or unreliable connectivity. |

| Genuinely useful for non-coders who want to ship something they can show. | Production deployments carry real risk; the Lemkin SaaStr incident is referenced specifically by recruiters. |

Field observation: Replit lives in the throwaway zone. Hackathon demos, school projects on dummy data, weekend prototypes. Anything with real users, real money, or real data should run elsewhere.

Cursor Pro: the choice once a student can already code

Cursor is a VS Code fork with an LLM threaded into every action. The killer feature is Composer for multi-file edits. Independent reviews put productivity gains at 30 to 50% on real refactor work for developers who already know what they are doing. Beginners with no foundation get a different and worse outcome.

What Cursor actually offers

| Capability | What it actually does |

|---|---|

| Editor base | Forked from VS Code; preserves extensions, themes, keybinds. Migration from VS Code is one click. |

| Composer | Multi-file editing with codebase indexing; understands functions, types, and patterns across the project. |

| Tab autocomplete | Predicts the next 5 to 10 lines, often refactors as the user types. The feature most heavy users describe as the hook. |

| Agent mode | Autonomous task completion. Can scaffold a Next.js dashboard with auth and database in one prompt; checkpoints allow rollback. |

| Integrations | Model Context Protocol (MCP) for external tools, Skills for specialised agent behaviours, BugBot for PR review at $40/seat/mo. |

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| Pricing tiers | Hobby (free, limited agent requests), Pro $20/mo, Teams $40/seat/mo, Ultra $200/mo. |

| G2 rating | 4.6 / 5 from 34 reviews; 79% gave 5 stars, 17% gave 4. |

| Mid-2025 pricing incident | Pricing migration to a credit pool surprised heavy users with multi-hundred-dollar bills; CEO Michael Truell apologised in July 2025 and refunds were issued. |

| Recurring user complaint | $20 plan drains quickly for premium-model API usage; Auto mode is the workaround. |

Strengths and weaknesses, by use case

| Strengths | Weaknesses |

|---|---|

| Composer handles 200-component refactors that would take an engineer hours, in minutes. | Pricing model treats heavy use like a cloud bill, not a subscription. |

| VS Code base means almost zero switching cost for existing developers. | Suggestions can be inconsistent on complex code; manual validation still required. |

| Multi-model support including Claude, GPT, and Gemini in a single workspace. | Performance lag on lower-spec hardware once multiple agents are running. |

| Active feature velocity; MCP and background agents shipped in early 2026. | Worst possible tool for a beginner; produces working code without producing understanding. |

Field observation: Cursor rewards the student who already shipped something the hard way last year. It penalises the one who never has.

Headline scores cluster between 4.5 and 4.7 across the stack; the differences in what each tool actually buys are larger than the ratings suggest.

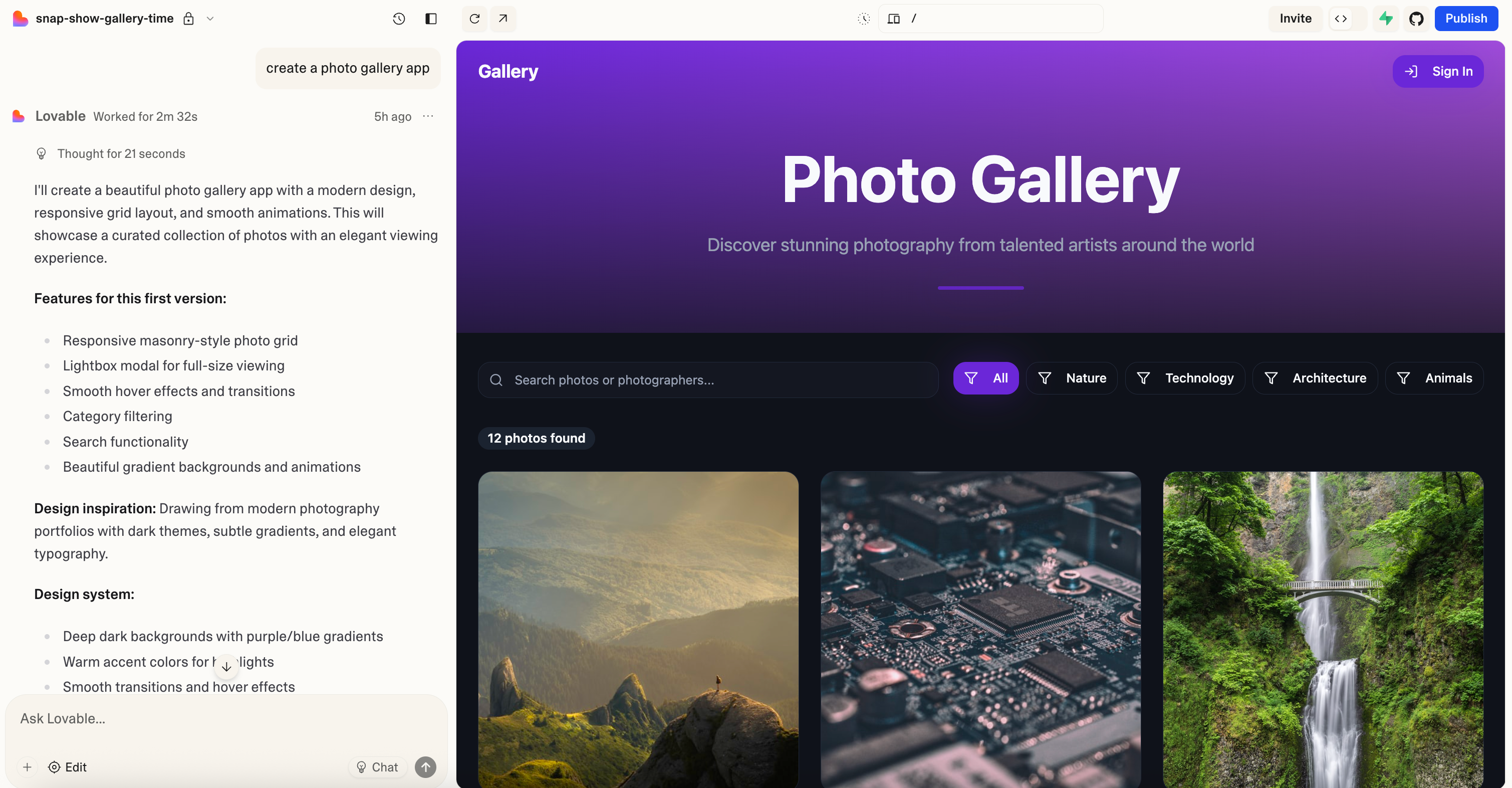

Lovable: the no-code platform with a real backend story

Lovable is the Stockholm startup that hit $100 million in annual recurring revenue within 12 months of launch, per the company's own disclosures. It is the platform a non-technical co-founder actually ships with. The genuinely differentiating detail that few reviews flag is that Visual Edits (every colour change, font tweak, and spacing adjustment) consume zero credits.

What Lovable actually offers

| Capability | What it actually does |

|---|---|

| Generation stack | React + Tailwind frontend; Supabase backend with database, auth, storage; Stripe for payments. All wired up natively. |

| Lovable Cloud (2.0) | Built-in Supabase-based backend. Cloud projects launched mid-2025 removed the need to configure Supabase externally. |

| Visual Edits | Direct UI manipulation, click-and-modify on layout, colour, typography. Zero credit cost in user reports across 2026 reviews. |

| GitHub sync | Bidirectional. Code stays as React/TypeScript and can be hardened in Cursor or VS Code, then synced back. |

| Agentic Mode (2.0) | Multi-step autonomous edits with what Lovable reports as 91% error reduction over single-prompt mode. |

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| Free tier | 5 daily credits, ~30/month maximum. Sufficient for evaluation, not for serious building. |

| Pro plans | $25/mo (100 credits + 5 daily, custom domain, badge removal), $100/mo (400 credits). |

| Business and Enterprise | Business $50/mo adds SSO, data training opt-out, role-based limits. Enterprise custom. |

| G2 rating | 4.6 / 5 from 153 reviews. |

| Credit cost per action | Simple edits ~0.5 credits; complex features (auth, payments) ~1.2 credits per Lovable's own publicly listed pricing logic. |
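
A rough way to sanity-check a plan against these per-action costs is sketched below. The cost constants are the approximate figures from the table, and Visual Edits are priced at zero as reported above. The function names and the sample week are hypothetical, chosen only to illustrate the arithmetic.

```python
# Per-action credit costs quoted in the pricing table above (approximate).
SIMPLE_EDIT_COST = 0.5
COMPLEX_FEATURE_COST = 1.2
VISUAL_EDIT_COST = 0.0  # Visual Edits are reported to consume no credits


def credits_needed(simple_edits: int, complex_features: int, visual_edits: int = 0) -> float:
    """Estimate the credits a planned batch of work would consume."""
    total = (
        simple_edits * SIMPLE_EDIT_COST
        + complex_features * COMPLEX_FEATURE_COST
        + visual_edits * VISUAL_EDIT_COST
    )
    return round(total, 2)


def credits_left(plan_credits: float, used: float) -> float:
    """Credits remaining on the plan; a negative value means the plan is exceeded."""
    return round(plan_credits - used, 2)


# Hypothetical build week (illustrative numbers, not measured usage).
week = credits_needed(simple_edits=40, complex_features=25, visual_edits=60)
remaining = credits_left(plan_credits=100, used=week)
print(f"credits used: {week}, remaining on a 100-credit plan: {remaining}")
```

Under these assumptions the sixty visual tweaks add nothing to the total, so the budget is driven entirely by prompted edits and features.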

Strengths and weaknesses, by use case

| Strengths | Weaknesses |

|---|---|

| Visual Edits cost zero credits; meaningful gap against Replit's effort-based billing on iteration-heavy weeks. | Free tier is too thin for genuine project work; Pro is effectively the entry point. |

| Bidirectional GitHub sync prevents lock-in; engineering co-founders can take over cleanly. | Long debugging sessions burn credits fast; user-reported workaround is exporting to local IDE. |

| Full-stack output: frontend, auth, database, payments in one prompt cycle. | Cloud region is locked once chosen; cannot migrate Cloud to Supabase later. |

| Strong template library and Figma import keep design quality higher than typical no-code output. | Edge cases outside the standard pattern (background jobs, complex permissions) require code-level intervention. |

Field observation: the workflow that has emerged on student-built MVPs is a Frankenstein chain. Prompt the app in Lovable, sync to GitHub, harden in Cursor, push back. The portfolio piece is no longer pure anything, and that is the point.

Claude and ChatGPT: the lab partner who never sleeps

RAND's December 2025 panel found 53% of US students using ChatGPT for schoolwork; Google Gemini use doubled to 28% in a single year. Claude's user base is smaller, but its composition is unusual. Anthropic's analysis of one million Claude student conversations found computer science students producing 36.8% of all traffic against 5.4% of US degrees, a roughly 6.8-fold overrepresentation (36.8 / 5.4) that compounds across every assignment.

What students actually use these for

| Capability | What it actually does |

|---|---|

| Drafting and revision | 33% of US students use AI for drafting per RAND. Turnaround on essays drops by half or more. |

| Debugging code | Median resolution time falls from hours to minutes. Stack Overflow web traffic is down more than 50% since 2022 per Similarweb. |

| Concept clarification | Personalised explanations at any reading level. Reduces office-hour queueing pressure. |

| Mock interviews and prep | Unlimited reps with custom rubrics. Narrows the prep gap with the candidate who has a wealthy parent. |

| File analysis and research | Multimodal upload: PDFs, images, code, spreadsheets. Used heavily in literature reviews and data wrangling. |

Pricing and user ratings

| Pricing & ratings snapshot | Detail (latest, Q1-Q2 2026) |

|---|---|

| ChatGPT pricing | Free tier with daily limits, Plus $20/mo, Pro $200/mo, Team $25-30/seat/mo, Edu pricing for institutions. |

| Claude pricing | Free tier, Pro $20/mo, Max $100 to $200/mo, Team and Enterprise custom. |

| ChatGPT G2 rating | 4.7 / 5; the most-added skill on LinkedIn in 2025 with 60% of new skill additions among job-seekers. |

| Claude G2 rating | 4.7 / 5; smaller review base but skewed heavily technical. |

| Stack Overflow Developer Survey 2025 | 84% of respondents now use or plan to use AI tools, up 14 points in two years; roughly half of professional developers use them daily. |

Strengths and weaknesses, by use case

| Strengths | Weaknesses |

|---|---|

| Free tiers are genuinely useful and cover most undergraduate workflows. | 67% of students believe AI is harming their critical thinking, per RAND December 2025; up from 54% the previous May. |

| Always available; replaces the senior friend who used to explain concepts. | Hallucinated APIs, invented citations, and confident wrong answers persist across all major models. |

| Levels access to interview prep and study help across income brackets. | Voice flattening: undergraduate writing across a single class starts reading identically. |

| Reduces blank-page paralysis and cuts revision cycles dramatically. | Models go easier than real interviewers and inflate confidence ahead of the actual screen. |

Field observation: the technical cohort using Claude at nearly seven times the rate its share of degrees would predict is compounding an advantage every week. The students still pretending not to use any of these tools are not winning anything.

Where this leaves a 2026 student portfolio

LinkedIn's 2025 skills data is the bluntest aggregate signal: ChatGPT was the most-added skill on the platform with 60% of new additions among job-seekers; prompt engineering hit 38%. The Stepstone/ISE 2025 graduate recruiter survey put IT, digital, and AI skills at the top of what employers want. IntuitionLabs' 2025 review of big tech hiring noted that screening criteria have tightened around two markers: a public GitHub history with real commits, and a portfolio of shipped, deployed projects, regardless of how they were built.

A clean LinkedIn with eight Forage simulations and zero shipped code is now a weaker profile than a messy GitHub with five real, deployed, AI-augmented projects, even when the GitHub belongs to a freshman. Microsoft's 2025 cross-generational survey: 87% of Gen Z office workers say AI has helped their careers, against 76%

Closing read

“Build experience” used to mean show up somewhere reputable for a summer and be useful. In 2026 it means assemble an artefact stack a recruiter can audit in twenty minutes. The six tools above are not interchangeable. Forage builds context. Copilot and Cursor build craft. Replit and Lovable build evidence on projects where speed matters more than polish. Claude and ChatGPT build the underlying thinking that lets the rest of the stack mean anything. Used together, the assembly is more compelling than the resume that came out of a 12‑week summer at a Fortune 500. Used naively, by a student who never debugged anything they did not delegate, the assembly is a sandcastle; the work has not disappeared, it has moved upward into specifying, judging, and explaining.

Sitting next to that stack, a learning hub like Timtis quietly changes what students actually do with these tools. Instead of bouncing between Forage certificates, half-finished Replit demos, and scattered ChatGPT chats, a student can work through structured paths that force them to turn experiments into shippable artefacts and written reflections. That kind of environment does not replace internships or projects, but it does shape them: a GitHub repository that follows a Timtis-style brief, a Lovable prototype built against a clearly defined user story, or a Claude-powered research memo that cites its sources rigorously. When a recruiter opens the portfolio, they are not just seeing “used AI tools,” they are seeing a trail of scoped problems, deliberate choices, and explanations that hold up under questions.
