There is a strange new ritual unfolding in dorm rooms worldwide. Before cracking a textbook, before glancing at the assignment brief, before so much as skimming the syllabus, a student opens a chat window and types a question. Multiply that small habit a few hundred million times a week, and the quiet revolution running through higher education comes into focus.

The shift is not coming. It already happened. The question worth asking now is whether students actually understand what changed, because most are using AI without seeing the larger productivity equation around it.

Why This Shift Is Different From Every Tech Wave Before It

Calculators changed math homework. Google changed research. Wikipedia changed the term paper. Each of those tools sped up a single step without disturbing the workflow itself.

Generative AI does something stranger. It compresses the distance between “having a question” and “having an answer” so dramatically that the act of thinking starts to feel optional. That is the deeper change hiding behind every adoption statistic.

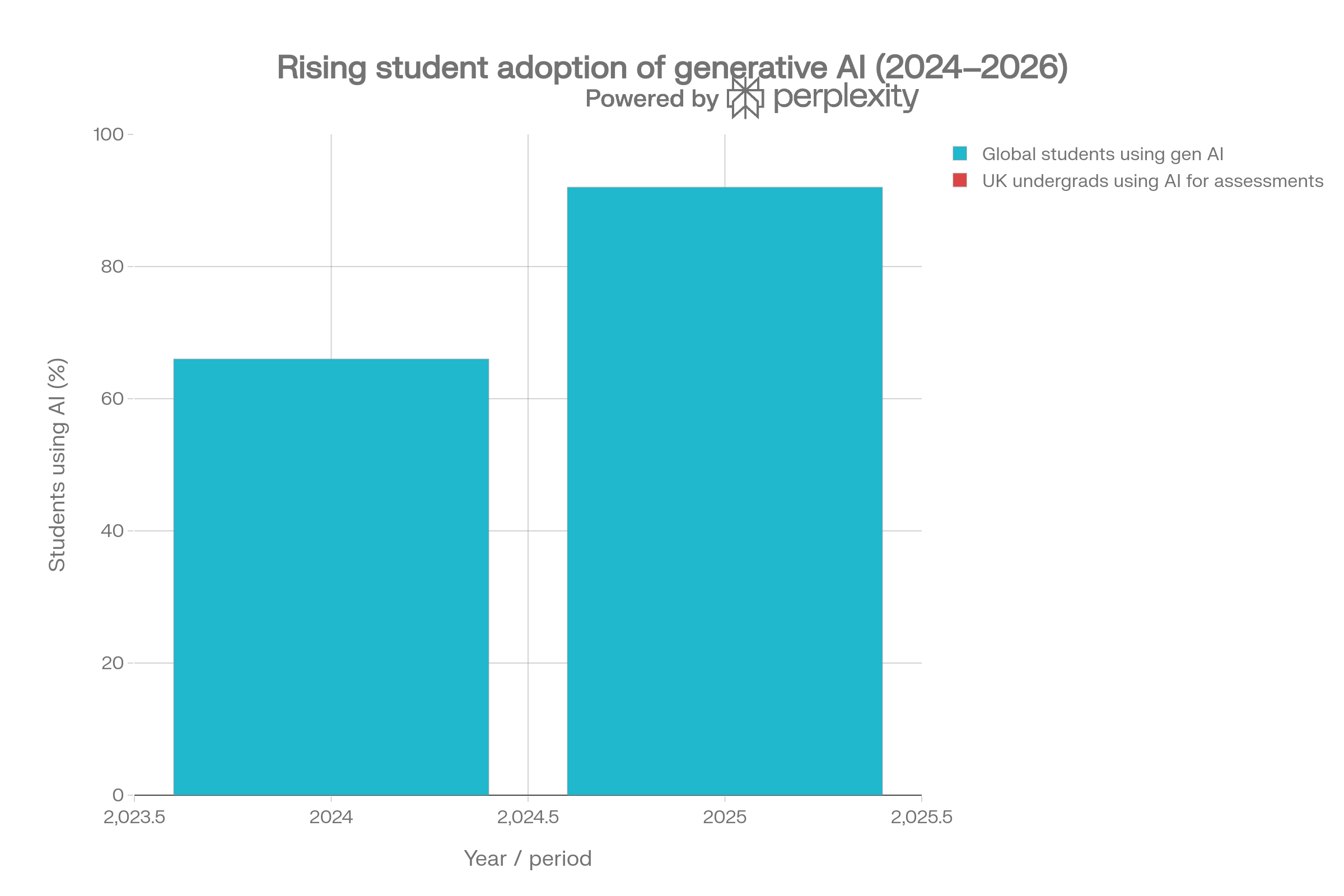

According to DemandSage's 2026 compilation of global education data, student adoption of generative AI jumped from 66% in 2024 to 92% in 2025. By early 2026, roughly 86% of higher-education students rely on AI as their primary research and brainstorming partner. The UK's Higher Education Policy Institute (HEPI) tracked a parallel leap: undergraduate use of AI for assessments climbed from 53% to 88% in a single academic year.

A Quick Snapshot of the Student AI Landscape

| Indicator | Figure | Source |
|---|---|---|
| Students using AI globally (2025) | 92% | DemandSage |
| Higher-ed students using AI as primary research partner | 86% | DemandSage, 2026 |
| UK undergrads using AI for assessments | 88% | HEPI Student GenAI Survey |
| Students relying on ChatGPT regularly | 66% | Programs.com |
| Schools with formal AI guidelines | ~10% | UNESCO 2025 audit |
| Students who feel underskilled with AI | 58% | Programs.com |
| Education AI market size by 2027 | $20B+ | Industry projections |

The real headline buried in these numbers is not adoption. It is preparation. Nearly every student is using these tools. Almost none received structured training in how to use them well.

The New Definition of “Being Productive”

Old productivity was about output speed: pages drafted, problems solved, chapters read. New productivity is about cognitive allocation. The question shifts from “how much can a student produce in three hours” to “which of these tasks should a human brain even be doing in the first place.”

That mental shift sounds abstract. Look at what it actually means on a Tuesday night.

Old Workflow vs New Workflow: A Side-by-Side Look

| Task | Pre-AI Approach | AI-Native Approach |
|---|---|---|
| Reading a 40-page paper | Highlight, take notes, re-read | Structured summary, ask follow-ups, verify key claims |
| Essay starting point | Outline from scratch, stare at blank page | Generate three angle options, pick one, draft in own voice |
| Exam prep | Re-read notes, hope for the best | Convert notes into flashcards and adaptive practice quizzes |
| Coding assignment | Stack Overflow loop, trial and error | Pair-program with model, debug interactively |
| Research lit review | Manually scan databases | Use citation-grounded tool to map terrain, then read deeply |

The pre-AI student spent roughly 80% of available time on retrieval and 20% on synthesis. The AI-native student flips that ratio. That is the productivity gain in one sentence.
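To make the flipped ratio concrete, here is a minimal sketch that splits one study session both ways. The 180-minute session length is an assumption chosen for illustration (it echoes the "three hours" framing earlier in this section), and the 80/20 figures are the rough estimates quoted above, not measured data. The function name `split_session` is hypothetical.

```python
def split_session(total_minutes: int, retrieval_pct: int) -> dict[str, float]:
    """Split a study session into retrieval and synthesis minutes."""
    if not 0 <= retrieval_pct <= 100:
        raise ValueError("retrieval_pct must be between 0 and 100")
    retrieval = total_minutes * retrieval_pct / 100
    return {"retrieval": retrieval, "synthesis": total_minutes - retrieval}


SESSION_MINUTES = 180  # assumed three-hour session, for illustration only

pre_ai = split_session(SESSION_MINUTES, 80)     # roughly 80% retrieval
ai_native = split_session(SESSION_MINUTES, 20)  # the flipped ratio

print(pre_ai)     # {'retrieval': 144.0, 'synthesis': 36.0}
print(ai_native)  # {'retrieval': 36.0, 'synthesis': 144.0}
```

Under these assumptions, the same three hours yields four times as many minutes of synthesis, which is the whole argument in numeric form.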

The Hidden Cost Most Students Don't Notice

Productivity has a tax, and nobody hands students the receipt. Research summarized by Chanty in early 2026 suggests that habitual reliance on instant answers can dull critical thinking and weaken memory retention, particularly among students and early-career professionals. When uncertainty gets resolved in two seconds, the mental muscle built for ambiguity quietly atrophies.

There is a cultural side effect baked in too. Speed stops being a bonus and becomes the floor. A draft that once took three days feels overdue if it is not submitted by tomorrow morning, because a model can technically produce one in nine minutes. The gain in raw speed shows up not as relief but as more decisions packed into shorter windows.

The Tradeoff Map

| What AI Sharpens | What AI Quietly Dulls |
|---|---|
| Speed of first drafts | Patience for slow thinking |
| Range of ideas considered | Confidence in independent judgment |
| Access to expert-level explanations | Tolerance for productive confusion |
| Editing and formatting precision | Original voice and stylistic risk |
| Volume of practice questions generated | Long-term retention of unprompted recall |

Students who thrive in 2026 understand this tax and pay it deliberately. They choose where to preserve the mental friction, because friction is where learning lives.

A Tale of Two Students

Picture two seniors in the same political theory course.

Student A opens ChatGPT, pastes the essay prompt, lightly edits the output, and submits. Time invested: 25 minutes. Grade: a respectable but unremarkable B-minus. Long-term retention of the argument: roughly zero.

Student B uses Claude to summarize three assigned readings, drafts the essay by hand, then asks the same model to argue against the thesis. After the model attacks the draft, Student B revises the three weakest paragraphs. Time invested: 4 hours. Grade: A-minus. Long-term retention: solid enough to reference in a job interview six months later.

Both students “used AI.” Only one actually leveraged it. That gap is the real productivity divide on every campus today.

What Students Are Actually Doing With AI

The cheating panic in headlines does not match what survey data shows. First Page Sage's early-2026 breakdown of typical AI queries, cross-referenced with HEPI findings, reveals a far more mundane reality.

Where Student AI Effort Actually Goes

| Use Case | Share of Activity | Visual |
|---|---|---|
| Concept explanation and research | 37% | █████████████████████ |
| Academic research and reading prep | 19% | ██████████ |
| Coding and technical assistance | 14% | ███████ |
| Writing assistance (drafting/email) | 14% | ███████ |
| Brainstorming and ideation | 11% | ██████ |
| Editing, formatting, polish | 5% | ███ |

The dominant use case is cognitive scaffolding, not ghostwriting. HEPI's UK data tells the same story: students most often turn to AI to explain concepts, summarize articles, and suggest research directions. The “AI wrote my essay” narrative drives clicks. The reality is closer to “AI helped a student figure out what to write about.”

The exception is meaningful, though. Roughly 19% of students leave AI-generated text completely unedited in submitted work. That slice is what gets institutions nervous, and reasonably so.

The Tool Stack That Holds Up in 2026

Reviewer fatigue is real. Every other blog claims to rank the “10 best AI tools for students,” and most are recycled affiliate lists with a pastel color scheme. The tools that actually keep showing up in real student workflows are fewer in number and more specialized in purpose.

The Practical Student AI Stack

| Tool | Sweet Spot | Free Access | Why It Earns a Spot |
|---|---|---|---|
| ChatGPT | General drafting, explanation, exploration | Yes | Broadest capability, strong on quick clarification |
| Claude | Long PDFs, careful comparison, natural writing | Yes (15-40 messages per 5 hours) | Best long-document handling and natural tone |
| Google Gemini | Workspace tasks, in-doc help | Free Premium for verified US college students | Native integration with Docs, Slides, Sheets |
| NotebookLM | Source-grounded study from uploads | Free with Google account | Answers locked to uploaded materials, low hallucination risk |
| Perplexity | Research with inline citations | Yes | Transparent sourcing for early-stage research |
| Grammarly | Final-pass editing and tone | Yes | Sentence-level polish without rewriting voice |
| Quizlet (Q-Chat) | Active recall and practice testing | Yes (limited) | Conversational quizzing that adapts |
| Otter.ai | Lecture transcription | Yes (limited minutes) | Real-time capture, searchable transcripts |

The stronger move is not picking one tool. It is pairing them. A workflow that keeps showing up among high-performing students looks like this:

1. Record the lecture with Otter

2. Upload the transcript and slides into NotebookLM for source-locked review

3. Have Claude or ChatGPT generate practice questions and explain weak spots

4. Run essay drafts through Grammarly for final polish

5. Use Quizlet or a spaced-repetition app for retention

Students who build a workflow like this report study-time savings in the 30 to 50% range, with grades holding steady or improving.
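For readers curious about what the spaced-repetition step in that workflow does under the hood, here is a minimal sketch of a Leitner-style scheduler. This is one common approach, not a description of how Quizlet or any specific app works. The interval values, the `Card` fields, and the sample flashcard are all assumptions made up for illustration.

```python
from dataclasses import dataclass

# Assumed review intervals in days for boxes 0..4; real apps tune these.
INTERVALS_DAYS = [1, 2, 4, 8, 16]


@dataclass
class Card:
    prompt: str
    answer: str
    box: int = 0      # higher box = better known = reviewed less often
    due_day: int = 0  # day number on which the card is next due


def review(card: Card, correct: bool, today: int) -> Card:
    """Promote the card one box on a correct answer; reset to box 0 on a miss."""
    if correct:
        card.box = min(card.box + 1, len(INTERVALS_DAYS) - 1)
    else:
        card.box = 0
    card.due_day = today + INTERVALS_DAYS[card.box]
    return card


def due_cards(cards: list[Card], today: int) -> list[Card]:
    """Return the cards whose review date has arrived."""
    return [c for c in cards if c.due_day <= today]


# Hypothetical flashcard for a political theory course.
card = Card("Define negative liberty", "Freedom from external interference")
review(card, correct=True, today=0)
print(card.box, card.due_day)  # 1 2
review(card, correct=False, today=2)
print(card.box, card.due_day)  # 0 3
```

The design choice worth noticing is that a missed card drops all the way back to the first box. That is the deliberate friction the article argues for: the material a student finds hardest comes back soonest.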

Quick Insights Worth Keeping

• The adoption fight is over. AI is in every backpack. The new contest is fluency, not access.

• Productivity now means choosing which mental work to delegate and which to protect.

• Heavy passive use erodes retention. Deliberate active use compounds it.

• Free tiers cover roughly 90% of academic needs. The urge to pay for “premium” is mostly marketing.

• The fastest-growing skill gap on campus in 2026 is not coding or data analysis. It is prompt fluency.

How to Actually Use AI Like a Top Student

Students producing the best work in 2026 share a few habits that have almost nothing to do with which model they chose.

Prompt with constraints, not requests. “Write me an essay on inflation” produces noise. “Compare three competing economist explanations for 2024-2026 inflation, list one weakness in each, and flag where the data is contested” produces something worth arguing with.
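One way to make the constraint habit stick is to template it. The sketch below assembles a task and a numbered list of constraints into a single prompt string, and refuses to build a prompt with no constraints at all. The function name `build_prompt` and the output layout are assumptions for illustration; no particular model requires this format.

```python
def build_prompt(task: str, constraints: list[str]) -> str:
    """Combine a task with numbered constraints into one prompt string."""
    if not constraints:
        raise ValueError("add at least one constraint before sending the prompt")
    lines = [task.strip(), "", "Constraints:"]
    lines += [f"{i}. {c.strip()}" for i, c in enumerate(constraints, start=1)]
    return "\n".join(lines)


prompt = build_prompt(
    "Compare three competing economist explanations for 2024-2026 inflation.",
    [
        "List one weakness in each explanation.",
        "Flag where the data is contested.",
    ],
)
print(prompt)
```

The empty-list check is the point of the exercise: it forces a pause to decide what a useful answer would have to contain before anything is sent.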

Verify every citation. Models still invent sources with terrifying confidence. Cross-check anything bound for a bibliography against Google Scholar or a real database. The roughly 19% of students leaving raw output in submitted work are the ones most exposed to losing marks for fabricated references.
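The cross-check itself has to be done by hand, but tracking what has and has not been checked is easy to automate. The sketch below assumes a student keeps a plain list of titles personally confirmed in Google Scholar or a library database, then flags anything in the draft bibliography that is not on it. The function names and the placeholder titles are hypothetical, and the matching is deliberately simple (case and punctuation are ignored, nothing fuzzier).

```python
import re


def normalize(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace for comparison."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def unverified(cited_titles: list[str], verified_titles: list[str]) -> list[str]:
    """Return cited titles that are missing from the hand-verified list."""
    verified = {normalize(t) for t in verified_titles}
    return [t for t in cited_titles if normalize(t) not in verified]


# Placeholder titles only; substitute the real bibliography.
draft_bibliography = ["Placeholder Title A", "Placeholder Title B: A Study"]
confirmed_by_hand = ["placeholder title a"]

print(unverified(draft_bibliography, confirmed_by_hand))
# ['Placeholder Title B: A Study']
```

Anything the function returns still needs a manual lookup. A title matching the list only means someone checked it, so the list is worth exactly as much as the checking behind it.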

Rewrite, never paste. The rewriting step is where actual learning happens. Pasted output is academic fast food: filling, fast, and forgotten by next week.

Use source-grounded tools for high-stakes work. NotebookLM and Perplexity reduce hallucination risk because they tie answers to sources and show where each claim came from, which makes errors easier to spot. For research that ends up in a graded paper, that matters.

Treat AI as a sparring partner, not a butler. Asking a model to attack a draft sharpens thinking. Asking it to validate a draft produces flattery in essay form.

The Bigger Picture: Why This Matters After Graduation

The professional world that current students will walk into already runs on AI fluency. ChatGPT alone crossed 900 million weekly users in 2026, and workplace data from the same period shows average regular users saving 40 to 60 minutes per day on routine tasks. Enterprise messages processed through custom AI workflows grew roughly nineteenfold year over year.

Employers in 2026 are no longer impressed that a candidate can use ChatGPT. They want to see how that candidate uses it differently from everyone else who can. The fluency gap that started in dorm rooms is about to show up in salary offers.

That makes this productivity shift more than a study trick. It is a career signal, building quietly across four years of college choices. The student who treats AI as a calculator for tedious work and keeps their own thinking sharp will outperform the one who treats it as a ghostwriter and arrives at graduation unable to think without a chat window open.

The Bottom Line

The AI productivity shift is not a forecast. It is the current operating system of student life. Treating AI as a calculator, a tool that handles routine work so the brain can focus on harder thinking, builds graduates ready for the world they are entering. Treating it as a ghostwriter produces diplomas with nothing behind them.

That is why the next advantage in education will come less from access to AI and more from learning discipline around it. Students who use structured learning spaces like Timtis to understand AI as a study, workflow, and problem-solving layer are closer to the real shift than those simply collecting prompts.

Technology stays neutral. Habits do not. That distinction, more than any tool list, pricing tier, or trending model, is the productivity shift worth understanding before the next semester starts.
